
QUICK ANSWER

For AI automation that actually scales in 2026, n8n is the strongest choice for technical teams that run more than 10,000 executions a month, build complex AI agent workflows, or need self-hosted data control. Zapier wins for non-technical users running simple tasks. Make sits in the middle and is ideal for visual, mid-volume workflows under 50,000 operations per month.

Choosing the wrong automation platform is the most expensive mistake AI teams make in 2026. The platform that worked for 500 monthly tasks collapses at 50,000. Pricing models that looked cheap in a proof of concept become budget-killers at scale. And the AI agent workflows you actually need (vector retrieval, multi-step LLM chains, and human-in-the-loop approval) are not equally supported across n8n, Zapier, and Make. This guide compares the three platforms head-to-head on the dimensions that determine whether your AI automation stack scales or stalls.

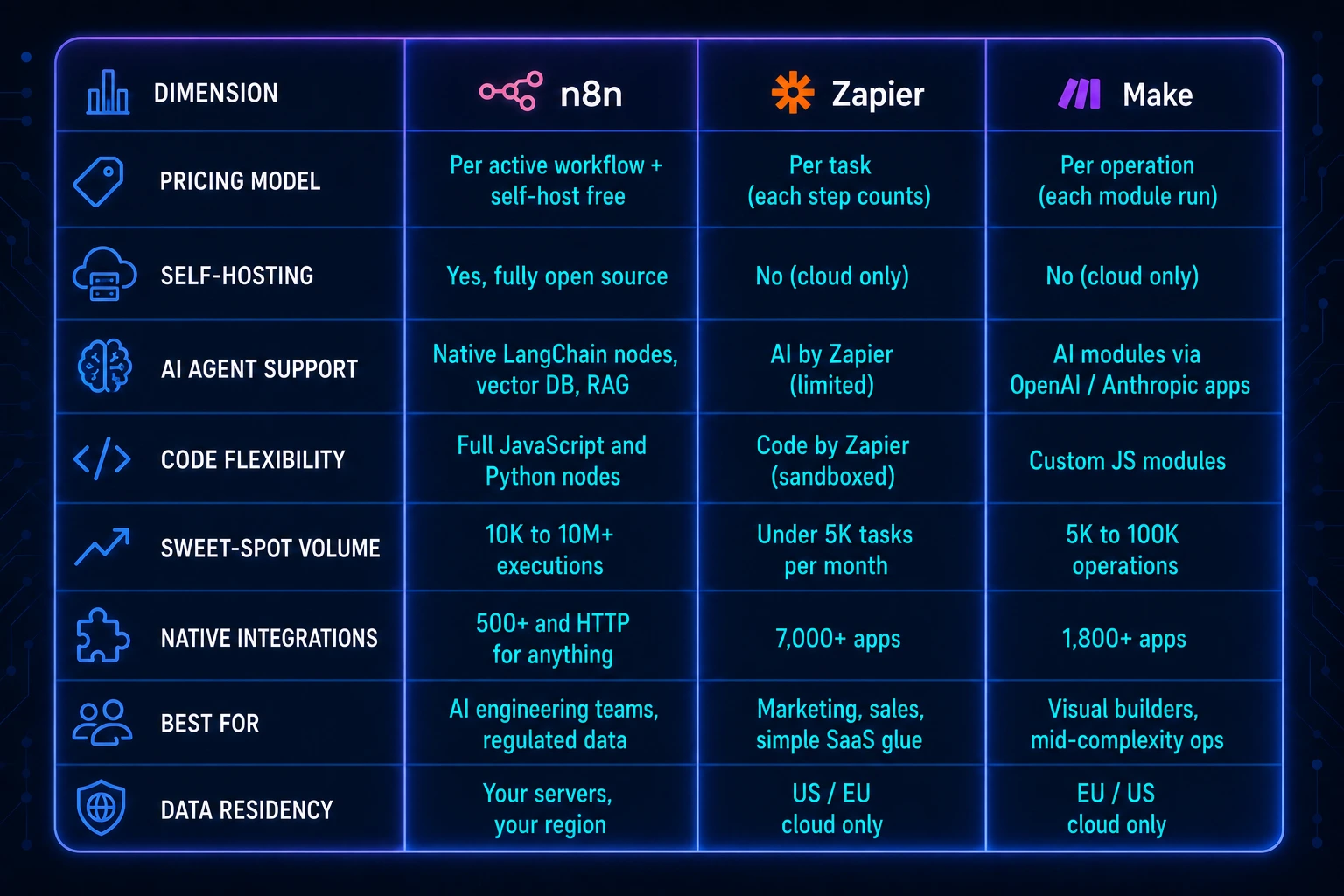

Comparison Table: n8n vs Zapier vs Make at a Glance

Why Pricing Models Make or Break AI Automation at Scale

The fastest way to burn an AI automation budget in 2026 is to choose per-task pricing for workflows with many steps. A single AI agent loop with retrieval, classification, summarization, and a webhook back to your CRM is four billable tasks on Zapier. Run that 50,000 times a month and you are paying for 200,000 tasks, where a per-execution model would count 50,000 runs.
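The same arithmetic, generalized, fits in a few lines of Python. This is a simplified model, assuming every step is billed as one unit under per-step pricing and each complete run as one unit under per-execution pricing; real plans add tiers, overages, and exceptions (Zapier, for example, does not bill the trigger). `billable_units` is a hypothetical helper, not any vendor's API.

```python
def billable_units(steps_per_run: int, runs_per_month: int, model: str) -> int:
    """Count billable units per month under a simplified pricing model."""
    if model == "per_step":  # tasks or operations: every step is billed
        return steps_per_run * runs_per_month
    if model == "per_execution":  # one unit per complete workflow run
        return runs_per_month
    raise ValueError(f"unknown pricing model: {model!r}")


# The four-step agent loop from the text, run 50,000 times a month.
per_step = billable_units(4, 50_000, "per_step")
per_execution = billable_units(4, 50_000, "per_execution")
print(f"{per_step:,} vs {per_execution:,} units ({per_step // per_execution}x)")
```

The ratio between the two models is simply the step count, which is why longer workflows widen the gap.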

Recent industry data shows just how stark the gap is. According to G2 reviews aggregated across automation platforms in 2025, the median enterprise team running AI workflows reported a 3.4x cost difference between per-task and per-execution pricing once monthly volume exceeded 25,000 runs (Source: G2 Automation Software Category Report, 2025).

Survey data points the same way: 67 percent of enterprise teams that started on Zapier eventually migrated at least one workflow tier to n8n or Make for cost reasons (Source: Workflow Automation Benchmark Report by Tray.io, 2025). And Gartner forecasts that by the end of 2026, 70 percent of new enterprise applications will use AI-augmented automation, up from less than 10 percent in 2020 (Source: Gartner Hyperautomation Forecast, 2024).

How n8n pricing actually works

n8n Cloud bills per workflow execution, not per task or per step: one complete run counts once, whether it passes through 3 nodes or 30. On the self-hosted version, the software itself is free under the Sustainable Use License and you pay only for the server it runs on, so a workflow that fires 100,000 times a month costs the same in licensing as one that fires 100 times. This is why AI engineering teams running high-volume agent loops gravitate to it.

How Zapier pricing actually works

Zapier counts each action step inside a Zap as a billable task (the trigger itself does not count), so a Zap with six action steps that runs 1,000 times burns 6,000 tasks. Plans in 2026 start around 19.99 USD per month for 750 tasks and scale to enterprise tiers costing thousands per month. The platform shines for workloads under 5,000 tasks a month, where engineering effort costs more than task fees.

How Make pricing actually works

Make charges per operation, where one operation equals one module execution. That is cheaper than Zapier per step but more expensive than n8n at scale. Most teams fit comfortably within Make's 100,000-operation tier in 2026; above that, per-operation costs start to bite.


A real cost comparison at 100,000 monthly executions

Take a representative AI workflow: 8 steps including a vector lookup, two LLM calls, a CRM update, and an alert. Run 100,000 times per month, it would consume 800,000 tasks on Zapier (around 2,000 to 2,800 USD per month on the Team plan) and roughly 800,000 operations on Make (around 700 to 1,100 USD per month at the Pro tier with overages). n8n Cloud counts the same load as 100,000 executions, because each run is billed once regardless of its step count, and self-hosted n8n on a 60 USD per month VPS handles the entire load with headroom. The pricing gap is not 20 percent. It is often 10x or more once volume crosses six figures.
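A rough way to sanity-check those figures is to back out an implied per-unit rate from the low end of each quoted range and scale it linearly. The sketch below does that. The rates are derived from this article's own numbers rather than from published price lists, and linear scaling is a simplifying assumption, since real plans are priced in tiers; `monthly_costs` is a hypothetical helper.

```python
# Implied per-unit rates (assumption): low end of each quoted monthly range
# divided by the 800,000 units that range was quoted for.
ZAPIER_USD_PER_TASK = 2_000 / 800_000
MAKE_USD_PER_OPERATION = 700 / 800_000
SELF_HOSTED_VPS_USD = 60.0  # flat monthly server cost quoted in the text


def monthly_costs(steps: int, runs: int) -> dict[str, float]:
    """Estimate monthly cost, scaling per-unit rates linearly (a simplification)."""
    units = steps * runs  # per-step billing: every step of every run counts
    return {
        "zapier": units * ZAPIER_USD_PER_TASK,
        "make": units * MAKE_USD_PER_OPERATION,
        "n8n_self_hosted": SELF_HOSTED_VPS_USD,  # does not grow with volume
    }


costs = monthly_costs(steps=8, runs=100_000)
for platform, usd in costs.items():
    ratio = usd / costs["n8n_self_hosted"]
    print(f"{platform:>16}: {usd:>8,.0f} USD/month ({ratio:.1f}x self-hosted)")
```

With these inputs, the per-step platforms land at roughly 12x (Make) to 33x (Zapier) the self-hosted server cost. The model deliberately leaves out DevOps time, which belongs in a total-cost-of-ownership view rather than a subscription comparison.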

AI Agent Capabilities: Where the Three Platforms Diverge in 2026

Routine app-to-app automation is a solved problem. The real 2026 differentiator is whether a platform can run production-grade AI agents: the kind that retrieve context from a vector database, call an LLM with tool use, validate output, and loop until a goal is reached. This is where the gap between the three platforms widens dramatically.
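The agent loop described above can be sketched as a small Python function. It is a framework-agnostic illustration, not how n8n, Zapier, or Make implement agents internally. `retrieve`, `call_llm`, and `is_done` are hypothetical callables standing in for a vector-store query, an LLM call, and a goal check, and the step counter simply tallies each retrieval, model call, and validation.

```python
from __future__ import annotations

import json
from typing import Callable


def run_agent(
    goal: str,
    retrieve: Callable[[str], list[str]],
    call_llm: Callable[[str, list[str]], str],
    is_done: Callable[[dict], bool],
    max_iterations: int = 5,
) -> tuple[dict | None, int]:
    """Retrieve context, call the LLM, validate its output, and loop until done.

    Returns the last valid result (or None) and the number of steps executed,
    counting each retrieval, LLM call, and validation as one step.
    """
    query, result, steps = goal, None, 0
    for _ in range(max_iterations):
        context = retrieve(query)        # e.g. a vector-store lookup
        raw = call_llm(query, context)   # e.g. a chat completion with tools
        steps += 3                       # retrieval + LLM call + validation
        try:
            parsed = json.loads(raw)     # validation: output must parse as JSON
        except json.JSONDecodeError:
            continue                     # malformed output: go around again
        if not isinstance(parsed, dict):
            continue                     # valid JSON but not an object
        result = parsed
        if is_done(parsed):
            break                        # goal reached
        query = parsed.get("next_query", goal)
    return result, steps


# Usage with stubs: the first reply is malformed, the third meets the goal.
replies = iter(['not json', '{"done": false, "next_query": "refine"}',
                '{"done": true, "answer": "ok"}'])
result, steps = run_agent(
    "summarize ticket",
    retrieve=lambda q: [f"context for {q}"],
    call_llm=lambda q, ctx: next(replies),
    is_done=lambda r: r.get("done") is True,
)
print(result, steps)
```

The stubbed run takes three iterations and nine steps because the first reply is malformed and gets retried. Counting steps this way shows how quickly iterative agents multiply billable units under per-step pricing.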

n8n: built for AI engineering

n8n shipped native LangChain nodes in 2024 and has expanded its AI feature set every quarter since. As of 2026, the platform offers built-in nodes for vector stores (Pinecone, Qdrant, Weaviate, Supabase), agent orchestration with tool calling, structured output parsers, retrieval chains, and human-in-the-loop approval steps. Its Code node runs custom JavaScript or Python, so teams can call models that do not yet have a first-party node. For AI development companies building production agent systems, this is why n8n has become the default choice.

A real example: NeuraMonks recently shipped a content automation system that ingests RSS feeds, classifies topic relevance with an LLM, retrieves brand voice context from a vector store, generates draft posts, runs a fact-check pass, and pushes to WordPress with SEO metadata. The full pipeline runs entirely on n8n with no custom infrastructure. Read the full breakdown here:

NeuraMonks AI Blog Post Generation Automation Case Study

Zapier: AI as bolt-on, not foundation

Zapier introduced AI by Zapier and Zapier Agents in 2024, which work well for adding an LLM step to a simple flow (summarize this email, classify this lead). The architecture, however, is fundamentally task-based. There is no native vector database, multi-turn agent loops have to be simulated with workarounds, and pricing penalizes loops because every iteration consumes more billable tasks.

Make: visual AI for mid-complexity

Make ships first-party modules for OpenAI, Anthropic, and other LLM providers, plus a Vector module for embedding storage. The visual builder is genuinely good for prototyping AI flows. The ceiling is real, though. Once an agent needs more than a handful of conditional branches and retries, the visual canvas becomes harder to maintain than code.

Observability and debugging at scale

AI workflows fail in ways that traditional automations do not. An LLM returns malformed JSON. A vector query times out. A tool call hits a rate limit mid-loop. The platform you pick determines how fast you find and fix these failures. n8n provides full execution logs, replay from any node, and structured error workflows that route failures to a separate handler. Zapier's history is task-by-task and replay options are limited on lower tiers. Make's visual execution log is excellent for debugging single runs but becomes unwieldy when you need to triage 500 failed runs from yesterday. For production AI systems, debuggability is not a nice-to-have. It is the difference between a 15-minute incident and a 4-hour one.
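Two of the failure modes named above, rate limits and malformed JSON, have well-known mitigations: retry with exponential backoff, validate output before using it, and send anything that still fails to a separate error queue for triage. The Python sketch below shows that pattern in plain code. `RateLimitError`, `call_with_retry`, and `process_run` are hypothetical names, and the dead-letter list stands in for whatever error workflow or queue your platform provides.

```python
from __future__ import annotations

import json
import time
from collections import Counter
from typing import Callable


class RateLimitError(Exception):
    """Hypothetical error a model or tool client raises when throttled (HTTP 429)."""


def call_with_retry(
    fn: Callable[[], str],
    retries: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call fn, retrying on RateLimitError with exponential backoff."""
    attempt = 0
    while True:
        try:
            return fn()
        except RateLimitError:
            if attempt >= retries:
                raise  # retries exhausted: let the caller route the failure
            sleep(base_delay * 2 ** attempt)  # 0.5 s, 1 s, 2 s, ...
            attempt += 1


def process_run(
    run_id: str,
    fn: Callable[[], str],
    dead_letter: list[dict],
    sleep: Callable[[float], None] = time.sleep,
) -> dict | None:
    """Run one LLM step; return parsed JSON or record the failure for triage."""
    try:
        data = json.loads(call_with_retry(fn, sleep=sleep))
        if not isinstance(data, dict):
            raise ValueError("expected a JSON object")
        return data
    except (RateLimitError, ValueError) as exc:  # JSONDecodeError is a ValueError
        dead_letter.append({"run_id": run_id, "error": type(exc).__name__, "detail": str(exc)})
        return None


# Usage: three simulated LLM outputs, two of them unusable.
dead_letter: list[dict] = []
for i, output in enumerate(['{"ok": true}', "not json", "[1, 2]"]):
    process_run(f"run-{i}", lambda output=output: output, dead_letter)
print(Counter(entry["error"] for entry in dead_letter))
```

Grouping dead-letter entries by error type is the plain-code equivalent of triaging yesterday's failed runs: one count tells you whether you are fighting throttling or bad model output.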

Data Residency, Security, and Compliance for US Enterprise

US enterprise buyers in regulated industries (healthcare, finance, defense, legal) cannot send PHI, PII, or trade secrets through a third-party cloud they do not control. That rules Zapier and Make out of many serious enterprise procurement processes from the start. n8n can be self-hosted in your own VPC, in your own region, behind your own firewall, so it is often the only platform that survives a CISO review.

Compliance data underscores the stakes. IBM's Cost of a Data Breach Report 2024 puts the average US data breach at 9.36 million USD, with healthcare averaging 9.77 million USD per incident (Source: IBM Cost of a Data Breach Report, 2024). HIPAA enforcement actions have risen 40 percent year-over-year through 2025 (Source: HHS Office for Civil Rights Annual Enforcement Summary, 2025). And NIST's 2025 AI Risk Management Framework guidance now explicitly recommends self-hosted automation for any workflow handling regulated data classifications (Source: NIST AI RMF 1.0 Implementation Guide, 2025).

For US healthcare, fintech, and government contractor use cases, the calculus is simple. If your AI workflow touches regulated data, n8n self-hosted is usually the only one of the three platforms that clears the security review.

Total cost of ownership beyond the subscription

Subscription pricing is only one line in the TCO equation. Engineering time to build, maintain, and migrate workflows often dwarfs platform fees. Teams that pick a tool primarily on sticker price tend to regret it within 12 to 18 months. A useful rule of thumb: budget 60 percent of year-one spend for platform fees and 40 percent for build and maintenance time. By year three on a stable stack, that flips. Self-hosted n8n at scale frequently lands at 5 to 15 percent of the equivalent Zapier Enterprise quote once you cross the 250,000 monthly execution threshold, even after counting infrastructure and DevOps time.
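Multi-year TCO is simple to model once engineering time is priced in alongside platform fees. The sketch below is illustrative only: `YearCost` and `tco` are hypothetical helpers, and the sample inputs (12,000 USD in fees, 80 engineering hours at 100 USD per hour) are made-up figures chosen to reproduce the 60/40 year-one split from the rule of thumb above.

```python
from dataclasses import dataclass


@dataclass
class YearCost:
    platform_fees: float      # subscription or server spend for the year, USD
    engineering_hours: float  # build, maintenance, and migration time
    hourly_rate: float        # loaded cost of one engineering hour, USD

    @property
    def engineering_cost(self) -> float:
        return self.engineering_hours * self.hourly_rate

    @property
    def total(self) -> float:
        return self.platform_fees + self.engineering_cost

    @property
    def platform_share(self) -> float:
        return self.platform_fees / self.total


def tco(years: list[YearCost]) -> float:
    """Total cost of ownership across several years."""
    return sum(year.total for year in years)


# Hypothetical year-one inputs chosen to match a 60/40 fee/build split.
year_one = YearCost(platform_fees=12_000, engineering_hours=80, hourly_rate=100)
print(f"Year one: {year_one.total:,.0f} USD, platform share {year_one.platform_share:.0%}")
```

Adding one `YearCost` per year to the list passed to `tco` makes it easy to compare a self-hosted stack against a subscription quote over the same horizon.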

Decision Framework: Which Platform Fits Your 2026 Stack

Pick Zapier when

- Your team is non-technical and the workflows are simple (under 5 steps).

- Total monthly volume stays under 5,000 tasks.

- You need niche SaaS integrations (Zapier still leads at 7,000+ apps).

- Speed of building matters more than per-execution cost.

Pick Make when

- Workflows are visually complex but volume is moderate (5,000 to 100,000 operations per month).

- Your team prefers a visual canvas over code.

- Data residency is not a hard requirement.

- You want better pricing than Zapier without running infrastructure.

Pick n8n when

- You are building production AI automation with agents, retrieval, or multi-step LLM chains.

- Monthly execution volume exceeds 10,000 (and especially over 100,000).

- Data residency, HIPAA, SOC 2, or self-hosted control is required.

- You have engineering capacity to manage workflows as code.

- You want to avoid vendor lock-in (n8n workflows are JSON, fully exportable).
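The checklists above can be encoded as a first-pass screening function. This is one possible reading of the criteria: the rule order (regulated data first, then technical teams with AI agents or volume over 10,000) and the thresholds taken from the lists are assumptions, and `Requirements` and `pick_platform` are hypothetical names. Treat the output as a starting point for a proof of concept.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    monthly_volume: int    # tasks, operations, or executions per month
    max_steps: int         # steps in the longest workflow
    technical_team: bool   # capacity to manage workflows as code
    regulated_data: bool   # data residency, HIPAA, or self-hosting required
    ai_agents: bool        # agents, retrieval, or multi-step LLM chains


def pick_platform(r: Requirements) -> str:
    """First-pass screen based on the decision checklists (an interpretation)."""
    if r.regulated_data:
        return "n8n"  # self-hosting is the deciding factor
    if r.technical_team and (r.ai_agents or r.monthly_volume > 10_000):
        return "n8n"
    if r.monthly_volume < 5_000 and r.max_steps < 5:
        return "Zapier"
    if r.monthly_volume <= 100_000:
        return "Make"
    return "n8n"  # very high volume: plan for the engineering capacity it needs


print(pick_platform(Requirements(2_000, 3, False, False, False)))
print(pick_platform(Requirements(40_000, 12, False, False, False)))
```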

Where AI Automation Is Heading in 2026 and Beyond

Three trends shape platform selection over the next 18 months. First, agentic AI moves from prototype to production. McKinsey's 2025 State of AI report notes 78 percent of organizations now use AI in at least one business function, up from 55 percent the prior year, with agentic workflows the fastest-growing category (Source: McKinsey Global Survey on AI, 2025).

Second, regulatory pressure intensifies. The EU AI Act enforcement window opened in 2025, and state-level AI laws in the US are multiplying. Third, model costs keep falling, but orchestration cost (the workflow platform itself) is becoming the dominant line item in AI operating budgets.

For a deeper view on what enterprise leaders should prepare for, NeuraMonks published a strategic outlook that pairs well with this comparison:

AI Automation in 2026: What Enterprise Leaders Must Prepare For

Free POC Scoping Call With NeuraMonks

Not sure which platform fits your AI roadmap? Book a free 30-minute POC scoping call with the NeuraMonks team. We will map your top three workflows to n8n, Zapier, or Make based on volume, compliance, and AI complexity, then give you a build estimate before you commit a dollar.