Insights and Inspiration

Explore Our Blog

Dive into our blog for expert insights, tips, and industry trends to elevate your project management journey.

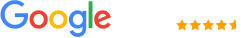

Live Safety Detection with AI: Turning CCTV Cameras into Real-Time OSHA Protection Systems

AI-powered safety detection is transforming construction sites by converting existing CCTV cameras into real-time hazard monitoring systems. Instead of reacting after incidents, contractors can now prevent violations before they happen. With computer vision, edge processing, and intelligent alerts, companies are reducing OSHA fines, improving worker safety, and gaining a competitive edge through data-driven compliance.

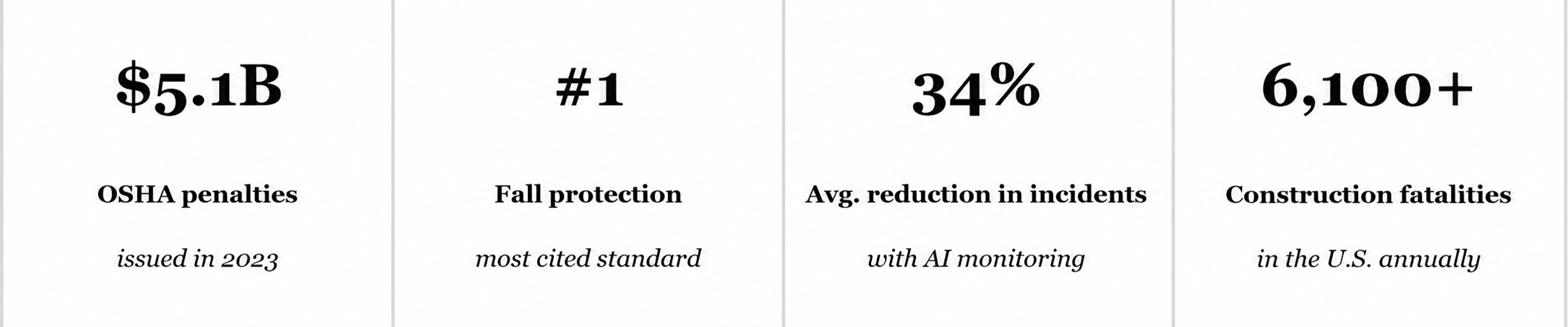

Every 99 minutes, a worker in the United States dies from a work-related injury, according to recent Bureau of Labor Statistics figures, and construction accounts for roughly one in five of those deaths. That is not a statistic buried in a government footnote; it is the daily reality for an industry generating over $2 trillion in annual output while consistently ranking among the nation's most dangerous sectors. The question construction safety officers, general contractors, and operations VPs are now asking is not whether technology can change this. They are asking how fast they can deploy it.

The answer lies in the cameras already mounted across your job site. Paired with AI in Construction, those passive surveillance feeds become active, intelligent safety systems that detect hazards in real time, alert supervisors before injuries occur, and generate audit-ready compliance records automatically.

The Gap Between CCTV Footage and Actual Safety

Traditional CCTV infrastructure was built for one purpose: recording. Footage sits on local servers, reviewed only after an incident has already occurred. A worker entering a restricted zone, a forklift operating without clear sightlines, a team member skipping PPE before a welding task — none of these trigger any alert in a conventional system. A human operator watching 12 simultaneous feeds will miss most violations simply due to attention limits.

This is the blind spot that Artificial Intelligence in Construction eliminates.

AI-powered safety detection layers a real-time analytical brain on top of your existing camera network. No new hardware required on most deployments. No ripping out infrastructure. The system watches every frame, on every feed, simultaneously — and it never blinks.

How AI in Construction Actually Works on a Job Site

The architecture behind a live safety detection system is more accessible than most construction technology leaders expect. It combines three core technologies into one integrated layer:

1. Computer Vision — The Eyes of the System

Computer vision is the foundational layer. Deep learning models trained on millions of construction-specific images learn to identify and classify objects, people, postures, and behaviors with high precision. The models can distinguish between a hard hat and an uncovered head, a forklift in motion versus stationary, a worker inside a geo-fenced exclusion zone versus standing at its edge.

What makes this different from generic object detection is domain specificity. Models trained on hospital interiors or retail environments perform poorly on construction sites with variable lighting, dust, partial occlusion, and fast-moving machinery. Construction-purpose-built computer vision models are trained and validated specifically for these conditions.
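To make the exclusion-zone check concrete, here is a minimal sketch of the geometry that could sit downstream of the detector. It assumes the vision model already returns a bounding box per worker in pixel coordinates and that the zone is drawn as a polygon on the camera image; the names `worker_in_zone` and `EXCLUSION_ZONE` and all coordinates are hypothetical, not part of any specific product.

```python
from typing import List, Tuple

Point = Tuple[float, float]
BBox = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def point_in_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: True if the point lies inside the polygon."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point count.
        if (y1 > y) != (y2 > y):
            # x coordinate where this edge crosses that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def worker_in_zone(bbox: BBox, zone: List[Point]) -> bool:
    """Use the bottom-center of the box as the worker's foot position."""
    x_min, _y_min, x_max, y_max = bbox
    foot = ((x_min + x_max) / 2, y_max)
    return point_in_polygon(foot, zone)


# Hypothetical zone drawn on one camera's image, in pixels.
EXCLUSION_ZONE = [(100, 100), (400, 100), (400, 300), (100, 300)]

print(worker_in_zone((200, 120, 260, 250), EXCLUSION_ZONE))  # foot at (230, 250)
print(worker_in_zone((420, 120, 480, 250), EXCLUSION_ZONE))  # foot at (450, 250)
```

Testing the foot point rather than the whole box is one simple way to separate a worker standing inside the zone from one standing at its edge; a real deployment would likely also account for camera perspective.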

2. vLLM Model Integration — Context and Communication

A raw alert — "PPE violation detected, Camera 7" — has limited operational value unless it reaches the right person with enough context to act. This is where a vLLM-powered model layer adds significant intelligence. vLLM enables efficient, high-performance serving of large language models, allowing structured safety event data to be processed and transformed into contextual, human-readable alerts: which worker zone, what violation type, recommended immediate action, and escalation priority.

It can also synthesize shift-end safety summaries, flag repeated violation patterns, and surface proactive risk advisories for site supervisors—while ensuring faster response times and scalable deployment across multiple camera feeds.
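As an illustration of the step from raw detection to contextual alert, the sketch below packages a structured event into a prompt that a language model could turn into a supervisor-facing message. The event fields, the priority table, and the prompt wording are all assumptions made for this example, and the call to the model server is deliberately left out.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class SafetyEvent:
    camera_id: str
    zone: str
    violation_type: str
    confidence: float
    timestamp: str


# Hypothetical mapping from violation type to escalation priority.
PRIORITY_BY_VIOLATION = {
    "fall_protection": "critical",
    "restricted_zone": "high",
    "ppe_missing": "medium",
}


def build_alert_prompt(event: SafetyEvent) -> str:
    """Serialize the event and wrap it in instructions for the language model."""
    priority = PRIORITY_BY_VIOLATION.get(event.violation_type, "low")
    payload = {**asdict(event), "priority": priority}
    return (
        "You are a construction site safety assistant. "
        "Write a two-sentence alert for the site supervisor that states the zone, "
        "the violation, the recommended immediate action, and the escalation priority.\n"
        f"Event: {json.dumps(payload, sort_keys=True)}"
    )


event = SafetyEvent(
    camera_id="Camera 7",
    zone="Welding bay",
    violation_type="ppe_missing",
    confidence=0.93,
    timestamp="2026-01-15T09:42:10",
)
print(build_alert_prompt(event))
```

Keeping the priority lookup outside the model means the escalation level stays deterministic even if the generated wording varies.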

3. Real-Time Edge Processing — Speed Without Latency

Safety alerts have zero value if they arrive 45 seconds after the hazard event. Modern AI safety systems process video at the edge — meaning on-site hardware or low-latency cloud nodes analyze frames in real time, triggering alerts within 2 to 8 seconds of a violation being detected. This is fast enough for a supervisor to intervene before an injury occurs.

Key Insight for Safety Officers

Most job sites already have 60–80% of the CCTV infrastructure required for AI safety deployment. The investment is in software, integration, and model fine-tuning — not wholesale hardware replacement.

See What Your Current Cameras Are Missing

Request a complimentary site safety gap analysis — we map your existing CCTV infrastructure against AI detection coverage potential with zero obligation.

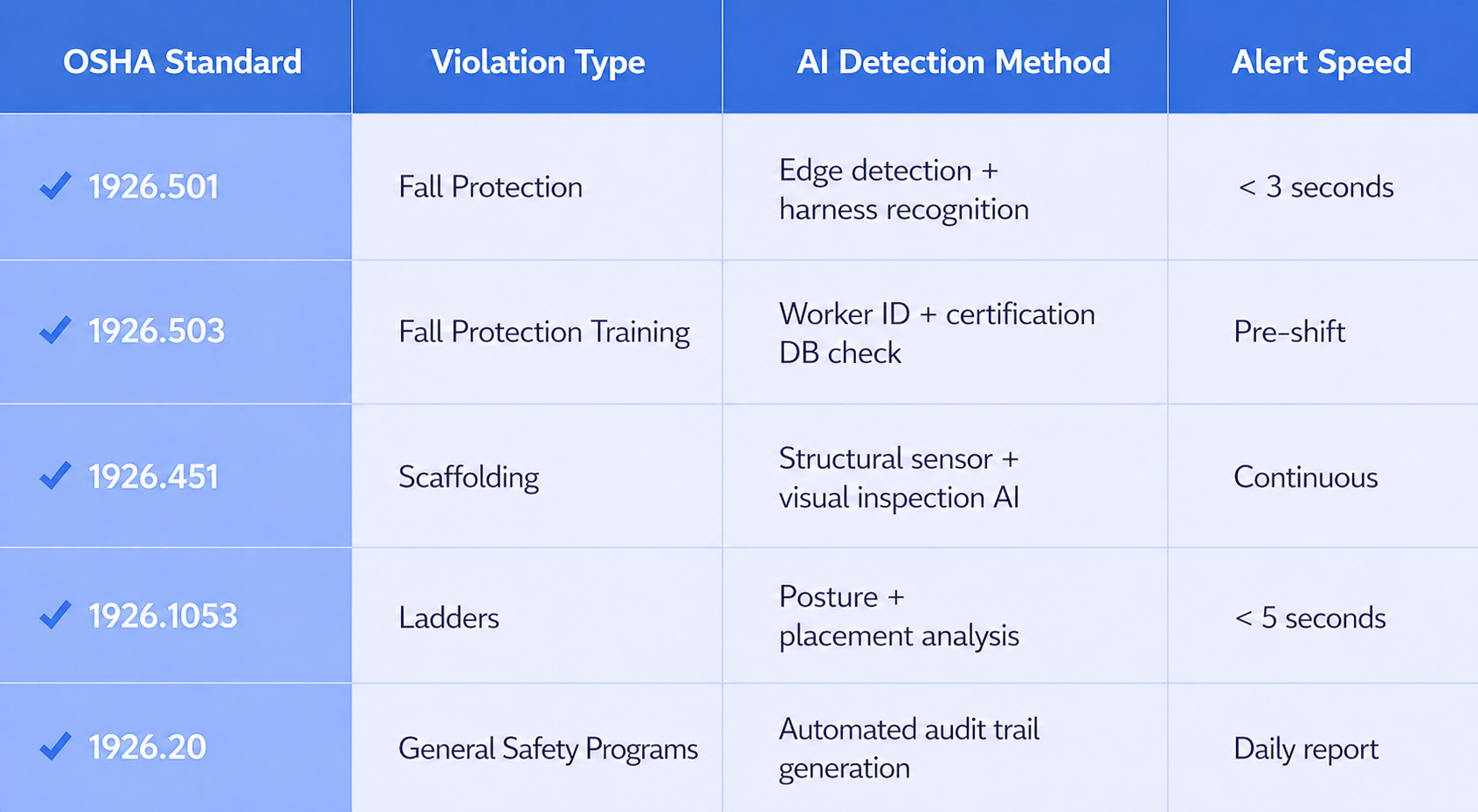

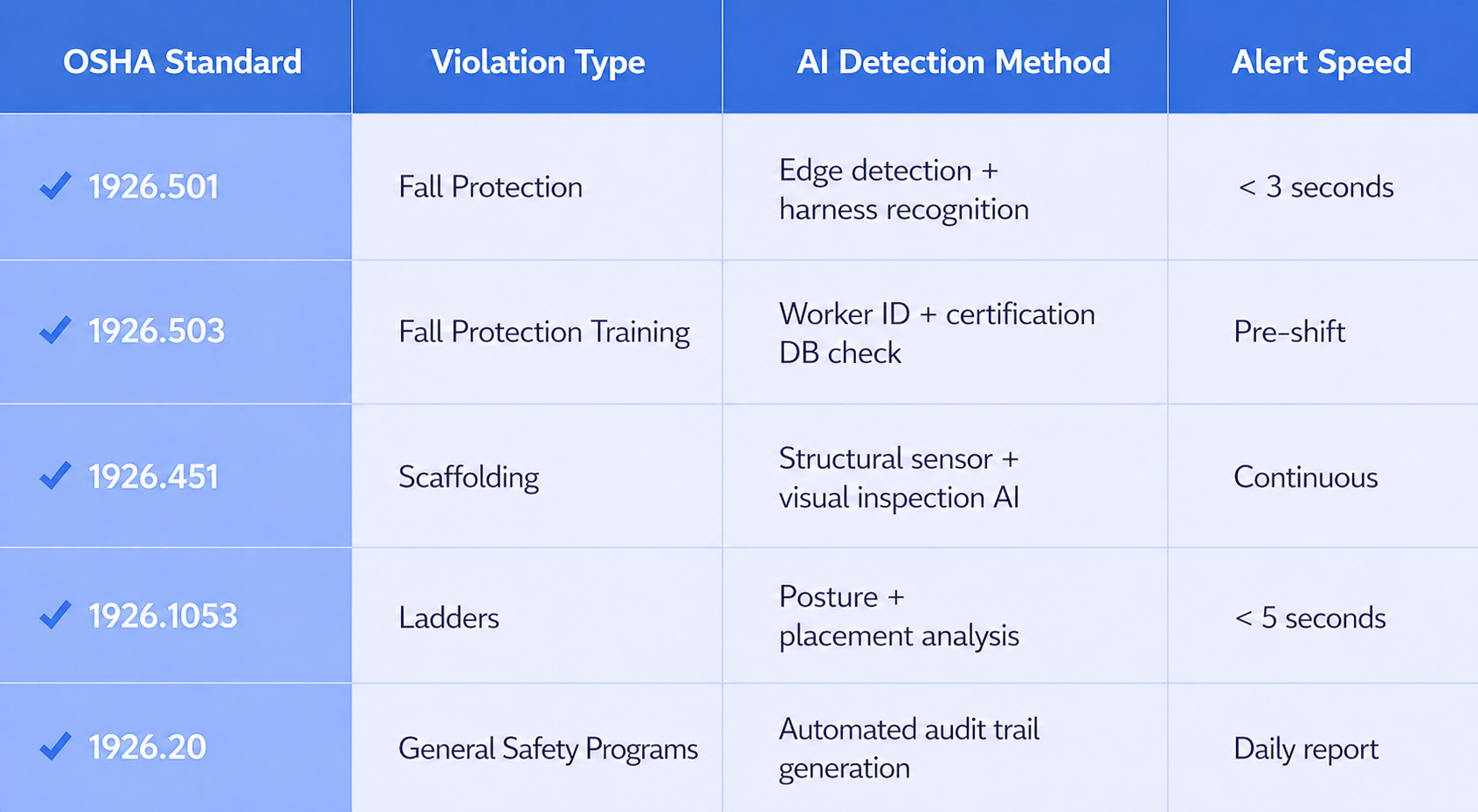

OSHA Violations AI Can Detect in Real Time

The following hazard categories are among the most consistently detectable by trained computer vision models deployed on construction sites across the United States:

- Hard hat, safety vest, and glove non-compliance across all personnel

- Unauthorized access to restricted or high-voltage zones

- Workers operating near unguarded edges, floor openings, or scaffolding without fall protection

- Forklift and heavy equipment proximity violations with pedestrians

- Lockout/tagout area breaches during maintenance activities

- Ladder safety violations, including improper angle, unsecured base, or overreaching

- Crowd density monitoring in confined spaces

- Smoke, fire, and unusual thermal signature detection

- Vehicle speed limit violations within site boundaries

- After-hours unauthorized personnel access

Several of these categories map directly to OSHA's Top 10 Most Cited Standards, including fall protection, ladders, scaffolding, lockout/tagout, powered industrial trucks, and eye and face protection. These standards account for a large share of the citations and fines issued each year. Addressing them proactively, rather than reactively, is where AI in Construction delivers the highest measurable ROI.

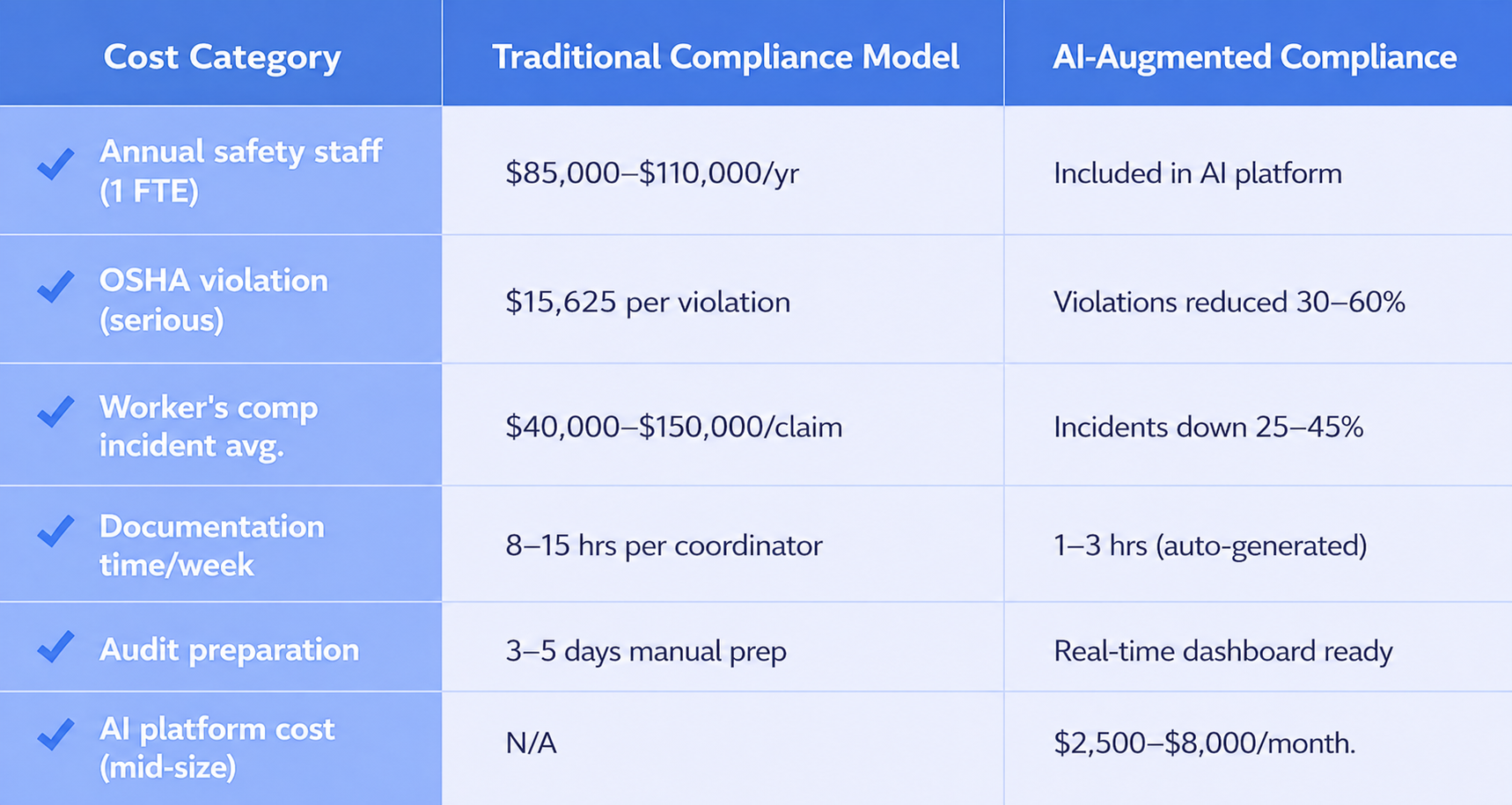

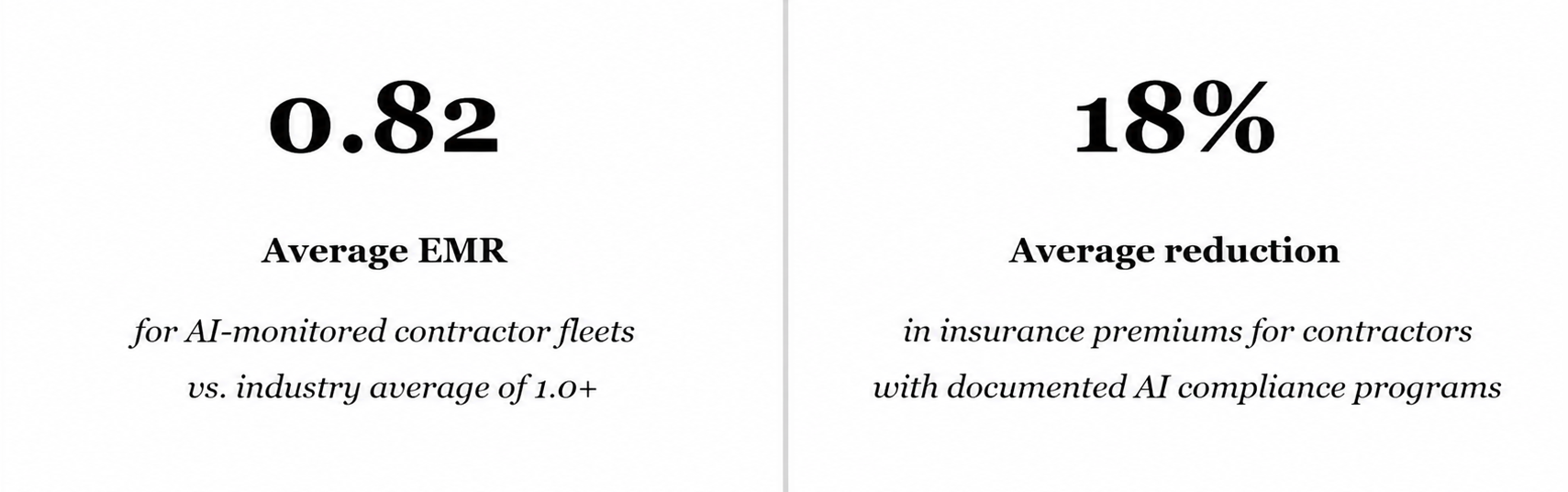

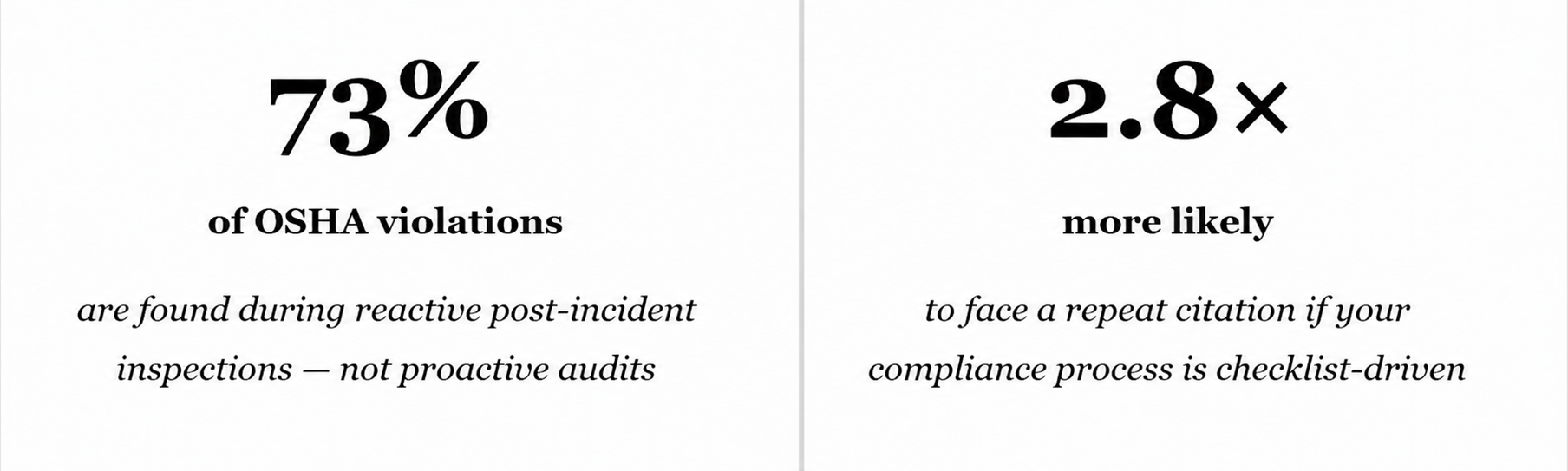

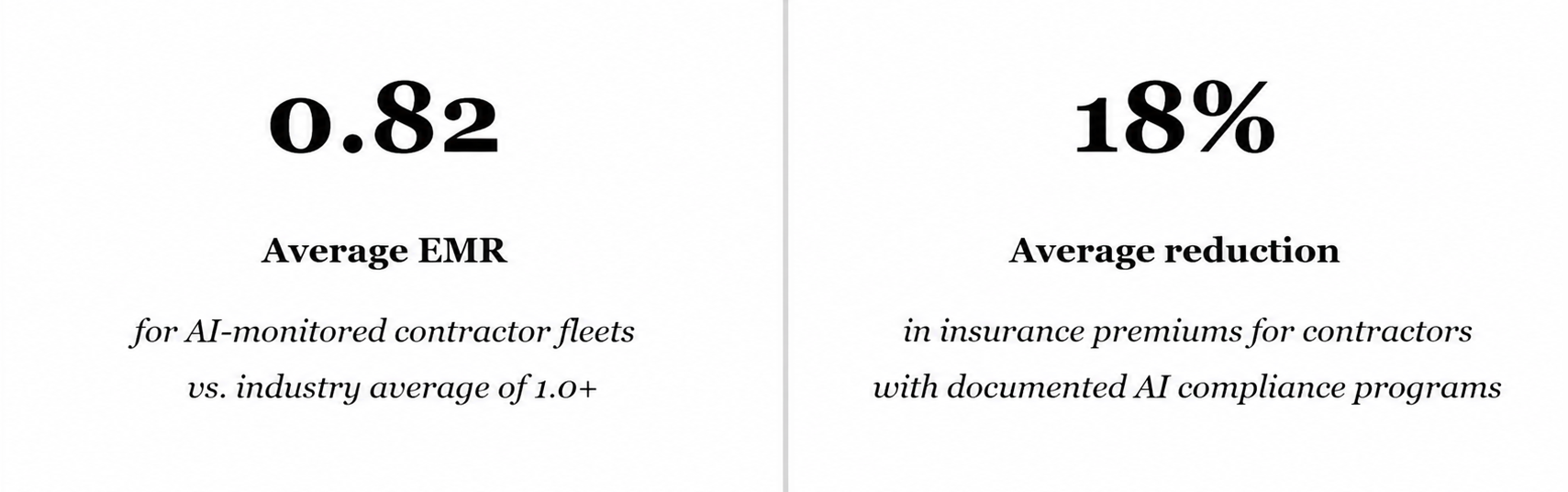

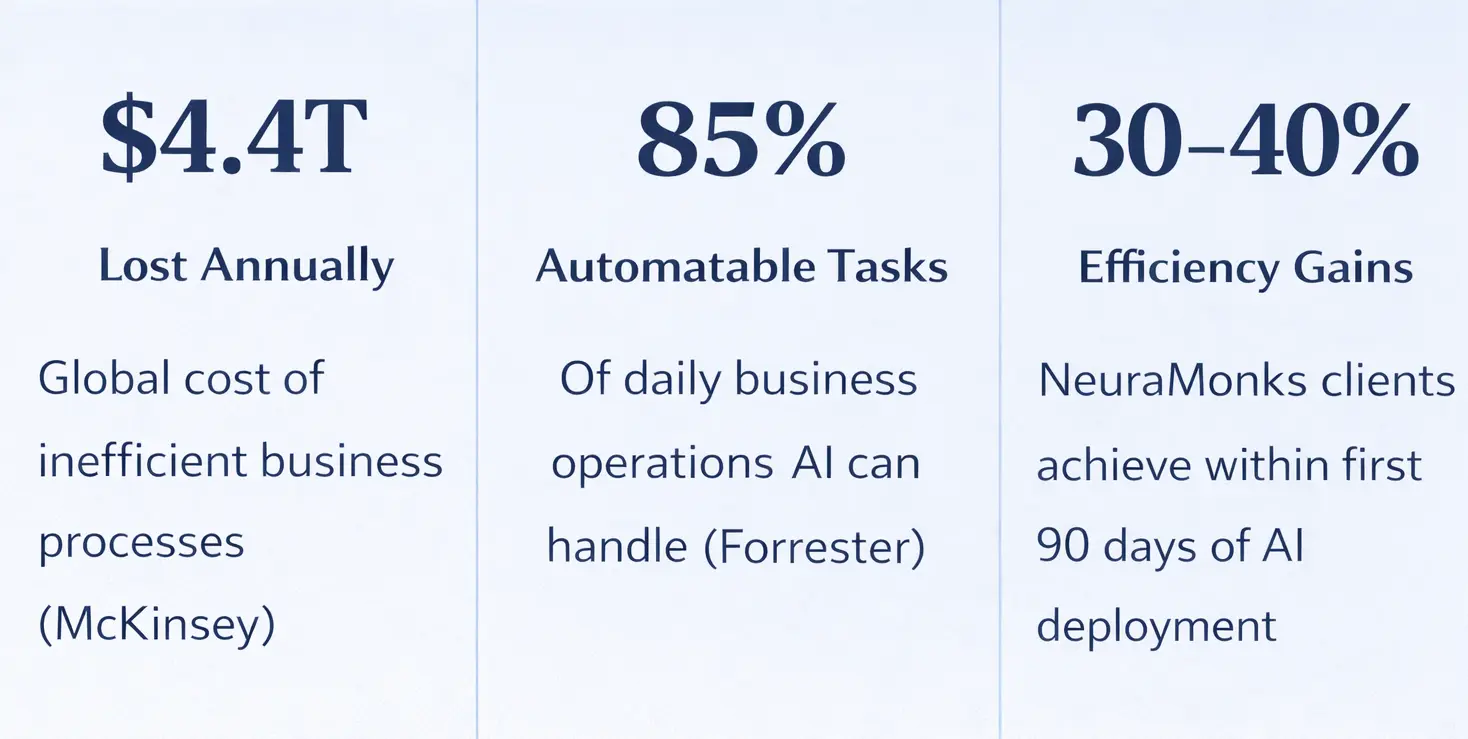

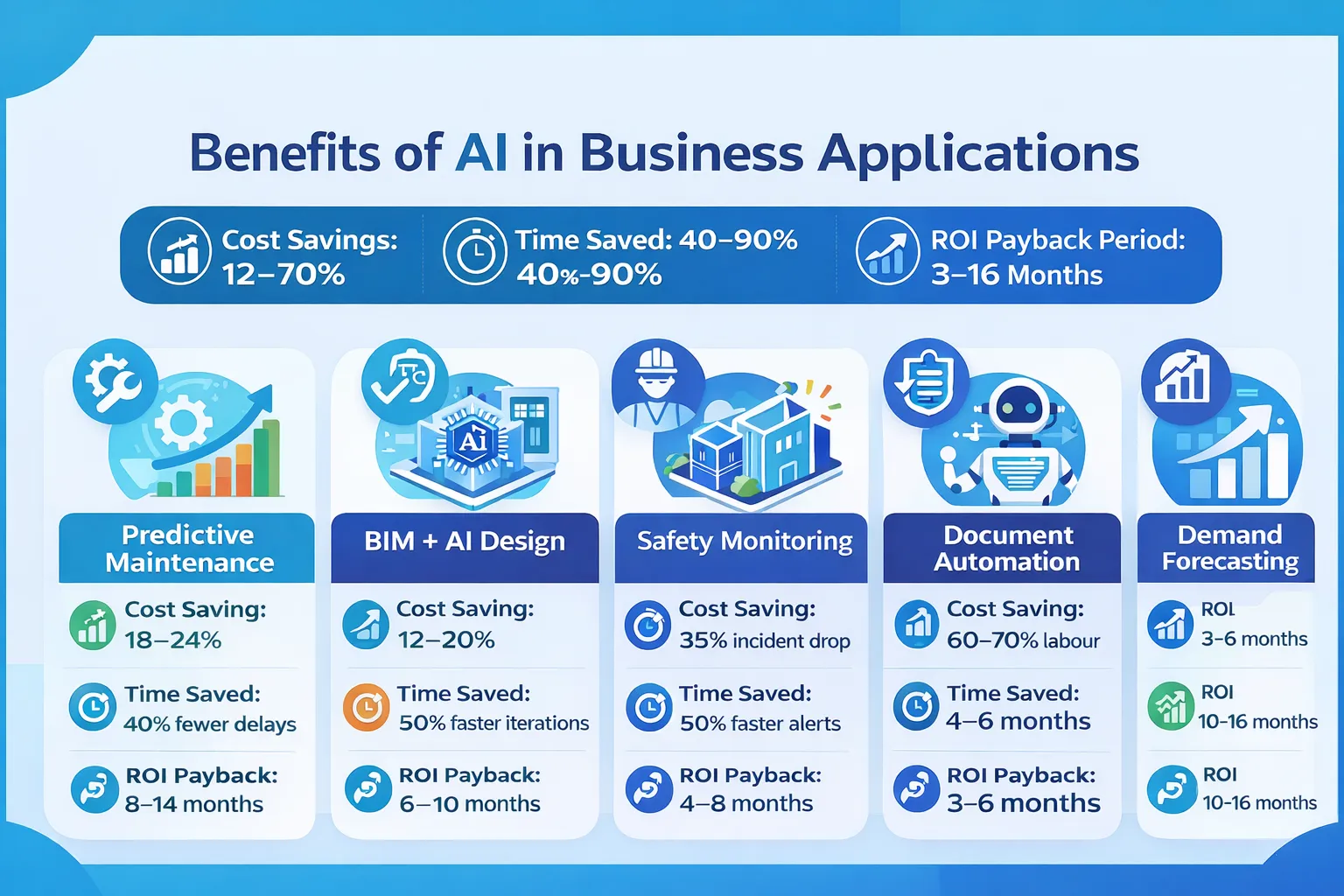

The Commercial Case: What ROI Looks Like for General Contractors

Safety technology decisions in construction are ultimately financial decisions. Here is how the numbers typically pencil out for mid-to-large general contractors operating in the US market:

One documented case from a 400-worker commercial construction project in Texas showed a 71% reduction in reportable incidents in the 18 months following AI safety system deployment. OSHA fines dropped from an annual exposure of approximately $280,000 to under $40,000. The system paid for itself in under seven months.
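The payback claim can be sanity-checked with simple arithmetic. The sketch below uses only the fine figures quoted above; the system cost is a hypothetical placeholder, since the case does not state it, and savings from fewer incidents (claims, downtime, insurance) are ignored.

```python
# Figures quoted in the Texas case above.
fines_before = 280_000  # approximate annual OSHA fine exposure before deployment
fines_after = 40_000    # upper bound on annual exposure after deployment

annual_savings = fines_before - fines_after
monthly_savings = annual_savings / 12


def payback_months(system_cost: float, savings_per_month: float) -> float:
    """Months of savings needed to cover the up-front system cost."""
    return system_cost / savings_per_month


# Hypothetical system cost, chosen only to illustrate the calculation.
ASSUMED_SYSTEM_COST = 130_000

print(f"Annual fine savings: ${annual_savings:,}")
print(f"Monthly fine savings: ${monthly_savings:,.0f}")
print(f"Payback: {payback_months(ASSUMED_SYSTEM_COST, monthly_savings):.1f} months")
```

On fines alone, a payback of under seven months implies a system cost below roughly $140,000; counting avoided incident costs would shorten the payback further.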

For Risk Managers and CFOs

Insurers are beginning to recognize AI-verified safety programs as quantifiable risk reduction. Several carriers now offer documented premium discounts of 5–15% for contractors who can demonstrate real-time safety monitoring with AI solutions. This changes the ROI math significantly.

What a Deployment Actually Looks Like: A 6-Phase Implementation

Construction technology leaders frequently overestimate the complexity of AI safety deployment. For a site with existing CCTV infrastructure, a full deployment follows a structured six-phase process:

- Phase 1 — Site Survey & Camera Audit: Map existing camera coverage, identify blind spots, assess feed quality and resolution for model performance.

- Phase 2 — Model Selection & Fine-Tuning: Select pre-trained construction safety models; fine-tune on site-specific conditions (lighting, machinery types, worker density patterns).

- Phase 3 — Integration & Edge Deployment: Connect AI processing nodes to existing CCTV streams via RTSP or API bridge. No camera replacement required in most cases.

- Phase 4 — Alert Workflow Configuration: Define escalation rules, notification channels (SMS, Slack, dashboard), and violation severity thresholds by camera zone.

- Phase 5 — Safety Team Training & Calibration: Onboard site supervisors to the dashboard; refine model confidence thresholds based on first 2–4 weeks of live data.

- Phase 6 — Compliance Reporting Automation: Configure OSHA-formatted daily and monthly safety reports with incident logs, violation trends, and corrective action tracking.

Total deployment time for a single construction site ranges from 3 to 8 weeks depending on site scale, integration complexity, and the number of camera feeds being processed. Multi-site enterprise rollouts typically run on a phased schedule of 90 to 180 days.
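The alert workflow in Phase 4 is, at its core, a small rules table. Here is a minimal sketch of how escalation rules by camera zone and severity might be expressed; the zone names, severity levels, and channel names are hypothetical examples rather than a fixed schema.

```python
from dataclasses import dataclass
from typing import List

SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass
class EscalationRule:
    zone: str            # camera zone name, or "*" to match any zone
    min_severity: str    # lowest severity at which this rule applies
    channels: List[str]  # where the alert is sent


# Rules are checked top to bottom; the first match wins.
RULES = [
    EscalationRule("crane_pad", "medium", ["sms", "dashboard"]),
    EscalationRule("*", "high", ["sms", "slack", "dashboard"]),
    EscalationRule("*", "low", ["dashboard"]),
]


def channels_for(zone: str, severity: str, rules: List[EscalationRule] = RULES) -> List[str]:
    """Return the notification channels for a violation in a given zone."""
    level = SEVERITY_ORDER.index(severity)
    for rule in rules:
        zone_matches = rule.zone in ("*", zone)
        if zone_matches and level >= SEVERITY_ORDER.index(rule.min_severity):
            return rule.channels
    return []


print(channels_for("crane_pad", "medium"))
print(channels_for("gate", "critical"))
print(channels_for("gate", "low"))
```

Putting zone-specific rules ahead of the wildcard rules lets a high-risk area escalate at a lower severity than the rest of the site.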

The Hidden Value: Safety Data as a Competitive Differentiator

The most forward-thinking contractors in the US market are not deploying AI safety detection purely to avoid fines. They are building a data asset.

Every violation logged, every pattern identified, every near-miss captured creates a structured safety intelligence database. Over 12 to 24 months, this data tells a story that manual incident logs simply cannot: which subcontractors consistently underperform on PPE compliance, which site zones carry disproportionate risk, which shift windows show elevated violation rates, and which supervision staffing models correlate with the safest outcomes.
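A short sketch shows how a violation log becomes this kind of intelligence. The records and field names below are invented for illustration; a real log would come from the detection system's event store.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical violation log entries.
violations: List[Dict[str, str]] = [
    {"subcontractor": "Sub A", "zone": "Level 3 edge", "shift": "night", "type": "ppe_missing"},
    {"subcontractor": "Sub A", "zone": "Loading dock", "shift": "day", "type": "ppe_missing"},
    {"subcontractor": "Sub B", "zone": "Level 3 edge", "shift": "night", "type": "fall_protection"},
    {"subcontractor": "Sub A", "zone": "Level 3 edge", "shift": "night", "type": "restricted_zone"},
]


def count_by(records: List[Dict[str, str]], key: str) -> Counter:
    """Count violations grouped by one field, such as zone or shift."""
    return Counter(record[key] for record in records)


for field in ("subcontractor", "zone", "shift"):
    print(field, count_by(violations, field).most_common())
```

The same grouping, run over 12 to 24 months of events, is what surfaces the subcontractor, zone, and shift patterns described above.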

This intelligence feeds directly into project bidding, subcontractor selection, insurance negotiations, and bonding conversations. Owners and developers increasingly require documented safety performance as part of the prequalification process for large projects. Contractors with AI-verified safety records arrive at those conversations with a quantified, defensible advantage.

What Leading Construction Firms Are Discovering

Safety score documentation from AI systems is becoming a procurement criterion on federal, commercial, and healthcare construction projects. Contractors who have this data are being shortlisted more frequently. Those without it are being asked to explain why.

Map Your Safety Intelligence Potential

Our construction AI team analyzes your current CCTV coverage and produces a personalized Safety Detection Readiness Score — delivered within 5 business days at no cost.

When evaluating partners, construction safety leaders should assess the following:

- Model training data: Was the computer vision model trained specifically on construction environments, or on generic workplace footage?

- OSHA alignment: Does the system's violation taxonomy map directly to OSHA 29 CFR 1926 construction standards?

- Deployment track record: How many construction sites has the partner deployed on, and what documented safety improvement metrics are available?

- Integration depth: Can the platform connect to your existing safety management software, ERP, and reporting systems?

- Ongoing model improvement: Does the partner provide model updates as regulations change and new hazard patterns are identified?

The right partner delivers more than software — they deliver a working AI solutions ecosystem that improves demonstrably over time, producing sharper detection, fewer false positives, and richer safety intelligence with every month of operation.

Your Job Site Is Already Equipped. It Just Needs Intelligence.

The cameras are there. The hazards are there. The regulatory exposure is there.

What is missing is the layer that connects them — and transforms surveillance footage into a living, breathing OSHA protection system.

Bring Your Construction Safety into 2026

NeuraMonks engineers construction-grade AI safety systems built for OSHA compliance, US job sites, and zero-tolerance incident environments.

Claim Your Free Safety Detection Scoping Session

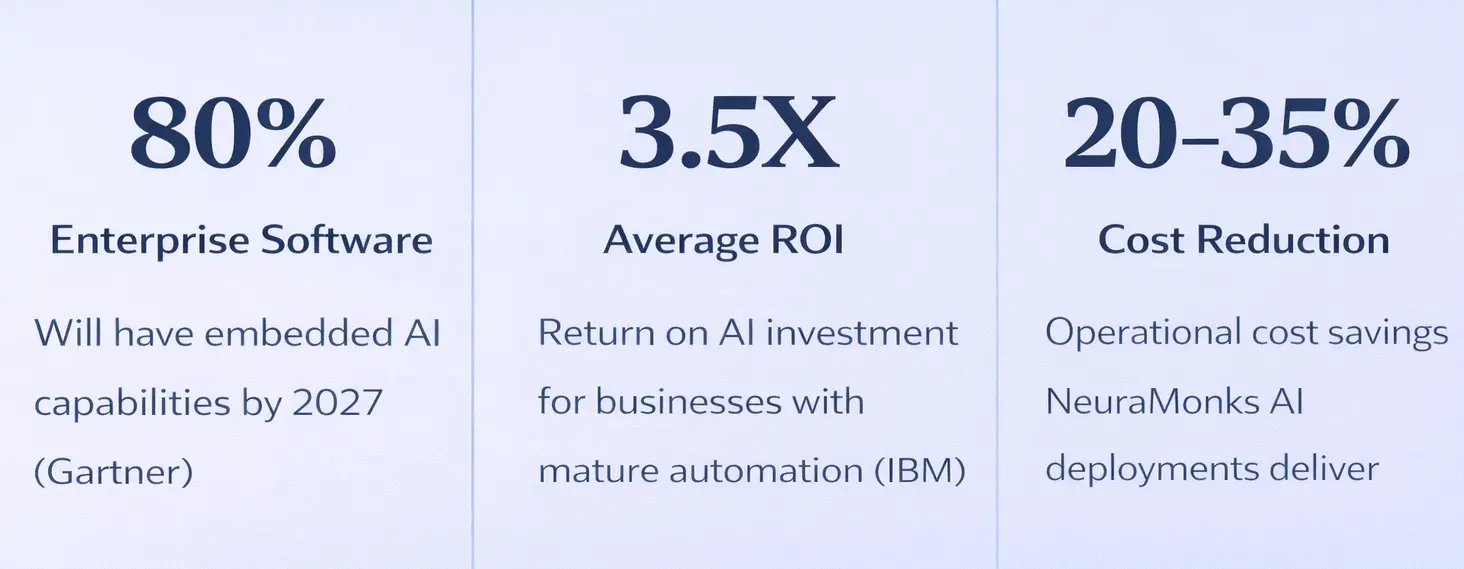

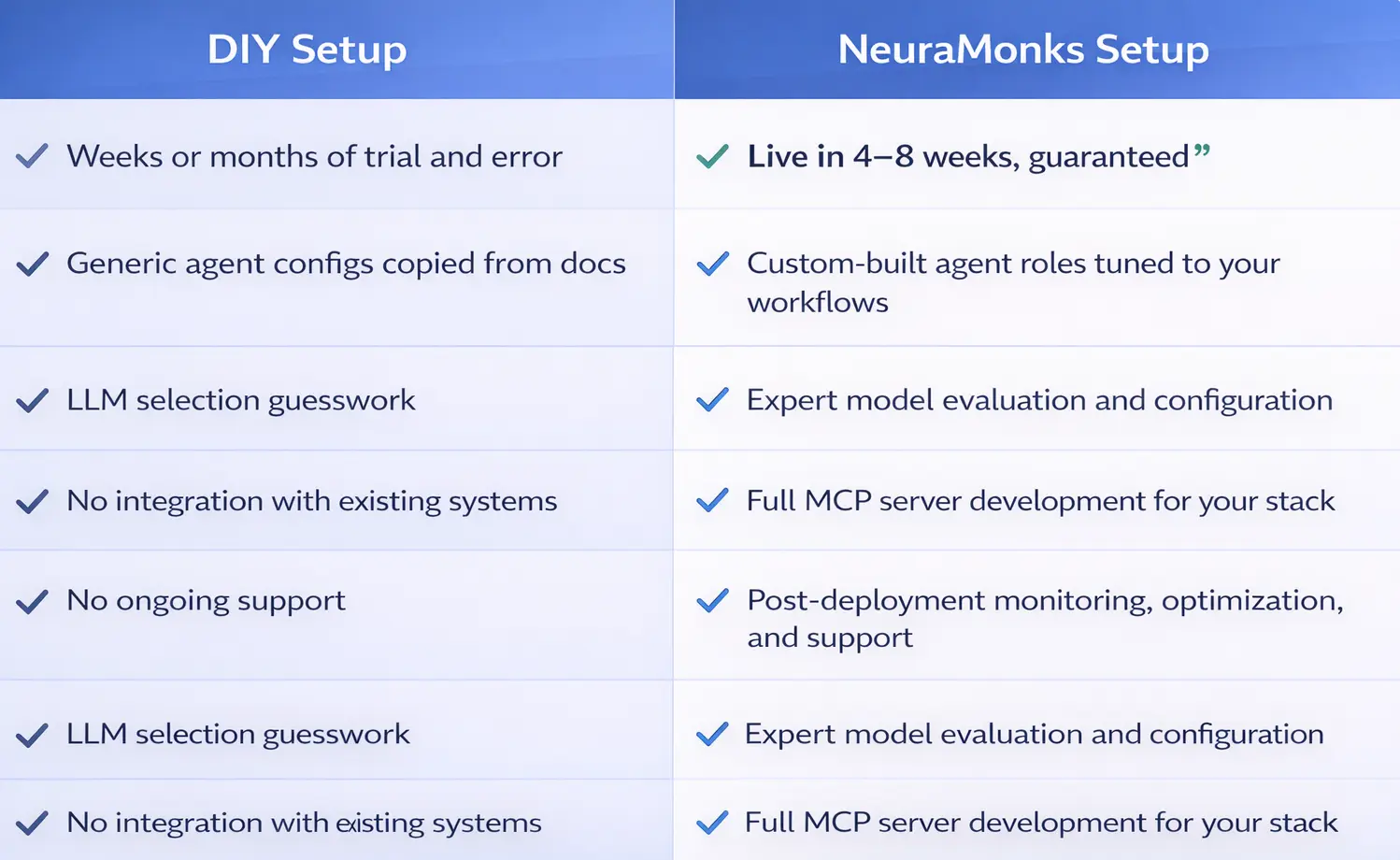

Build vs Partner: The Real Cost of Adding an AI Team in 2026

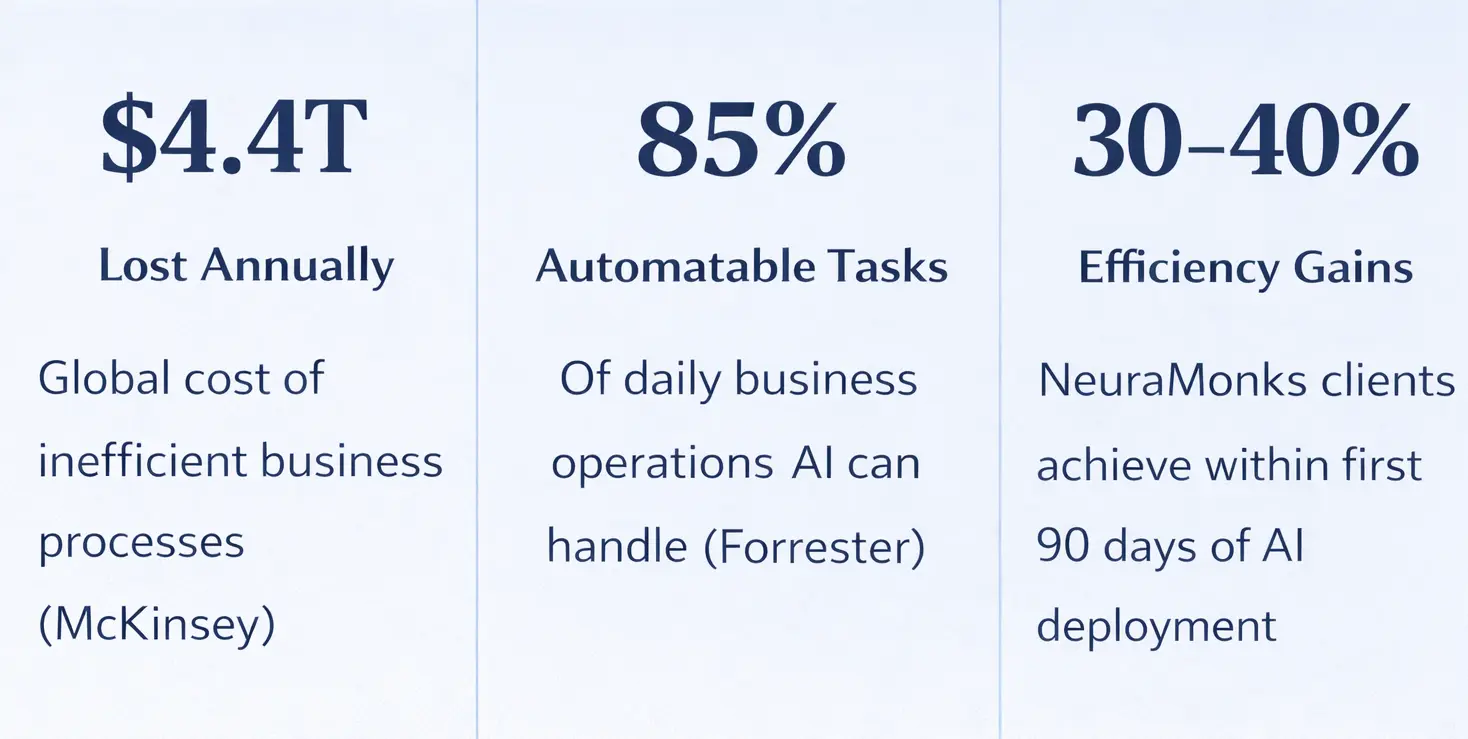

Building an in-house AI team in 2026 costs far more than most leaders budget for: salaries, recruiting, compute, and attrition push year-one totals as high as $700K. This post breaks down the full cost comparison between building internally vs. partnering with an AI firm like NeuraMonks, with numbers by vertical.

Let's skip the fluff.

If your company is seriously considering building out an AI capability right now, you've probably already done some back-of-napkin math. You've looked at a few LinkedIn profiles. Maybe you've talked to a recruiter. And somewhere in that process, the number got scary fast.

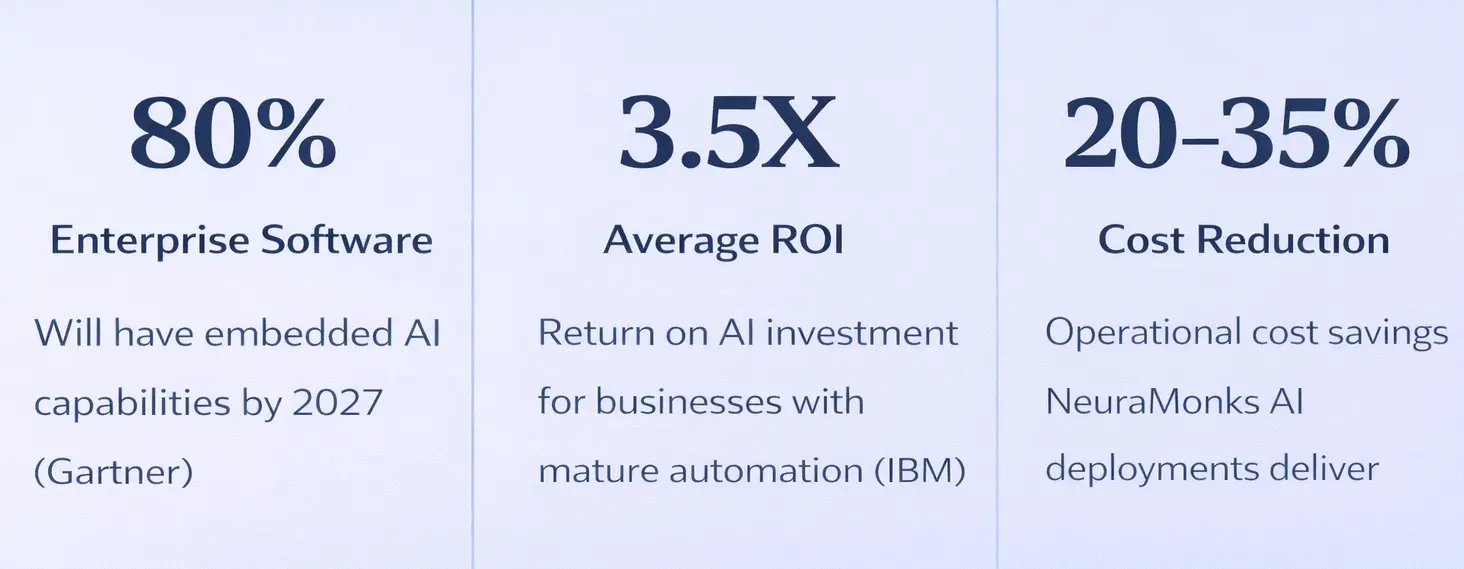

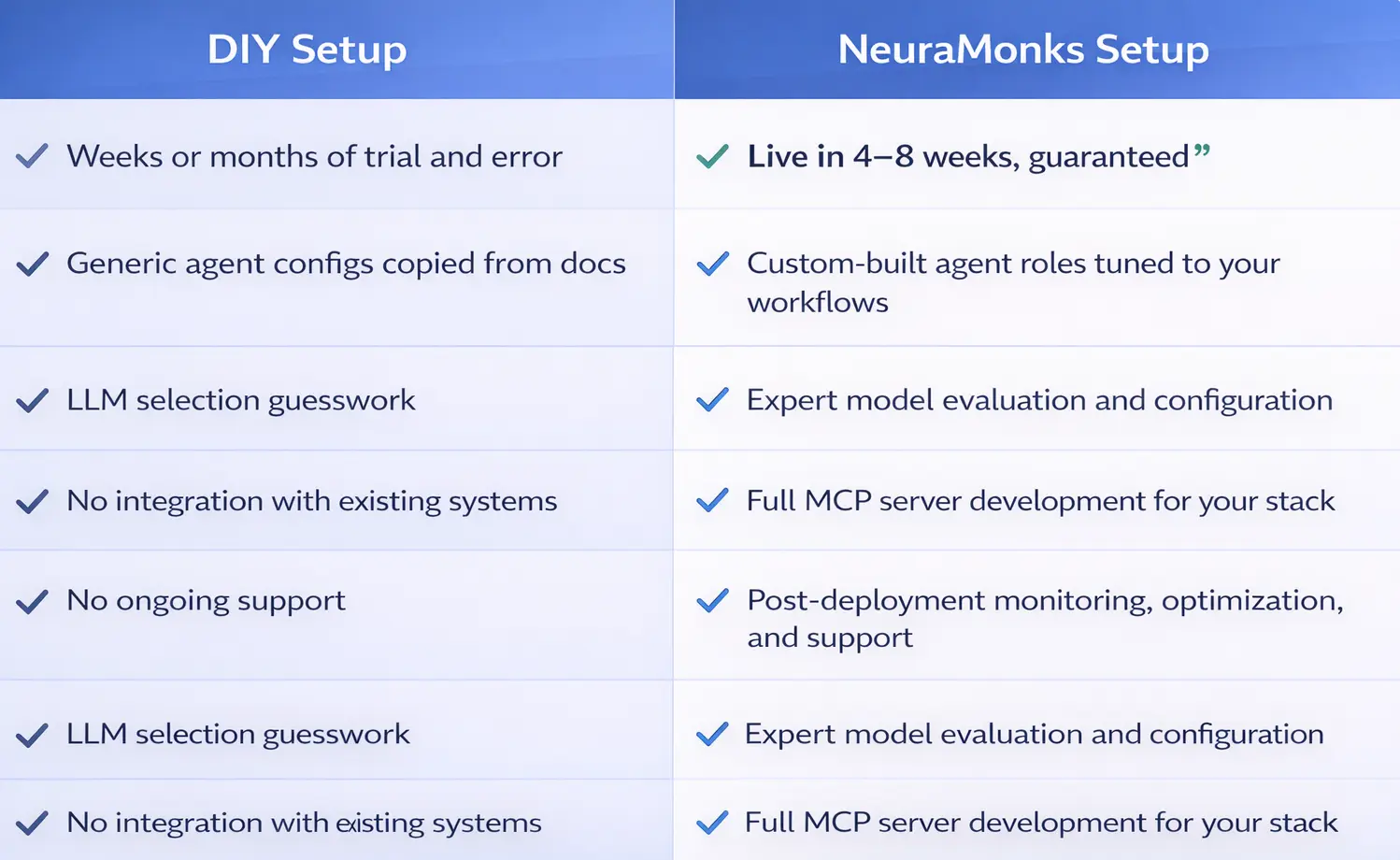

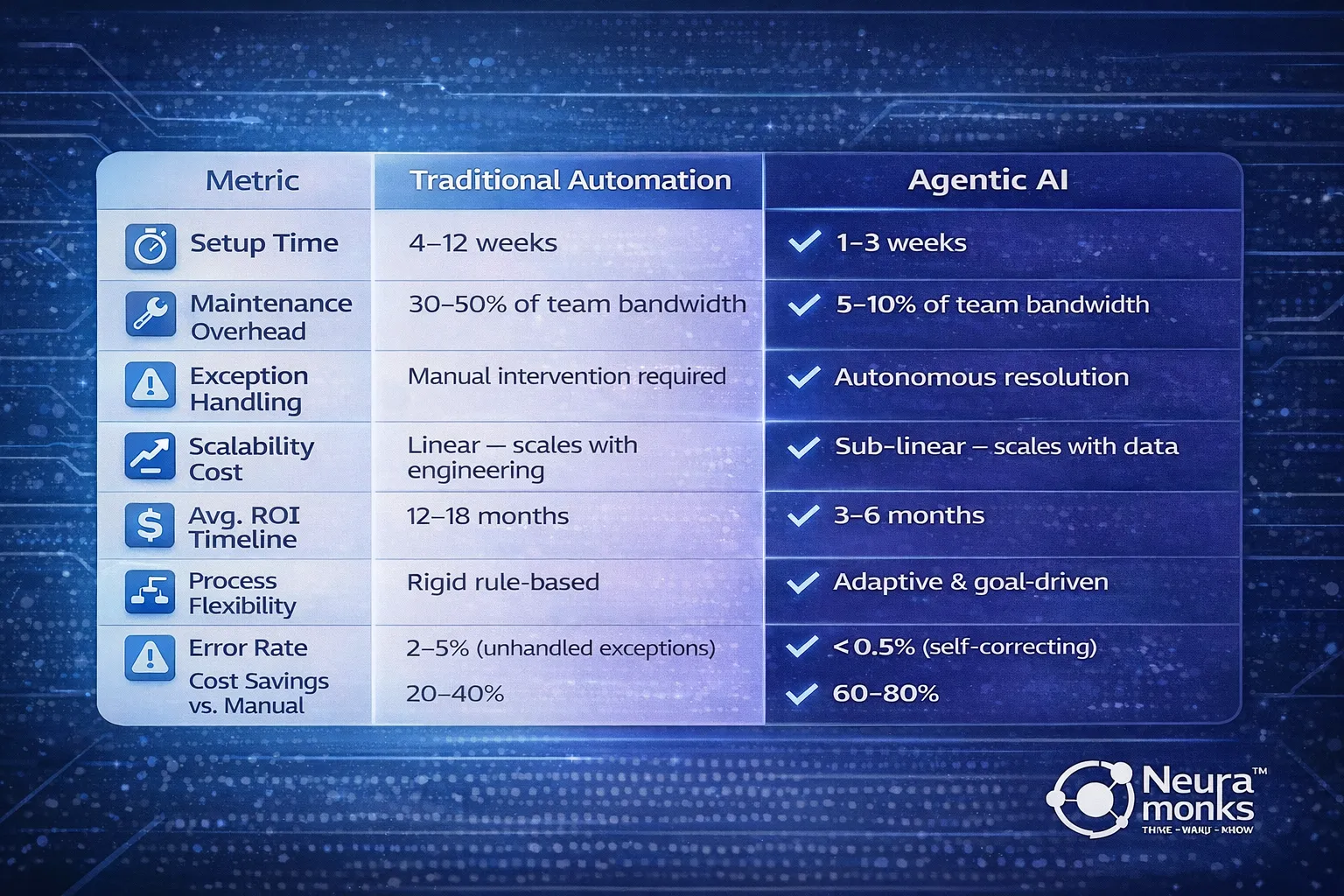

This post breaks down exactly why the "build it in-house" path costs roughly $520,000 to $700,000 in year one alone, and what the alternative actually looks like when you run the same numbers.

Why companies are getting this decision wrong right now

The AI hiring market in 2026 isn't the same as it was two years ago. Demand for machine learning engineers, AI architects, and LLM specialists has outpaced supply significantly. Companies that started hiring in 2023 are still struggling to retain the people they brought on.

There's also a less-discussed problem: the skills you hire for today may not be the skills your product needs in 18 months. The field moves fast. An in-house team built around one architecture or framework can become a liability the moment the tooling shifts.

None of this means building in-house is wrong. It means you need to run the numbers before you commit.

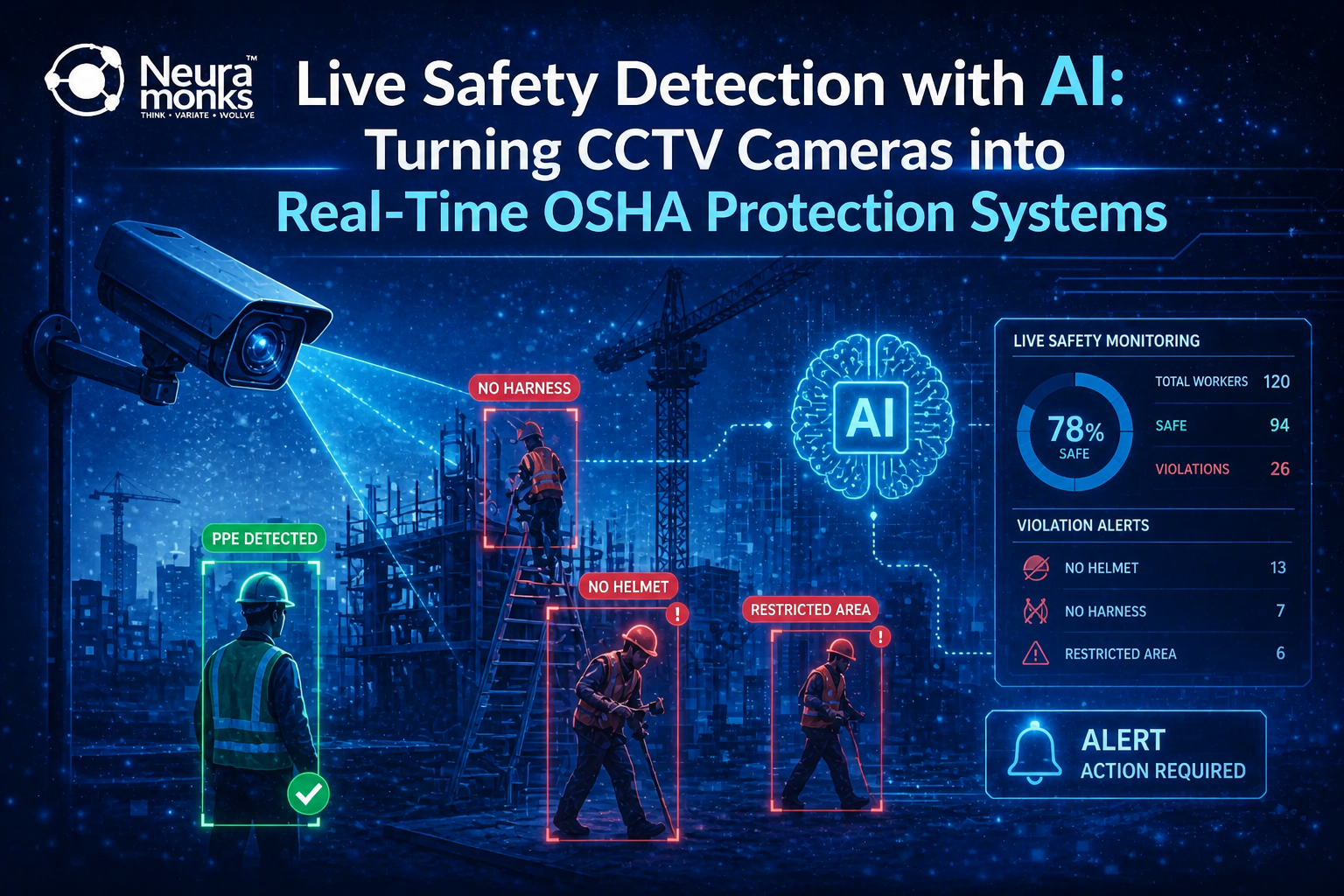

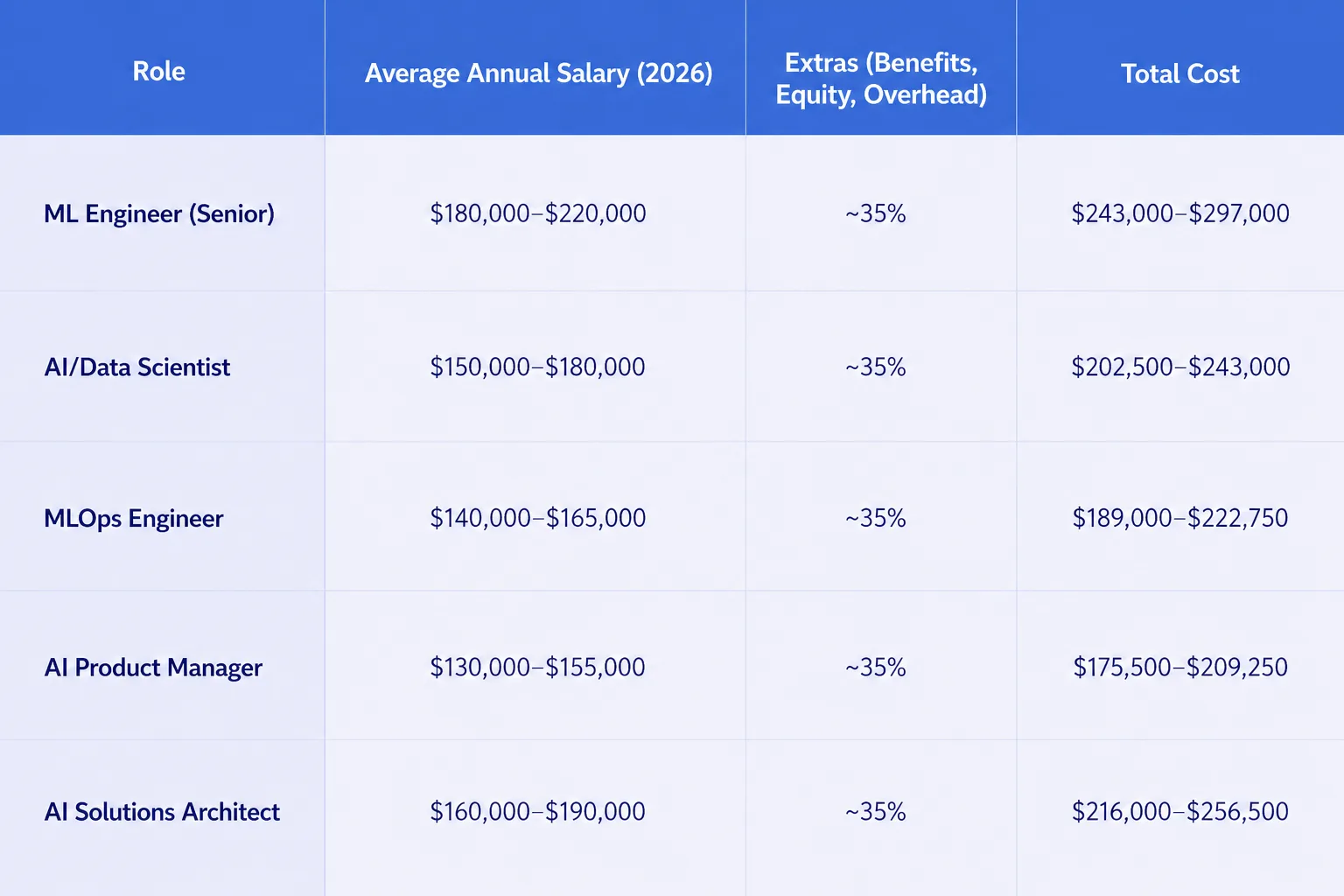

What it actually costs to build an in-house AI team

Here's where most budget conversations go sideways — leaders compare a partner's annual retainer to a single engineer's salary, not to the full cost of the team you'd need to get comparable output.

A functional AI development team that can take a product from prototype to production typically requires at least 4–5 people.

Add recruiting costs ($25,000–$40,000 per senior hire), onboarding time (typically 3–4 months before meaningful output), tooling licenses, compute infrastructure, and management overhead — and a conservative estimate for year one lands between $520,000 and $700,000.

That's before you ship a single model to production.

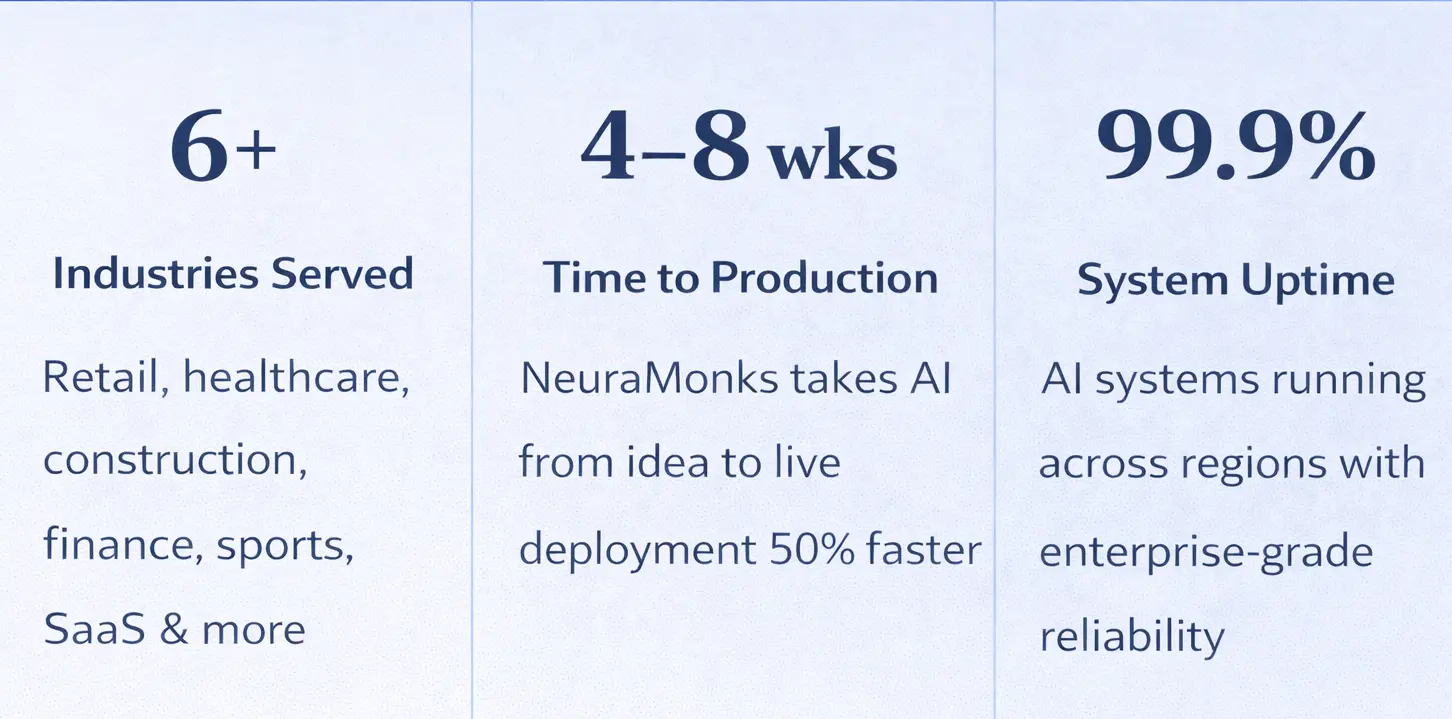

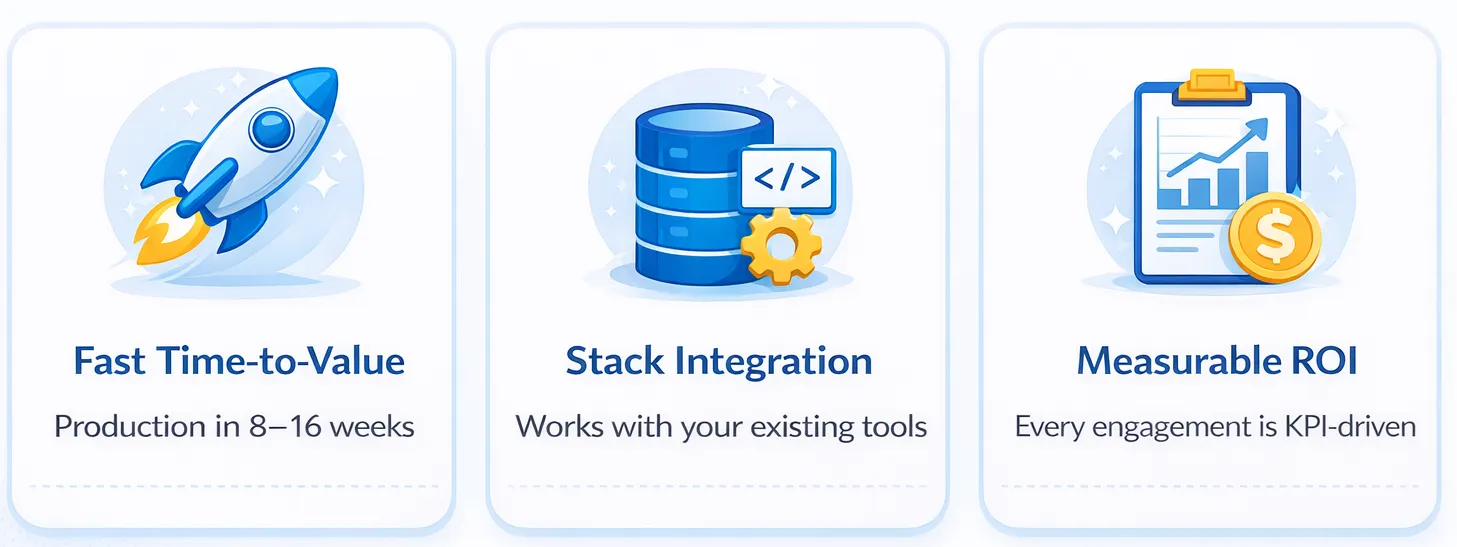

What partnering with NeuraMonks looks like by comparison

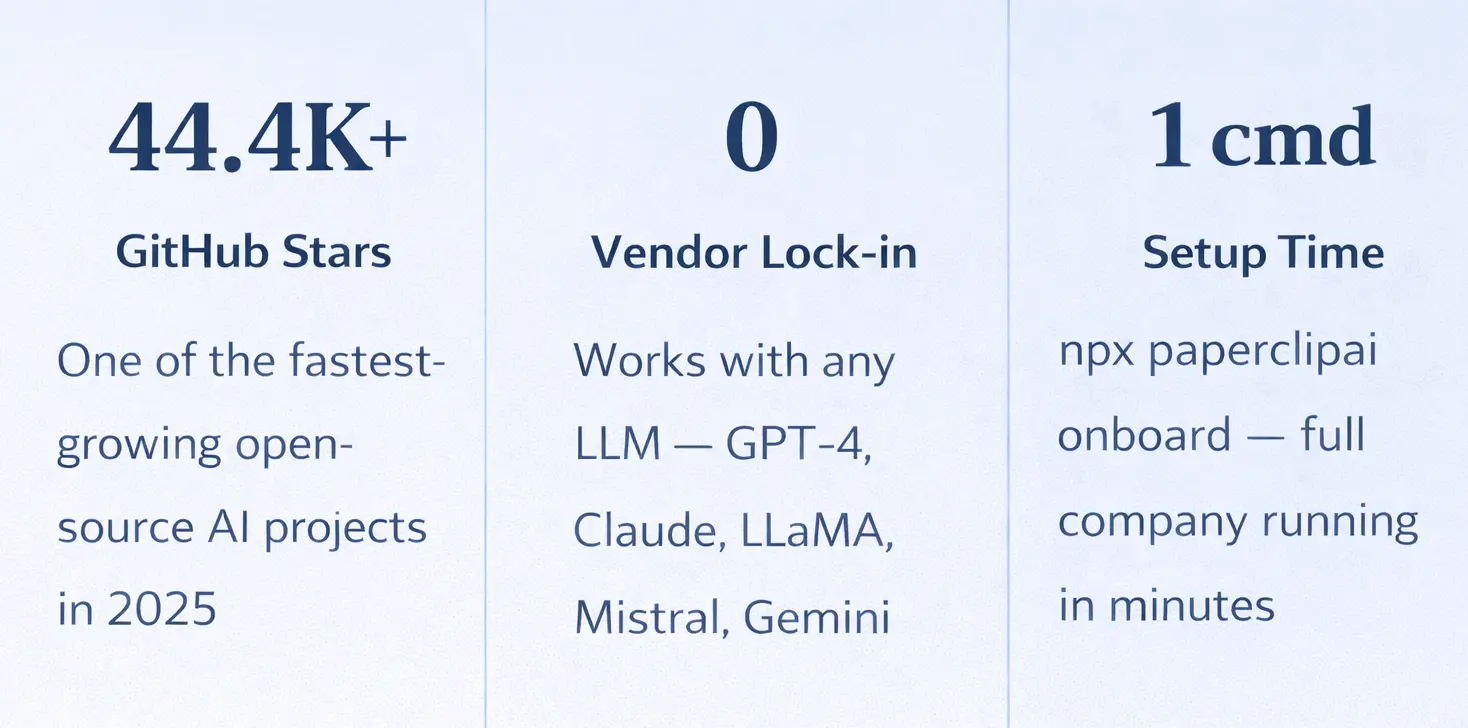

NeuraMonks works with companies that need production-grade AI solutions without the overhead of a full internal team. The engagement model is built around delivery, not headcount.

A typical mid-scope engagement — covering architecture, build, deployment, and ongoing iteration — runs between $150,000 and $180,000 annually. That's the full cost. No equity dilution, no benefits overhead, no 3-month ramp period while someone gets up to speed on your codebase.

The gap is significant: companies that partner rather than build typically see 60–70% lower first-year costs for comparable AI capability output.
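The savings range can be checked directly from the two cost ranges quoted in this post. The sketch below compares the least and most favorable pairings; it assumes both options deliver comparable output, which the figures themselves cannot confirm.

```python
# Year-one ranges quoted in this post.
build_low, build_high = 520_000, 700_000
partner_low, partner_high = 150_000, 180_000


def savings_fraction(build_cost: float, partner_cost: float) -> float:
    """Share of the in-house cost avoided by partnering."""
    return 1 - partner_cost / build_cost


# Least favorable: most expensive partner vs. cheapest in-house build.
least = savings_fraction(build_low, partner_high)
# Most favorable: cheapest partner vs. most expensive in-house build.
most = savings_fraction(build_high, partner_low)

print(f"First-year savings: {least:.0%} to {most:.0%}")
```

With these inputs the savings land between about 65% and 79%, so the 60–70% figure sits at the cautious end of what the quoted ranges imply.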

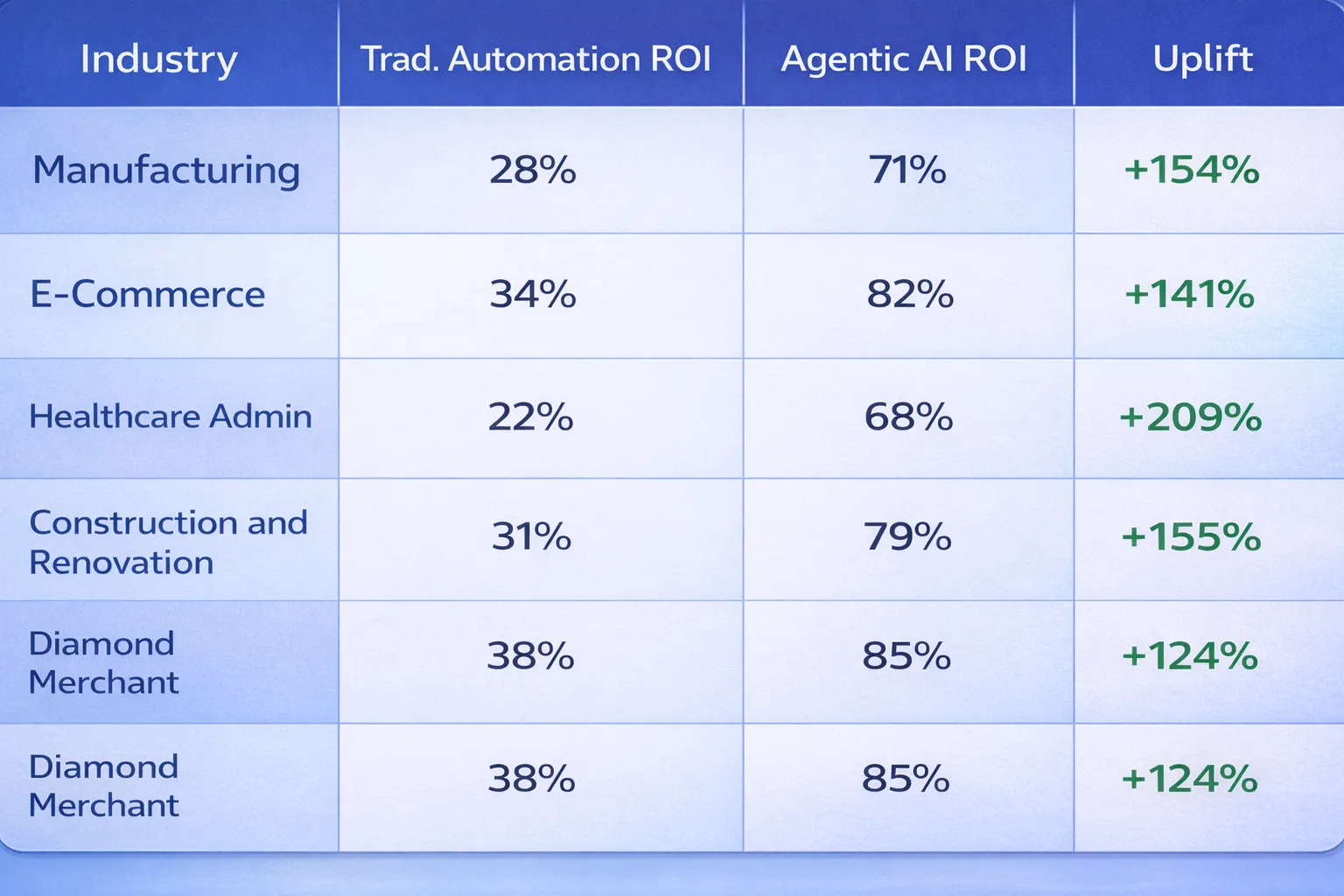

The numbers by vertical

Not every company has the same risk profile or timeline. Here's how the build-vs-partner calculation looks across three common ideal customer profiles (ICPs).

Construction

In-house requirements in construction are higher than most sectors. Project complexity around site safety compliance, equipment tracking, budget forecasting, and real-time coordination means you're not just hiring for AI capability — you're hiring for domain expertise in construction workflows too. A construction AI team built to handle on-site safety protocols and project management integration routinely pushes past $650,000 in year one.

The faster path: partner with a team that has already built construction-specific AI automation pipelines and can integrate directly with your project management systems and site operations from day one.

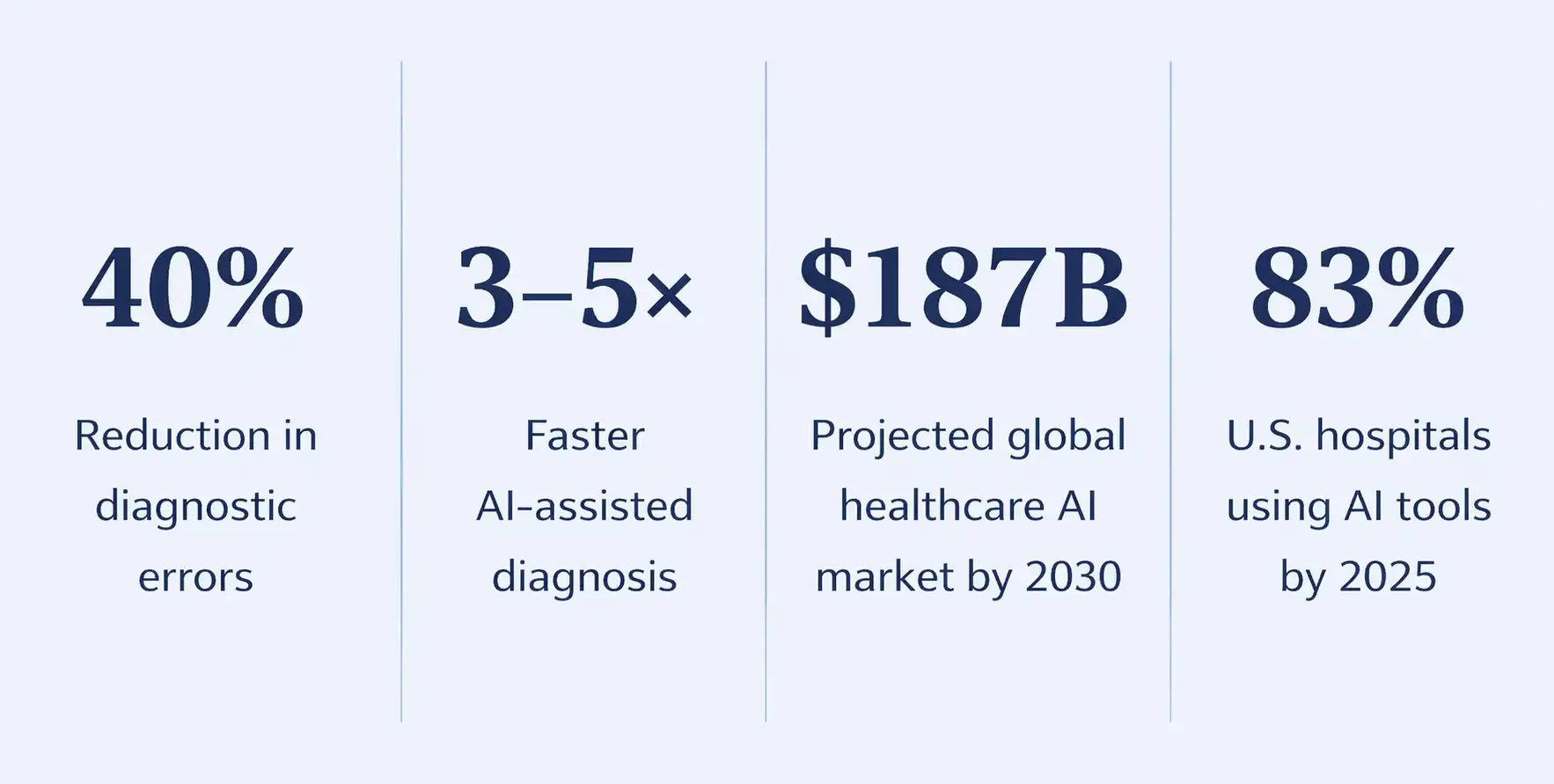

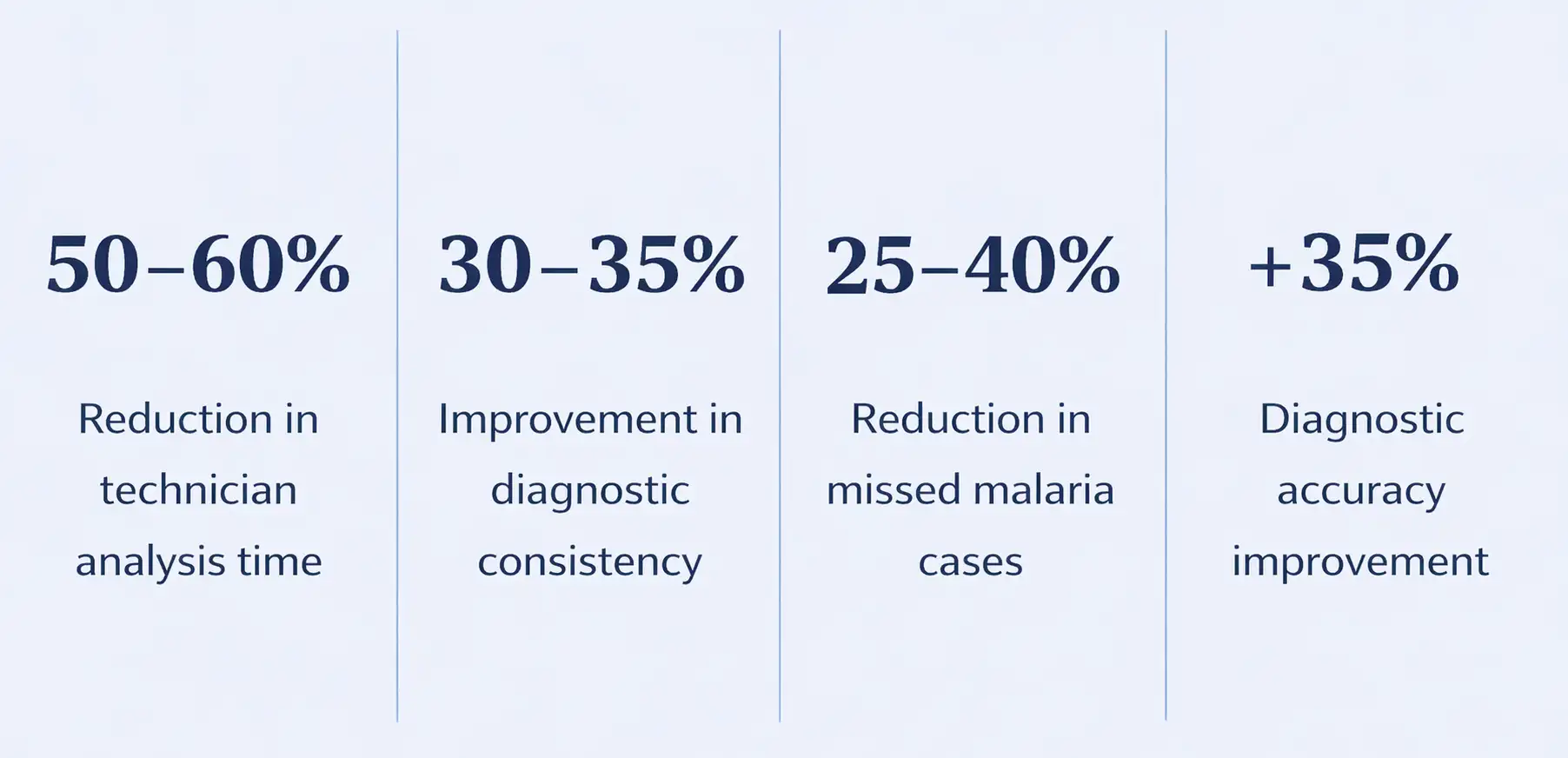

Healthcare and health tech

AI in healthcare runs into similar compliance walls: HIPAA, FDA guidance on software as a medical device, and the general caution that comes with patient data. Building internal expertise across clinical AI and compliance typically requires 12–18 months before a team is genuinely productive.

For health tech companies, the time cost is often more damaging than the budget cost. The window to ship competitive AI features is narrow.

SaaS and product companies

SaaS companies face a different pressure: speed. Product roadmaps move quarterly, and a hiring cycle that takes 5–6 months to fill three key roles means you're a year behind before the team is functional.

SaaS companies that work with an AI development company typically ship AI-powered features 3–4x faster than teams building the function from scratch.

The hidden costs nobody puts in the deck

Salary comparisons miss a lot. Here are the line items that tend to surprise finance teams mid-year:

Compute and infrastructure: LLM training and inference at production scale isn't cheap. AWS, GCP, or Azure bills for a team running real workloads regularly reach $8,000–$15,000 per month. In a partnership model, those costs are shared or bundled.

Tooling and licensing: Enterprise licenses for model monitoring, data labeling platforms, and vector database infrastructure add up. Expect $30,000–$60,000 annually for a mid-size team.

Attrition: Senior AI engineers are highly mobile. The average tenure for ML engineers at non-tech companies is under 2 years. Replacing a senior hire costs roughly 50–75% of their annual salary in recruiting, onboarding, and lost productivity.

Management overhead: Someone has to manage this team. If that's a CTO or VP of Engineering who's already stretched, the opportunity cost rarely shows up on a budget line — but it's real.
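To see how these line items add up over a year, here is a rough tally using the compute and tooling ranges above. The senior salary used for the attrition estimate is a hypothetical placeholder, since this post does not quote one, and management overhead is left out because it has no stated figure.

```python
from typing import Tuple


def annual_range(monthly_low: float, monthly_high: float) -> Tuple[float, float]:
    """Convert a monthly cost range into an annual one."""
    return monthly_low * 12, monthly_high * 12


# Ranges quoted above.
compute_low, compute_high = annual_range(8_000, 15_000)
tooling_low, tooling_high = 30_000, 60_000

recurring_low = compute_low + tooling_low
recurring_high = compute_high + tooling_high

# Hypothetical salary, used only to illustrate the 50-75% replacement cost.
ASSUMED_SENIOR_SALARY = 180_000
attrition_low = 0.50 * ASSUMED_SENIOR_SALARY
attrition_high = 0.75 * ASSUMED_SENIOR_SALARY

print(f"Compute + tooling per year: ${recurring_low:,} to ${recurring_high:,}")
print(f"Cost of one senior departure: ${attrition_low:,.0f} to ${attrition_high:,.0f}")
```

Even before any attrition, compute and tooling alone come to roughly $126,000 to $240,000 a year under these ranges.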

When building in-house actually makes sense

This isn't a one-size answer. There are cases where hiring internal AI talent is the right call.

If your core product is the AI — meaning the model is your IP and your competitive moat — you probably need to own that capability over time. Companies like this should plan for a 2–3 year build with heavy early investment.

If your data is so sensitive that it genuinely cannot leave your infrastructure under any circumstances, a fully internal team may be required despite the cost.

And if you're a large enterprise with runway and a clear 5-year AI roadmap, building internal centers of excellence makes strategic sense.

For everyone else — mid-size companies, fast-growth startups, product teams trying to ship AI features in the next 6 months — the math favors partnership.

What NeuraMonks actually builds

We have delivered AI solutions across NLP, computer vision, recommendation systems, and document intelligence. The team includes specialists in AI solutions architecture, model fine-tuning, and production deployment, not generalist developers who've read a few papers.

Projects typically include an initial discovery sprint (2–3 weeks), a prototype phase (4–6 weeks), and a production deployment phase with SLAs. The engagement doesn't end at launch: we maintain and iterate on deployed models as your data and use case evolve.

Stop doing the math wrong

The real question isn't "can we afford to hire AI talent?" It's "what does it cost us not to ship AI capability in the next 12 months?"

For most companies, the answer to that second question is market share, customer churn, or a product roadmap that looks outdated by the time it ships.

Ready to see what a scoped engagement actually looks like for your product?

Book a 30-minute conversation with the NeuraMonks team. No pitch deck, no sales cycle — just a straight conversation about whether partnership makes sense for where you are.

Start with the right model for your stage. Transform your AI roadmap from a budget problem into a shipping plan.

Let's skip the fluff.

If your company is seriously considering building out an AI capability right now, you've probably already done some back-of-napkin math. You've looked at a few LinkedIn profiles. Maybe you've talked to a recruiter. And somewhere in that process, the number got scary fast.

This post breaks down exactly why the "build it in-house" path costs between $500,000 and $700,000 in year one alone — and what the alternative actually looks like when you run the same numbers.

Why companies are getting this decision wrong right now

The AI hiring market in 2026 isn't the same as it was two years ago. Demand for machine learning engineers, AI architects, and LLM model specialists has outpaced supply significantly. Companies that started hiring in 2023 are still struggling to retain the people they brought on.

There's also a less-discussed problem: the skills you hire for today may not be the skills your product needs in 18 months. The field moves fast. An in-house team built around one architecture or framework can become a liability the moment the tooling shifts.

None of this means building in-house is wrong. It means you need to run the numbers before you commit.

What it actually costs to build an in-house AI team

Here's where most budget conversations go sideways — leaders compare a partner's annual retainer to a single engineer's salary, not to the full cost of the team you'd need to get comparable output.

A functional AI development team that can take a product from prototype to production typically requires at least 4–5 people.

Add recruiting costs ($25,000–$40,000 per senior hire), onboarding time (typically 3–4 months before meaningful output), tooling licenses, compute infrastructure, and management overhead — and a conservative estimate for year one lands between $520,000 and $700,000.

That's before you ship a single model to production.

What partnering with NeuraMonks looks like by comparison

NeuraMonks works with companies that need production-grade AI solutions without the overhead of a full internal team. The engagement model is built around delivery, not headcount.

A typical mid-scope engagement — covering architecture, build, deployment, and ongoing iteration — runs between $150,000 and $180,000 annually. That's the full cost. No equity dilution, no benefits overhead, no 3-month ramp period while someone gets up to speed on your codebase.

The gap is significant: companies that partner rather than build typically see 60–70% lower first-year costs for comparable AI capability output.
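The comparison can be checked directly from the two ranges quoted above. The snippet below is a minimal sketch that uses only those figures; the function name and the choice to compare opposite ends of each range are illustrative assumptions, not part of any NeuraMonks pricing model.

```python
# Year-one figures quoted in this post (USD).
IN_HOUSE_YEAR_ONE = (520_000, 700_000)   # conservative in-house estimate
PARTNER_ANNUAL = (150_000, 180_000)      # typical mid-scope engagement


def savings_range(in_house, partner):
    """Return (smallest, largest) fractional saving from partnering."""
    in_low, in_high = in_house
    p_low, p_high = partner
    smallest = 1 - p_high / in_low   # priciest partner vs cheapest in-house
    largest = 1 - p_low / in_high    # cheapest partner vs priciest in-house
    return smallest, largest


low, high = savings_range(IN_HOUSE_YEAR_ONE, PARTNER_ANNUAL)
print(f"First-year saving: {low:.0%} to {high:.0%}")
```

On these inputs the saving works out to roughly 65% to 79%, which sits at or above the 60–70% range cited above.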

The numbers by vertical

Not every company has the same risk profile or timeline. Here's how the build-vs-partner calculation looks across three common ICPs.

Construction

In-house requirements in construction are higher than most sectors. Project complexity around site safety compliance, equipment tracking, budget forecasting, and real-time coordination means you're not just hiring for AI capability — you're hiring for domain expertise in construction workflows too. A construction AI team built to handle on-site safety protocols and project management integration routinely pushes past $650,000 in year one.

The faster path: partner with a team that has already built construction-specific AI automation pipelines and can integrate directly with your project management systems and site operations from day one.

Healthcare and health tech

AI in healthcare runs into similar compliance walls: HIPAA, FDA guidance on software as a medical device, and the general caution that comes with patient data. Building internal expertise across clinical AI and compliance typically requires 12–18 months before a team is genuinely productive.

For health tech companies, the time cost is often more damaging than the budget cost. The window to ship competitive AI features is narrow.

SaaS and product companies

SaaS companies face a different pressure: speed. Product roadmaps move quarterly, and a hiring cycle that takes 5–6 months to fill three key roles means you're a year behind before the team is functional.

SaaS companies that work with an AI development company typically ship AI-powered features 3–4x faster than teams building the function from scratch.

The hidden costs nobody puts in the deck

Salary comparisons miss a lot. Here are the line items that tend to surprise finance teams mid-year:

Compute and infrastructure: LLM training and inference at production scale isn't cheap. AWS, GCP, or Azure bills for a team running real workloads regularly exceed $8,000–$15,000/month. In a partnership model, those costs are shared or bundled.

Tooling and licensing: Enterprise licenses for model monitoring, data labeling platforms, and vector database infrastructure add up. Expect $30,000–$60,000 annually for a mid-size team.

Attrition: Senior AI engineers are highly mobile. The average tenure for ML engineers at non-tech companies is under 2 years. Replacing a senior hire costs roughly 50–75% of their annual salary in recruiting, onboarding, and lost productivity.

Management overhead: Someone has to manage this team. If that's a CTO or VP of Engineering who's already stretched, the opportunity cost rarely shows up on a budget line — but it's real.
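To see how quickly these line items add up, the two that come with explicit dollar ranges can be annualized. This is a rough sketch, assuming the monthly compute range holds for all twelve months; attrition and management overhead are left out because this post gives no salary figures to base them on.

```python
# Ranges quoted above (USD). Attrition is left out because it depends on
# salary figures this post does not state.
COMPUTE_PER_MONTH = (8_000, 15_000)
TOOLING_PER_YEAR = (30_000, 60_000)


def annual_hidden_costs(compute_monthly, tooling_yearly):
    """Annualize compute and add tooling, returning (low, high) in USD."""
    low = compute_monthly[0] * 12 + tooling_yearly[0]
    high = compute_monthly[1] * 12 + tooling_yearly[1]
    return low, high


low, high = annual_hidden_costs(COMPUTE_PER_MONTH, TOOLING_PER_YEAR)
print(f"Compute + tooling: ${low:,} to ${high:,} per year")
```

That is $126,000 to $240,000 a year from compute and tooling alone, before a single salary is counted.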

When building in-house actually makes sense

This isn't a one-size answer. There are cases where hiring internal AI talent is the right call.

If your core product is the AI — meaning the model is your IP and your competitive moat — you probably need to own that capability over time. Companies like this should plan for a 2–3 year build with heavy early investment.

If your data is so sensitive that it genuinely cannot leave your infrastructure under any circumstances, a fully internal team may be required despite the cost.

And if you're a large enterprise with runway and a clear 5-year AI roadmap, building internal centers of excellence makes strategic sense.

For everyone else — mid-size companies, fast-growth startups, product teams trying to ship AI features in the next 6 months — the math favors partnership.

What NeuraMonks actually builds

We have delivered AI solutions across NLP, computer vision, recommendation systems, and document intelligence. The team includes specialists in AI solutions architecture, model fine-tuning, and production deployment, not generalist developers who've read a few papers.

Projects typically include an initial discovery sprint (2–3 weeks), a prototype phase (4–6 weeks), and a production deployment phase with SLAs. The engagement doesn't end at launch: we maintain and iterate on deployed models as your data and use case evolve.

Stop doing the math wrong

The real question isn't "can we afford to hire AI talent?" It's "what does it cost us not to ship AI capability in the next 12 months?"

For most companies, the answer to that second question is market share, customer churn, or a product roadmap that looks outdated by the time it ships.

Ready to see what a scoped engagement actually looks like for your product?

Book a 30-minute conversation with the NeuraMonks team. No pitch deck, no sales cycle — just a straight conversation about whether partnership makes sense for where you are.

Start with the right model for your stage. Transform your AI roadmap from a budget problem into a shipping plan.

OSHA Doesn't Inspect Your Safety Culture. They Inspect Your Paperwork.

Two compelling hooks covering documentation failure statistics and the 5 critical systems OSHA officers inspect.

Is Yours Ready?

Every construction site in America lives under a single compliance reality: OSHA doesn't walk your jobsite looking for the safety culture you've spent years building. They walk in looking for your paperwork — your SDS logs, your incident records, your training certifications, your hazard communication files. If those documents are missing, incomplete, or disorganized, the fine lands on your desk. Not on your safety philosophy.

For mid-to-large construction firms operating across multiple sites in states like Texas, California, Florida, and New York, the documentation burden is not a back-office problem. It is a frontline business risk. And in 2025, the companies that are passing OSHA inspections with zero citations are not the ones with the most dedicated safety managers — they are the ones that have automated the paper trail with AI in Construction systems that never miss a record, never lose a file, and never forget a deadline.

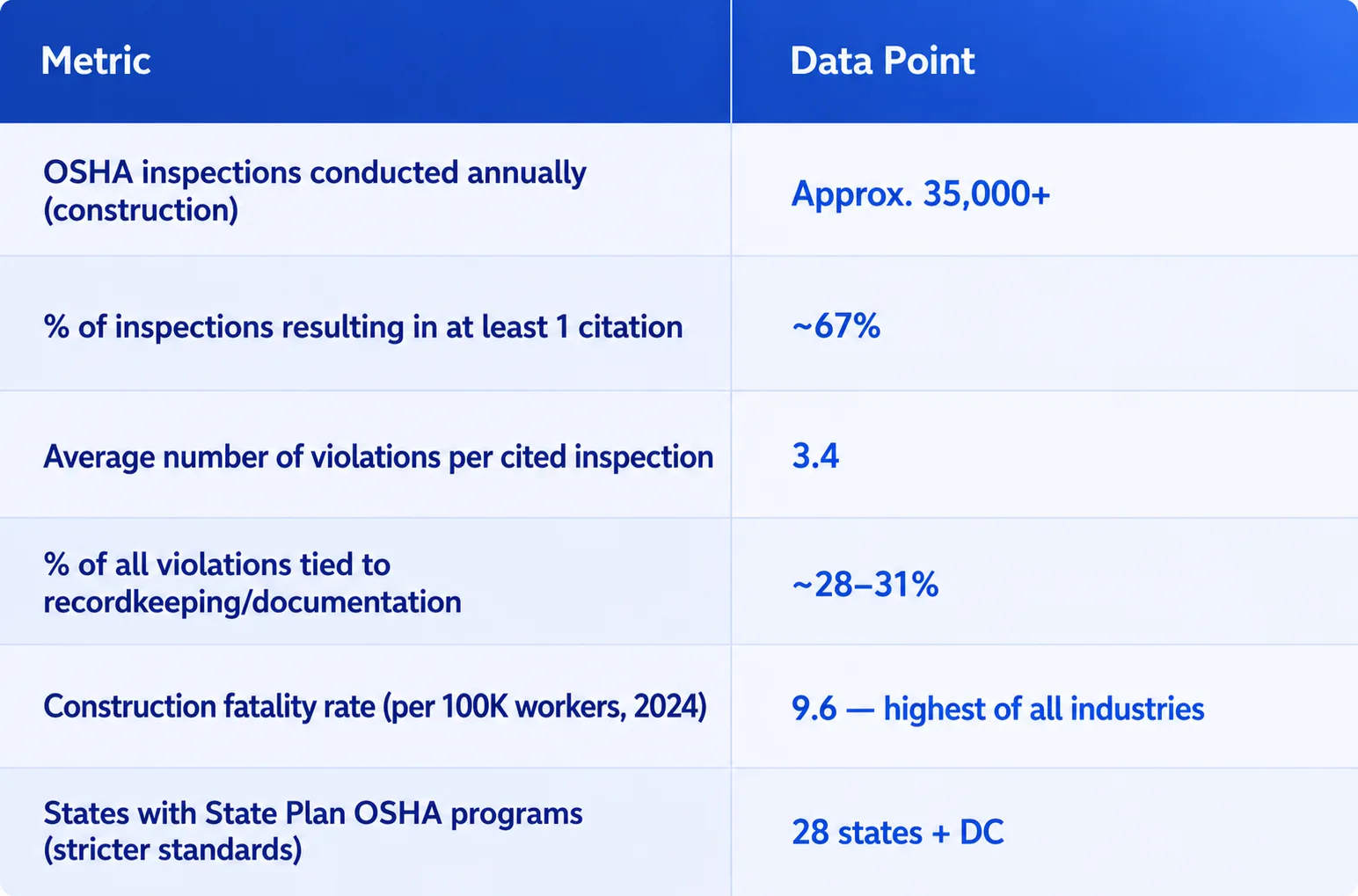

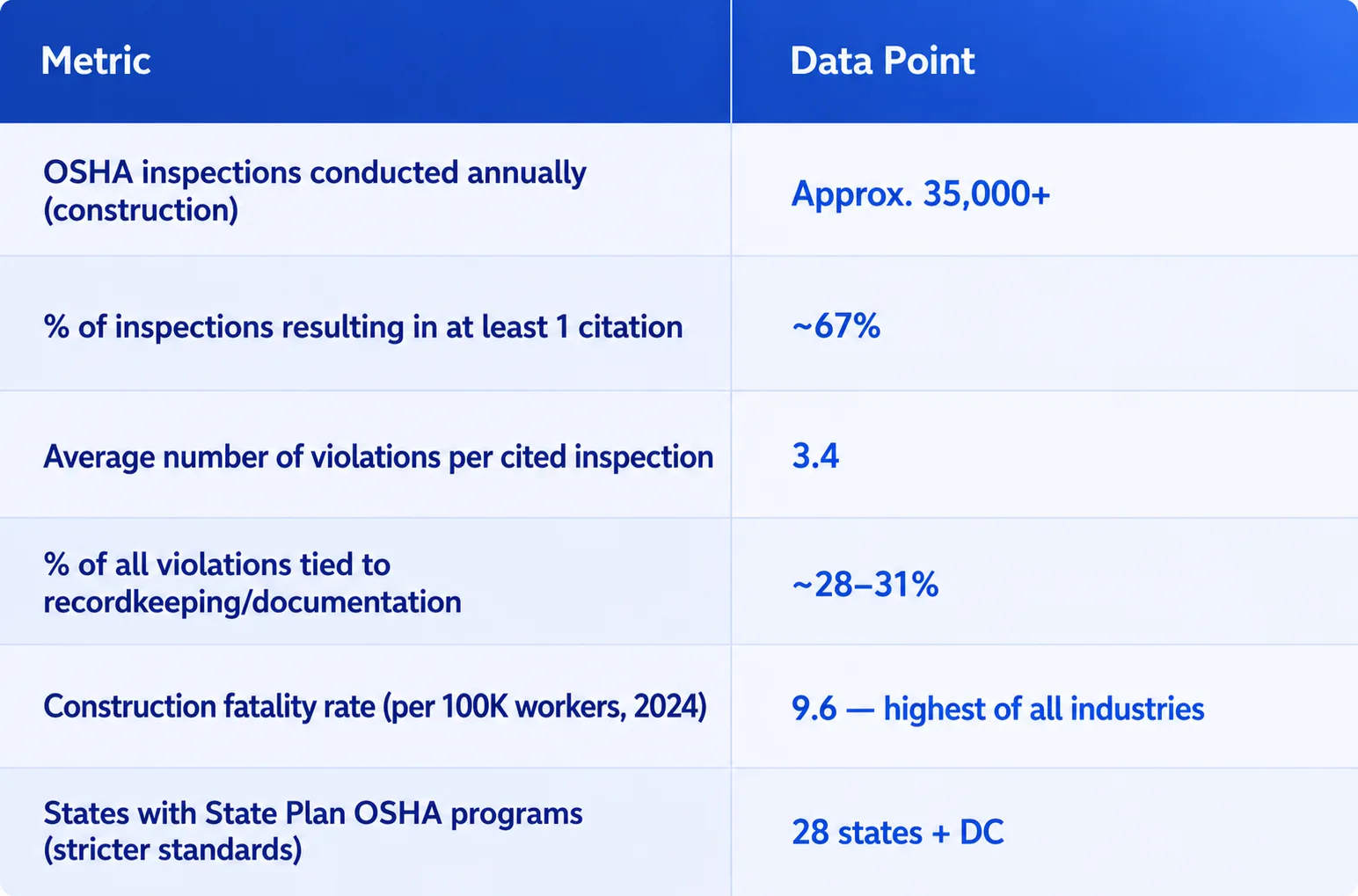

The Real Cost of OSHA Non-Compliance for U.S. Construction Firms

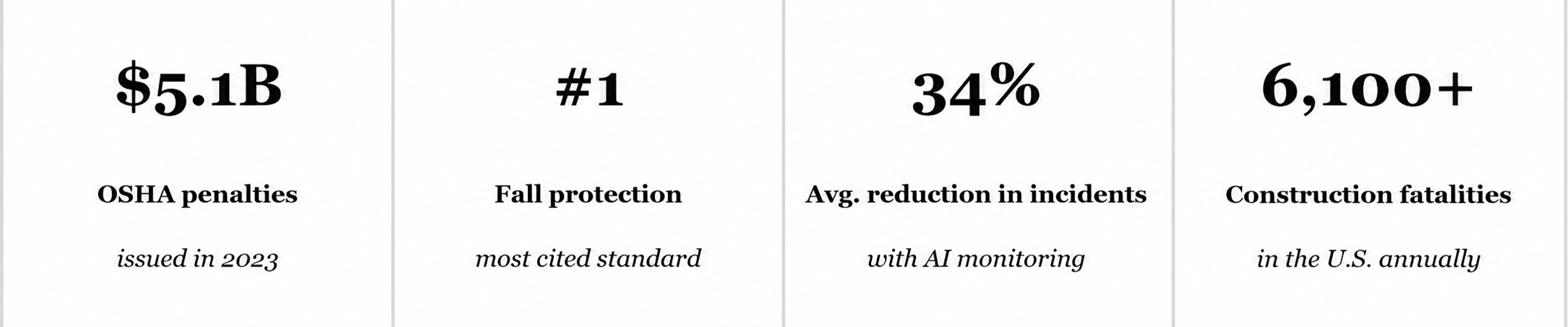

Before diving into how technology solves this, let's put numbers on the problem. According to OSHA's published penalty structure and enforcement data, the financial exposure for construction companies is significant and growing.

These numbers don't account for litigation exposure, reputational damage, or the project delays that follow a stop-work order. The financial argument for getting documentation right the first time is overwhelming — and the operational argument for doing it with AI solutions is becoming equally clear.

What OSHA Compliance Officers Actually Look For On-Site

Understanding what triggers citations helps clarify exactly where AI in Construction transforms exposure into protection. OSHA compliance officers prioritize documentation audits across these primary categories:

1. Hazard Communication (HazCom) — 29 CFR 1910.1200

Every chemical on your site must have a Safety Data Sheet. Every worker handling that chemical must have documented training. Compliance officers will ask workers directly — and the worker's answer must match what's in your training records.

2. Injury and Illness Recordkeeping — 29 CFR 1904

OSHA 300, 300A, and 301 logs must be maintained with accuracy. Any discrepancy between reported incidents and what workers describe during an inspection creates immediate escalation. Under-reporting can be cited as a willful violation.

3. Fall Protection Training Records — 29 CFR 1926.503

For any worker exposed to fall hazards, training must be documented with the trainer's name, date, and the worker's acknowledgment. Verbal assurances that "everyone was trained" are not acceptable substitutes.

4. Equipment Inspection Logs — 29 CFR 1926.20

Cranes, scaffolding, aerial lifts, and powered equipment require documented pre-shift inspection logs. A missing log for a single piece of equipment on inspection day can escalate to a program-level citation.

5. Emergency Action Plans and Site Safety Plans

These must be current, site-specific, and accessible. A plan from a previous project pinned to the trailer wall is one of the fastest ways to receive a citation for a "deficient" safety program.

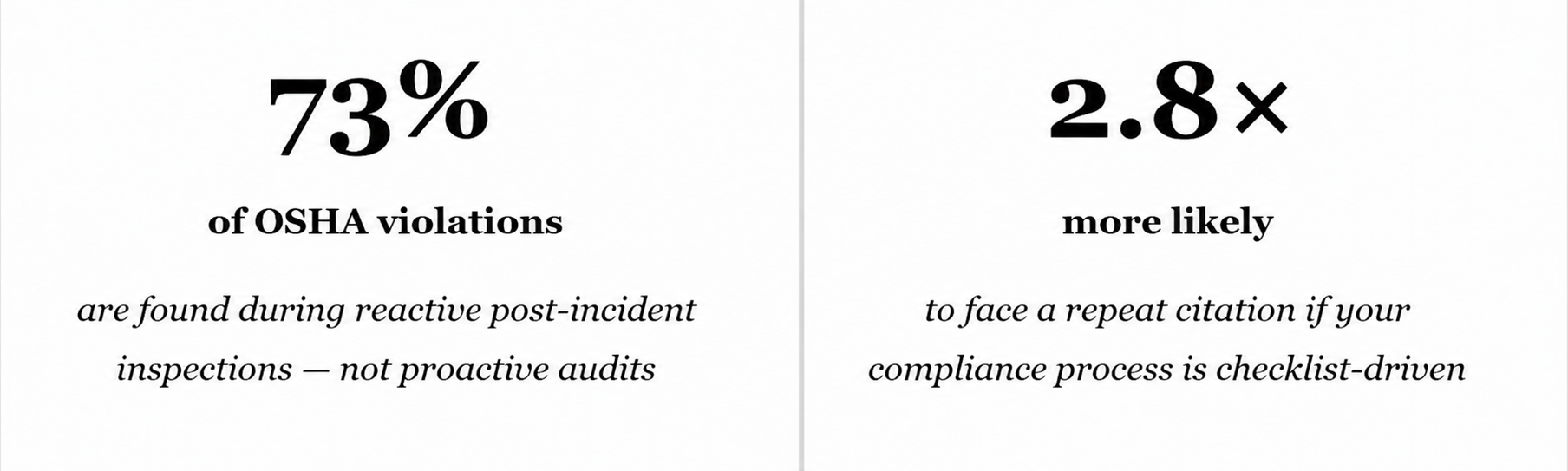

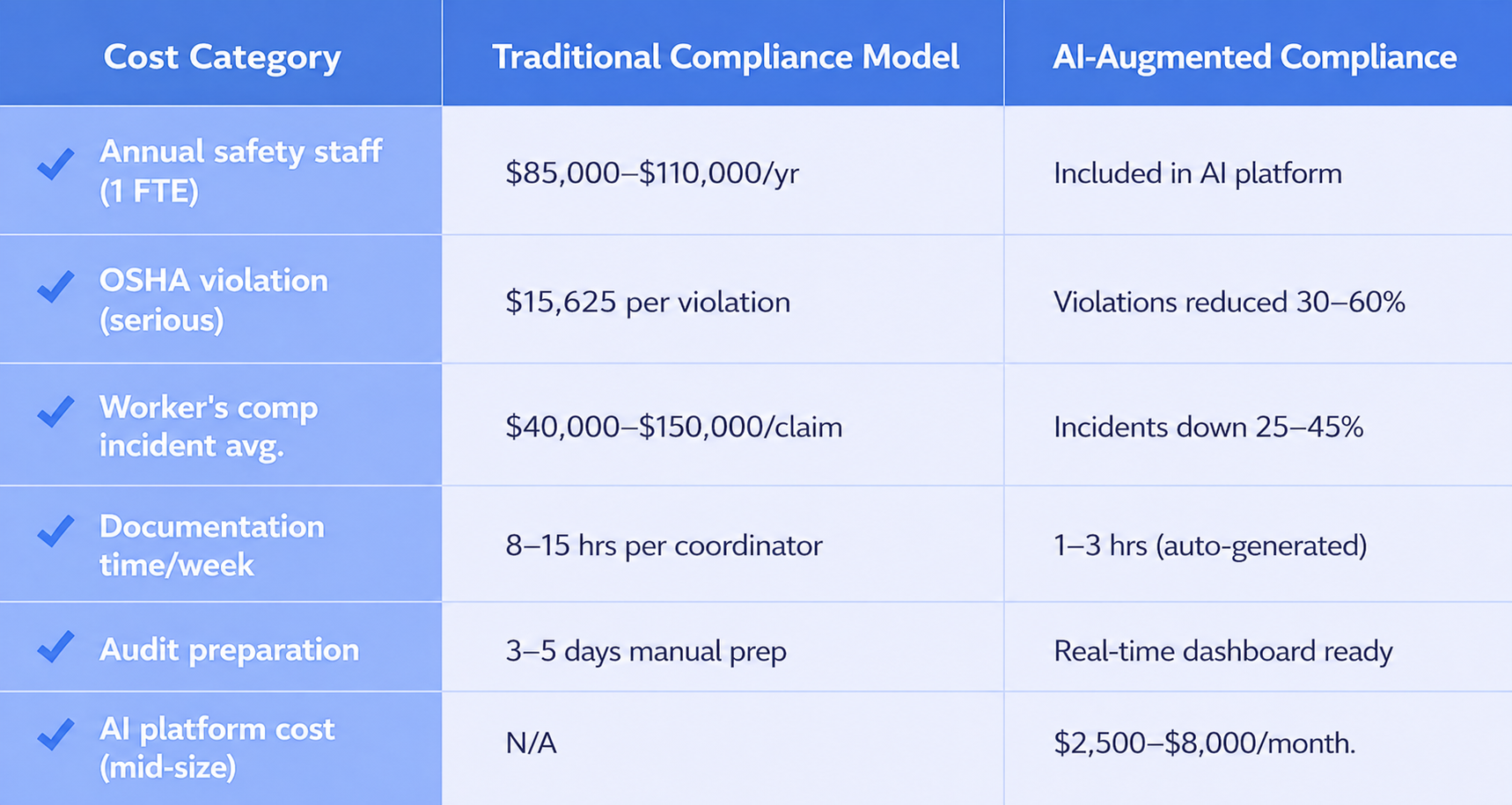

⚠️ Industry Insight: 73% of construction OSHA citations in 2024 were documentation failures, not physical safety failures. The hazard had been identified. The record simply wasn't there.

How AI in Construction Eliminates Documentation Gaps Before They Become Citations

This is where the operational shift happens. Traditional compliance management relies on safety managers manually collecting, organizing, and updating records across active job sites. With dozens of workers, rotating subcontractors, multiple equipment vendors, and state-specific regulatory variations, the manual approach creates structural gaps — not due to negligence, but due to volume.

Modern Artificial Intelligence in Construction compliance platforms address this at every layer:

Automated Incident Logging

Rather than relying on workers or supervisors to manually complete OSHA 301 forms within the required 7-day window, AI-powered systems capture incident data at the point of reporting — via mobile app, voice input, or structured digital forms — and auto-populate the correct OSHA record format. The system timestamps the submission, links it to the relevant project and jobsite, and flags any field that would fail a compliance review before the record is saved.
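The pre-save check described above can be sketched in a few lines. The field names below are hypothetical and do not reproduce the official Form 301 layout, and the 7-day window is measured from the incident date as a simplification.

```python
from dataclasses import dataclass, fields
from datetime import date, timedelta
from typing import Optional

RECORDING_WINDOW = timedelta(days=7)  # the 7-day window mentioned above


@dataclass
class IncidentRecord:
    # Hypothetical field names, not the official Form 301 layout.
    employee_name: Optional[str]
    incident_date: Optional[date]
    description: Optional[str]
    project_id: Optional[str]
    jobsite: Optional[str]


def review_flags(record: IncidentRecord, submitted_on: date) -> list[str]:
    """Return human-readable problems to resolve before saving the record."""
    flags = [f"missing field: {f.name}" for f in fields(record)
             if not getattr(record, f.name)]
    # Simplification: the window is counted from the incident date.
    if record.incident_date and submitted_on - record.incident_date > RECORDING_WINDOW:
        flags.append("submitted outside the 7-day recording window")
    return flags


record = IncidentRecord("J. Doe", date(2025, 3, 3), "", "P-104", "Tower B")
print(review_flags(record, submitted_on=date(2025, 3, 12)))
```

Here the empty description and the nine-day delay are both flagged, so the supervisor sees the gaps at submission time rather than during an audit.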

Training Certification Tracking with Expiry Alerts

Every worker's credentials — OSHA 10, OSHA 30, forklift certification, fall protection training, confined space entry — are maintained in a centralized, searchable database. When a certification approaches expiry, the worker, their supervisor, and the safety manager receive automated notifications. No worker enters a restricted area with expired credentials. The paper trail updates itself.
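A minimal sketch of the expiry logic follows, assuming a 30-day warning period and a simple rule that any expired credential blocks restricted-area access. Both are illustrative choices, and the class and function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Certification:
    worker: str
    name: str        # e.g. "Forklift" or "Fall protection"
    expires_on: date


def expiry_status(cert: Certification, today: date,
                  warn_within: timedelta = timedelta(days=30)) -> str:
    """Classify a credential as 'expired', 'expiring', or 'valid'."""
    if cert.expires_on < today:
        return "expired"
    if cert.expires_on - today <= warn_within:
        return "expiring"
    return "valid"


def may_enter_restricted_area(certs: list[Certification], today: date) -> bool:
    """Allow entry only if none of the worker's credentials has expired."""
    return all(expiry_status(c, today) != "expired" for c in certs)
```

A scheduled job could run `expiry_status` over every record each morning and notify the worker, supervisor, and safety manager for anything marked "expiring".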

Equipment Inspection Record Automation

Pre-shift inspection checklists are completed digitally on mobile devices. Records are geo-tagged to the site, time-stamped, linked to the specific equipment asset, and archived automatically. If an inspection is missed, the system flags it within hours — not when an OSHA officer arrives.
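The missed-inspection flag reduces to a comparison between the assets in use on a shift and the inspection timestamps on file. The sketch below assumes an inspection counts as "pre-shift" if it was logged within two hours before the shift starts; that threshold and the asset IDs are hypothetical.

```python
from datetime import datetime, timedelta


def missed_inspections(shift_start: datetime,
                       assets_in_use: set[str],
                       inspections: dict[str, datetime],
                       lookback: timedelta = timedelta(hours=2)) -> list[str]:
    """Return assets with no inspection logged shortly before this shift.

    `inspections` maps an asset ID to its most recent inspection timestamp.
    """
    earliest_ok = shift_start - lookback
    return sorted(
        asset for asset in assets_in_use
        if asset not in inspections
        or not (earliest_ok <= inspections[asset] <= shift_start)
    )


shift = datetime(2025, 3, 3, 6, 0)
logged = {
    "crane-01": datetime(2025, 3, 3, 5, 30),   # inspected this morning
    "lift-07": datetime(2025, 3, 2, 5, 30),    # yesterday's log only
}
print(missed_inspections(shift, {"crane-01", "lift-07", "scaffold-3"}, logged))
```

The aerial lift with a stale log and the scaffold with no log at all are both returned, which is the list a supervisor would receive within the shift.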

LLM-Powered Compliance Guidance

The most advanced platforms now deploy an LLM layer that interprets regulatory language in real time and provides site-specific guidance to safety managers. When a regulation changes, as OSHA rules frequently do, the platform updates its interpretation automatically and flags any existing documentation that needs revision. Safety managers stop researching compliance language manually and start receiving precise answers to their specific situations.

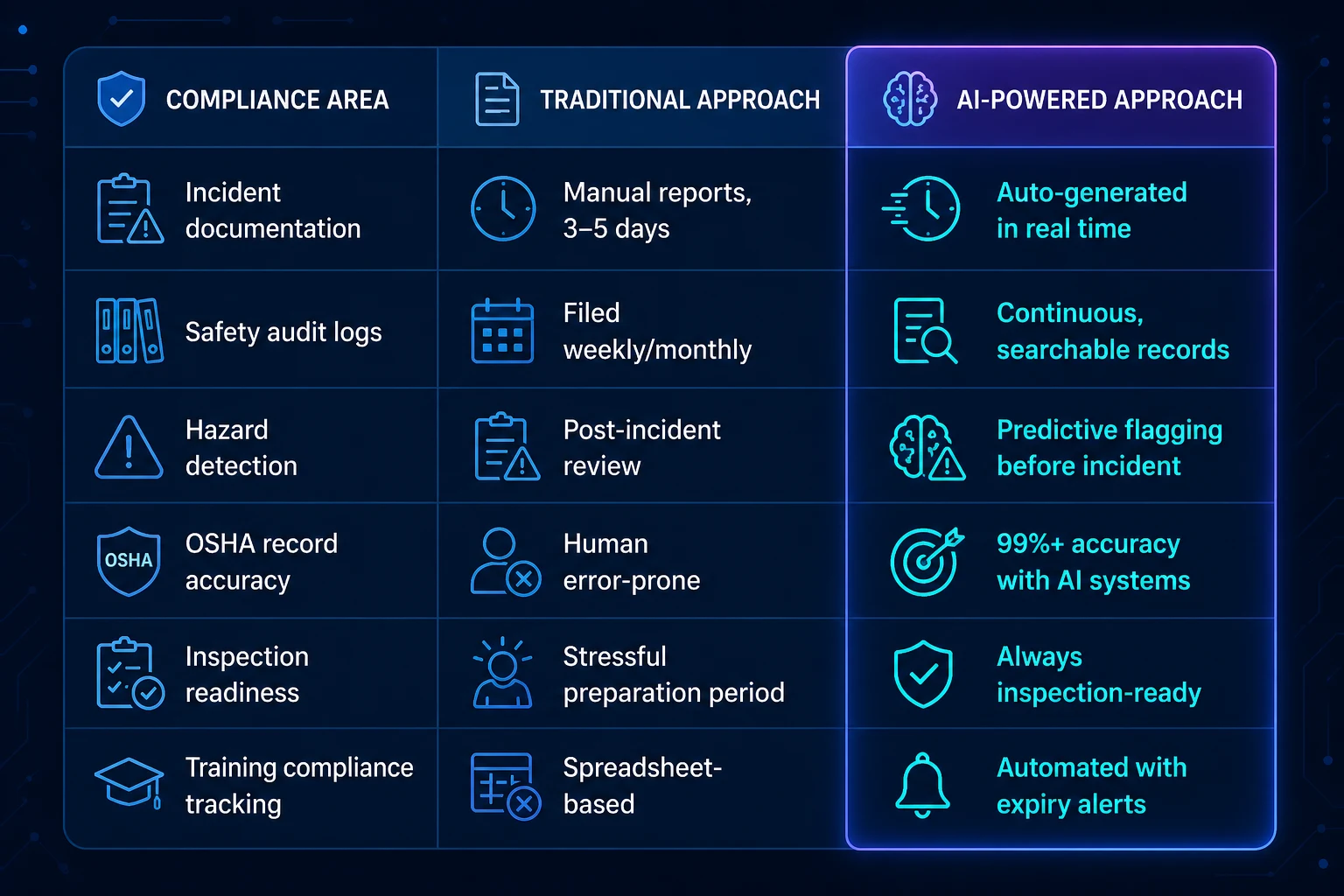

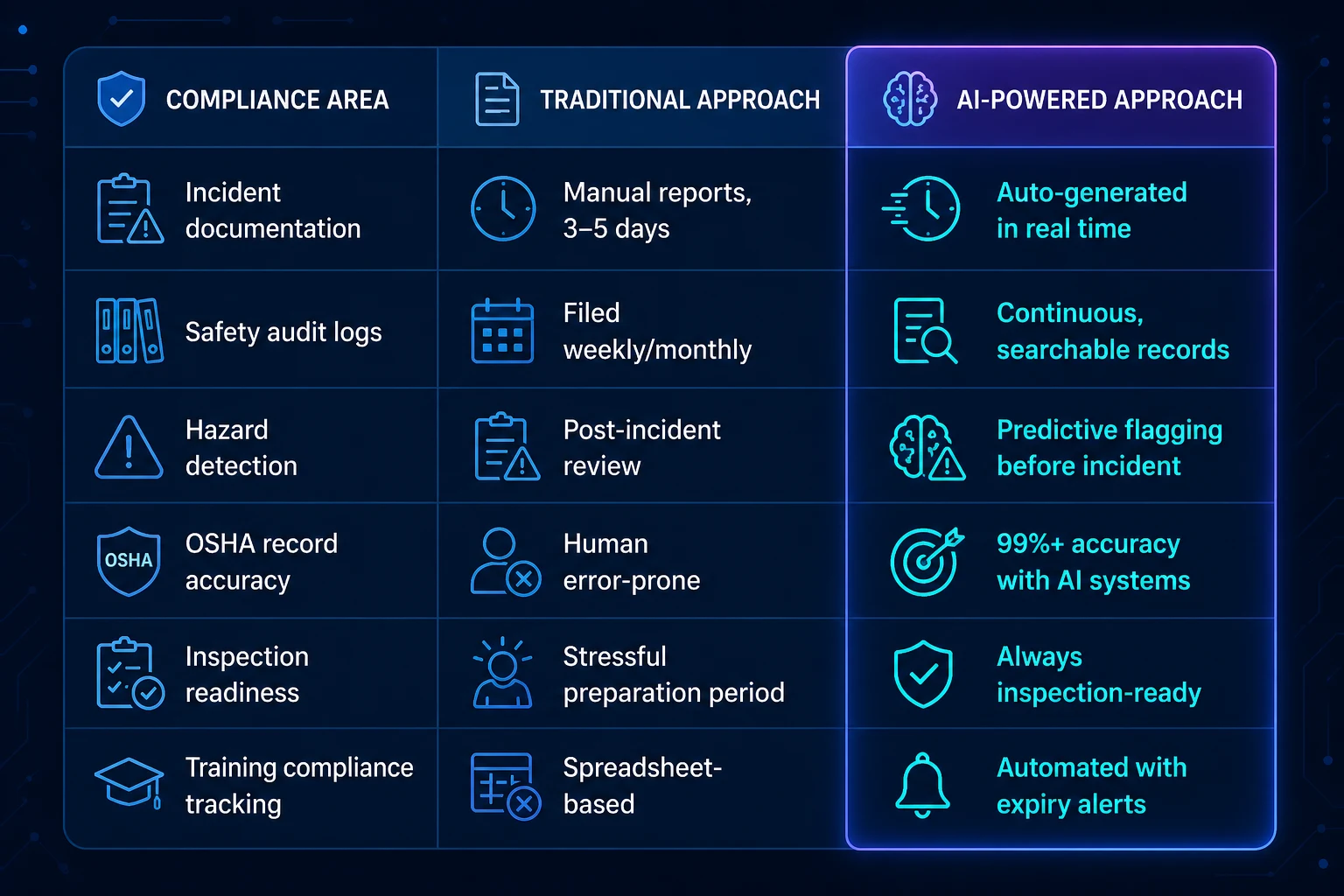

Traditional vs. AI-Powered Compliance: A Direct Comparison

For construction companies evaluating whether an investment in AI compliance infrastructure is justified, this side-by-side view reflects what firms across the U.S. are experiencing:

The pattern is consistent: AI-powered systems do not replace your safety program. They make your safety program provable.

The 5 Documentation Systems Every U.S. Construction Site Needs Right Now

Whether you are operating in Houston, Los Angeles, Chicago, or Charlotte, these five documentation systems are what OSHA compliance officers prioritize when they arrive at a construction site. If all five are automated, organized, and immediately producible, your inspection ends quickly. If any of them is incomplete, your inspection expands.

- Injury and Illness Log (OSHA 300 series) — maintained year-round, not reconstructed at year-end

- Hazard Communication Program with current SDS files for all chemicals on site

- Fall Protection Training Records with trainer credentials and worker acknowledgment signatures

- Equipment Inspection Logs: per-shift, per-asset, timestamped, and retained for a minimum of 3 years

- Site-Specific Emergency Action Plan updated for current project conditions, accessible within 30 seconds

Pro Note: OSHA compliance officers can request any of these records within minutes of arriving. If retrieval takes longer than 10–15 minutes, it signals a disorganized program — even if the records technically exist. Speed of retrieval is itself an audit factor.

NeuraMonks: Purpose-Built AI for Construction Compliance Workflows

NeuraMonks delivers AI systems specifically engineered for industries where documentation accuracy is non-negotiable. For construction firms operating under OSHA's regulatory framework, NeuraMonks has built compliance intelligence into every layer of the workflow — from incident capture to record retrieval to audit preparation.

What separates NeuraMonks from generic software platforms is the application of computer vision, natural language processing, and predictive analytics to the actual documentation workflows your safety teams use every day. The platform does not ask your team to learn new behavior. It automates the documentation that surrounds their existing behavior — and ensures that documentation holds up under inspection.

What NeuraMonks Delivers for Construction Safety Compliance

- Real-time incident documentation that meets OSHA 300-series format requirements automatically

- Computer vision inspection of equipment and site conditions with auto-logged results

- Worker credential management with multi-site visibility and certification expiry automation

- Regulatory update monitoring across all U.S. OSHA regional offices and federal standards

- Instant audit-ready document packages — producible in under 3 minutes for any inspection

- Integration with existing project management platforms including Procore, Autodesk Build, and Viewpoint

Why "We've Never Had a Problem" Is the Most Dangerous Compliance Strategy

The single most common reason construction companies delay investing in compliance automation is a clean history. If you haven't received a major citation in 3 years, the urgency feels abstract. But OSHA enforcement patterns in 2024 and 2025 tell a different story.

OSHA has increased its use of programmed inspections, meaning officers arrive without a prior complaint or referral trigger. They are responding to industry-wide data suggesting that documentation practices have not kept pace with workforce growth and subcontractor complexity. Your site's clean record is a reflection of inspection timing, not documentation quality. The next inspection will test the documentation, not the culture.

Building a Compliance Infrastructure That Scales With Your Sites

For general contractors managing multiple active projects across different states, the compliance challenge compounds quickly. A crew working in California operates under Cal/OSHA standards that exceed federal requirements. A site in Texas operates under federal OSHA directly. A project in Washington State has its own Department of Labor & Industries framework.

Manual systems cannot track these variations reliably across a growing portfolio. AI in Construction compliance platforms can. The configuration layer of these systems maintains a regulatory ruleset for each active jurisdiction and applies the correct standard automatically to each site's documentation requirements. Your safety manager in Dallas can apply California compliance rules to the Pasadena project without needing to research them manually.
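At its simplest, a configuration layer like this is a lookup keyed on where the site is, not where the manager sits. The sketch below covers only the three jurisdictions named in this section; the mapping and function name are hypothetical, and a real ruleset would carry the standards themselves rather than just the regulator's name.

```python
# Regulators named in this section. Every other state falls back to federal
# OSHA here purely to keep the sketch short, which is not accurate for all
# states that run their own plans.
STATE_REGULATOR = {
    "CA": "Cal/OSHA",
    "TX": "Federal OSHA",
    "WA": "Washington State Department of Labor & Industries",
}


def regulator_for(site_state: str) -> str:
    """Pick the ruleset owner from the site's state, not the manager's."""
    return STATE_REGULATOR.get(site_state.upper(), "Federal OSHA")


# A Dallas-based manager reviewing a Pasadena, California project.
print(regulator_for("ca"))
```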

This is the core value proposition of bringing AI solutions into your compliance infrastructure: it removes the human bottleneck from a process that cannot afford human error.

Your Next Inspection Is Already Scheduled — Your Records Should Be, Too

OSHA doesn't announce inspections. They arrive. And when they do, the firms that walk through that process in under an hour — with full documentation, zero missing records, and zero citation exposure — are the firms that have systematically addressed the paper trail problem.

NeuraMonks works with construction firms across the United States to build compliance documentation systems that function 24/7, scale across every active site, and produce inspection-ready records at any moment. The gap between your current documentation process and an audit-proof one is not a personnel gap. It is a technology gap.

Your Compliance Record Has a Gap Right Now. You just don't know where it is yet — but an OSHA officer will.

Examine What NeuraMonks Has Delivered for Construction Safety Teams →

Schedule a Construction AI Compliance Scoping Call

AI for OSHA Compliance: How Smart Contractors Are Reducing Risk Without Growing Their Safety Team

Contractors using AI vision systems to automate OSHA compliance are reducing violations, avoiding penalties, and improving EMR scores—without hiring more safety staff.

There is a quiet revolution happening on U.S. job sites. It does not involve adding a dozen safety officers or burying crews in more paperwork. It involves plugging in computer vision cameras, connecting them to a compliance engine, and letting AI in Construction do the continuous watching that no human team can sustain across a 10-acre site at 6 AM.

OSHA's penalty structure has never been steeper. A single willful violation now carries fines up to $156,259. Repeat citations compound fast. For mid-size contractors running 4–8 active projects, the risk is existential — not just financial. Yet the traditional response (hire more safety staff) is both expensive and slow. The smarter contractors are choosing a third path.

Why Traditional Compliance Methods Are Breaking Down

Safety management on construction sites has historically relied on periodic walkthroughs, manual checklists, and reactive incident reports. The fundamental problem: a safety manager can only be in one place at one time. On a large commercial project with 200+ workers across multiple floors and trades, continuous human oversight is mathematically impossible.

The compliance gap is real. OSHA's own data shows that the majority of violations are discovered after something goes wrong: a fall, a struck-by incident, a scaffold failure. At that point, the fine is the least of your problems. Workers' comp claims, project delays, litigation, and reputational damage can cost 10–50x the original penalty.

We had good safety culture but a terrible visibility problem. We couldn't see what we couldn't see.

— Safety Director, top-20 U.S. general contractor

What AI in Construction Actually Looks Like for Compliance

AI in Construction compliance is not a futuristic concept. It is deployable today, and contractors across the U.S. are using it on active projects. Here is what the technology stack looks like in practice:

Core AI Compliance Capabilities — 2024 Deployments

1. Real-Time PPE Detection — Cameras identify missing hard hats, vests, gloves, and eye protection. Workers are flagged within seconds, not hours.

2. Hazardous Zone Monitoring — Geofencing and computer vision alert supervisors when workers enter exclusion zones without authorization or proper equipment.

3. Fall Risk Analysis — Models detect unprotected edges, missing guardrails, and improper ladder use. Alerts are issued before an incident occurs.

4. Automated OSHA Documentation — Incident logs, near-miss reports, and inspection records are generated automatically from sensor and camera data, reducing manual documentation time by up to 80%.

5. Predictive Risk Scoring — Machine learning models score each work zone daily based on crew density, task type, weather, and historical incident patterns — helping you deploy safety resources where they are needed most.
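
To make item 2 (Hazardous Zone Monitoring) concrete, here is a minimal sketch of one common way to check whether a detected worker is standing inside an exclusion zone: a ray-casting point-in-polygon test on the bottom-centre of the worker's bounding box. The `Detection` fields, the `zone_alerts` helper, and the pixel-coordinate zone are illustrative assumptions, not a description of any particular vendor's pipeline.

```python
from dataclasses import dataclass

Point = tuple[float, float]


def point_in_polygon(point: Point, polygon: list[Point]) -> bool:
    """Ray casting: count the polygon edges crossed by a ray going right from the point."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point can be crossed.
        if (y1 > y) != (y2 > y):
            # x coordinate where this edge meets that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


@dataclass
class Detection:
    worker_id: str       # hypothetical tracking ID assigned by the vision model
    foot_point: Point    # bottom-centre of the bounding box, in image pixels
    authorized: bool     # hypothetical flag, e.g. from a badge or permit lookup


def zone_alerts(detections: list[Detection], zone: list[Point]) -> list[str]:
    """Return IDs of unauthorized workers whose foot point lies inside the zone."""
    return [
        d.worker_id
        for d in detections
        if not d.authorized and point_in_polygon(d.foot_point, zone)
    ]


exclusion_zone = [(0, 0), (10, 0), (10, 10), (0, 10)]
frame = [
    Detection("w1", (5, 5), authorized=False),   # inside, not authorized
    Detection("w2", (15, 5), authorized=False),  # outside the zone
    Detection("w3", (5, 5), authorized=True),    # inside, but authorized
]
alerts = zone_alerts(frame, exclusion_zone)
print(alerts)
```

In a real deployment the zone polygon would be redrawn as the site layout changes, which is one reason site-specific calibration matters.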

The Real Cost of Non-Compliance vs. the Cost of AI Implementation

Decision-makers often frame this as a budget question. The actual math points firmly in one direction.

The ROI calculation is not close. A contractor running 5 projects who avoids 3 serious OSHA citations per year (3 × $15,625 = $46,875 saved) and 1 workers' comp claim ($75,000 average) is already clearing $120,000+ a year in avoided costs against a platform investment that typically runs $5,000–$8,000/month ($60,000–$96,000 a year) across all sites. Averaged over the year, avoided costs of roughly $10,000 a month exceed the platform fee from the first quarter on.
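
The arithmetic behind that comparison can be laid out in a few lines. This is a rough sketch using only the figures quoted above; the per-citation amount is simply $46,875 divided by 3, and the function name and parameters are illustrative.

```python
def roi_summary(
    citations_avoided=3,
    fine_per_citation=15_625,          # $46,875 / 3, as implied by the text
    comp_claims_avoided=1,
    cost_per_claim=75_000,             # average claim figure quoted in the text
    monthly_fee_range=(5_000, 8_000),  # platform cost across all sites
):
    """Compare annual avoided costs with the annual platform fee."""
    avoided = citations_avoided * fine_per_citation + comp_claims_avoided * cost_per_claim
    low_fee, high_fee = (12 * fee for fee in monthly_fee_range)
    return {
        "avoided_per_year": avoided,
        "platform_per_year": (low_fee, high_fee),
        # Worst case uses the high fee, best case the low fee.
        "net_per_year": (avoided - high_fee, avoided - low_fee),
    }


summary = roi_summary()
for name, value in summary.items():
    print(name, value)
```

On these inputs the avoided costs come to $121,875 a year against $60,000–$96,000 in platform fees. Swapping in your own citation history and claim costs is the point of the exercise; the defaults are only the example from the paragraph above.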

OSHA's Most Cited Standards — and How AI Addresses Each One

OSHA publishes its most-cited violations annually. For FY2023, the top 10 for construction were dominated by four categories. Here is how AI solutions map to each:

When AI in Construction is deployed at this level of specificity, safety teams shift from reactive fire-fighting to proactive oversight. One coordinator can effectively monitor what previously required three dedicated walkers on a large site.

How NeuraMonks Builds Compliance Intelligence for Contractors

NeuraMonks is not a generic SaaS vendor. As a specialized AI development company focused on computer vision and industrial AI, NeuraMonks designs compliance systems built around how construction sites actually operate — not how a software demo assumes they do.

The difference is meaningful. Off-the-shelf safety platforms apply generic models trained on warehouse or manufacturing footage. Construction environments are dynamic: lighting changes by the hour, crews rotate across zones, PPE varies by trade, and site layouts change weekly. Generic models produce false positives that crews learn to ignore — which is worse than having no system at all.

What NeuraMonks Delivers for Contractors

• Custom-trained vision models on your site footage — not generic datasets

• OSHA standard-specific detection logic (fall protection, scaffolding, struck-by)

• Integration with your existing camera infrastructure — no rip-and-replace

• Automated OSHA-ready documentation and incident audit trails

• Dashboard visibility for project owners, safety directors, and site supers — all in one place

A Field-Tested Deployment: From Pilot to Portfolio Rollout

The pattern we see consistently among U.S. contractors who adopt AI compliance systems follows three phases:

Phase 1 — Pilot Project (Weeks 1–6)

One active project is instrumented with AI cameras and the compliance engine. The team runs parallel operations: existing safety processes continue while AI data is collected. By week 4, the gap between what the human walkthroughs catch and what the AI detects is usually striking enough to build internal buy-in.

Phase 2 — Calibration & Integration (Weeks 6–12)

The AI models are refined based on actual site conditions. Alert thresholds are tuned to reduce noise. OSHA documentation workflows are connected to the platform. Safety coordinators shift from walkthroughs to monitoring and exception-handling.
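
One simple way to think about tuning alert thresholds is to take a batch of alerts the safety coordinator has already reviewed and pick the lowest confidence cut-off at which the surviving alerts are mostly real. The sketch below assumes each reviewed alert is a `(confidence, was_real_violation)` pair and that the team has chosen a target precision; both are illustrative assumptions rather than a documented calibration procedure.

```python
def pick_threshold(reviewed, target_precision=0.9):
    """Return the lowest confidence threshold whose alerts meet the target precision.

    reviewed: list of (confidence, was_real_violation) pairs from human review.
    Returns None if no threshold reaches the target.
    """
    candidates = sorted({confidence for confidence, _ in reviewed})
    for threshold in candidates:
        kept = [is_real for confidence, is_real in reviewed if confidence >= threshold]
        precision = sum(kept) / len(kept)  # kept is never empty: threshold is an observed value
        if precision >= target_precision:
            return threshold
    return None


# Hypothetical review results from a pilot week.
reviewed_alerts = [
    (0.95, True), (0.90, True), (0.85, True), (0.80, False),
    (0.70, True), (0.60, False), (0.55, False),
]
strict = pick_threshold(reviewed_alerts, target_precision=0.9)
relaxed = pick_threshold(reviewed_alerts, target_precision=0.8)
print(strict, relaxed)
```

A stricter target cuts noise but drops some genuine alerts (here the real violation at 0.70), so the choice is a trade-off the safety team should make deliberately rather than leave at a default.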

Phase 3 — Portfolio Expansion (Month 3+)

Once the pilot demonstrates a measurable reduction in near-miss events and citation risk, the same infrastructure is deployed across all active projects. The unit economics improve significantly at scale — the AI platform cost per project decreases while protection increases.

"By month three, our safety coordinator was managing compliance across four sites instead of one. The AI handled the constant monitoring. She handled the decisions."

— VP of Operations, Southeast commercial GC

Answering the Questions Safety Directors Actually Ask

Will crews resist the cameras?

Initial resistance is real but short-lived when the framing is right. The AI is not surveillance for discipline purposes — it is an early-warning system that protects workers. Most crews, once they understand the system flags risks before incidents happen, become advocates. Frame the rollout around worker protection, not compliance enforcement.

What happens when the AI produces a false positive?

Well-designed systems, like those built by NeuraMonks, include confidence thresholds and human-in-the-loop review for any alert that triggers documentation. Alerts are routed to the safety coordinator for confirmation rather than recorded automatically in OSHA logs, so a false positive is dismissed at review and never reaches the record. Each reviewed alert also feeds back into model training, so the system improves over time.
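
As a rough illustration of that review flow, the sketch below drops very low-confidence alerts, queues the rest for a human decision, and writes to the compliance log only after the coordinator confirms. The class and field names and the 0.5 cut-off are hypothetical; they show the shape of a human-in-the-loop gate, not NeuraMonks' actual implementation.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    kind: str          # e.g. "missing_hard_hat"
    confidence: float  # model confidence between 0 and 1


class ReviewQueue:
    def __init__(self, min_confidence=0.5):
        self.min_confidence = min_confidence
        self.pending = {}          # alerts waiting for a human decision
        self.compliance_log = []   # only human-confirmed alerts land here
        self.labels = []           # (alert_id, confirmed) pairs kept for retraining

    def submit(self, alert: Alert) -> bool:
        """Queue an alert for review; drop it if confidence is below the cut-off."""
        if alert.confidence < self.min_confidence:
            return False
        self.pending[alert.alert_id] = alert
        return True

    def review(self, alert_id: str, confirmed: bool) -> None:
        """Record the coordinator's decision; log the alert only if confirmed."""
        alert = self.pending.pop(alert_id)
        self.labels.append((alert_id, confirmed))
        if confirmed:
            self.compliance_log.append(alert)


queue = ReviewQueue()
queue.submit(Alert("a1", "missing_hard_hat", 0.92))
queue.submit(Alert("a2", "missing_vest", 0.30))      # below cut-off, dropped
queue.submit(Alert("a3", "zone_entry", 0.71))
queue.review("a1", confirmed=True)
queue.review("a3", confirmed=False)                  # false positive, never logged
logged_ids = [a.alert_id for a in queue.compliance_log]
print(logged_ids, queue.labels)
```

Keeping the reviewer's decisions in `labels` is what makes the feedback loop possible: dismissed alerts become negative examples the next time the model is refined.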

Do we need new cameras or infrastructure?

In most deployments, no new cameras are needed. AI compliance platforms integrate with existing IP camera networks, so if your sites already have CCTV for security, the same hardware can be repurposed. The main addition is an edge-compute device that processes video locally, so footage does not need to leave the site for analysis, which addresses both bandwidth and privacy concerns.

How long before we see measurable results?

Contractors typically see the first data within 48–72 hours of deployment. A reduction in near-miss events is usually measurable within 30 days. Documentation hours drop immediately. OSHA citation risk reduction is measurable after the first full audit cycle.

The Competitive Advantage You Are Not Talking About Yet

There is a dimension of this conversation that goes beyond penalty avoidance. Increasingly, large project owners and general contractors are asking subcontractors about their safety technology stack as a prequalification factor. EMR (Experience Modification Rate) is a direct input into bonding capacity and bid competitiveness.

Contractors who deploy AI solutions today are building a documented safety record that compounds over time — lower EMR, better bonding rates, access to larger projects, and a hiring advantage with safety-conscious workers. The compliance benefit is just the beginning.

Your Next OSHA Audit Doesn't Have to Be a Gamble

NeuraMonks engineers compliance intelligence built for how your crews actually work — not how consultants think they should.


The Competitive Advantage You Are Not Talking About Yet