
Nine clinical applications that are no longer experiments. They are running inside hospitals across the US, India, the UK, Mexico and emerging markets today.

Every 36 seconds, someone in the United States dies from cardiovascular disease. 422 million people worldwide live with diabetes. Healthcare systems from London to Lagos are stretched thin: too few specialists, too many patients, too little time per decision. AI in healthcare does not solve every part of that problem, but it addresses the most dangerous bottlenecks: the missed scan, the late sepsis flag, and the medication that should never have been prescribed. These nine use cases are where that shift is actually happening.

01 Early Disease Detection and Diagnostic Imaging

A 2020 study in Nature reported that an AI system read screening mammograms more accurately than a panel of six radiologists, reducing false negatives (missed cancers) by 9.4% on its US dataset. That single number represents a structural change in how diagnostic medicine works when AI is present. The technology no longer only assists specialists; in certain contexts, it outperforms them.

Today, AI tools read diabetic retinopathy from eye scans, surface lung nodules on CT scans within seconds of acquisition, and flag stroke-indicating anomalies in brain MRIs before a radiologist opens the queue. For hospitals in underserved regions — rural England, sub-Saharan Africa, tier-3 cities across South and Southeast Asia — where specialist coverage is thin, this kind of AI solution changes the clinical calculus entirely.

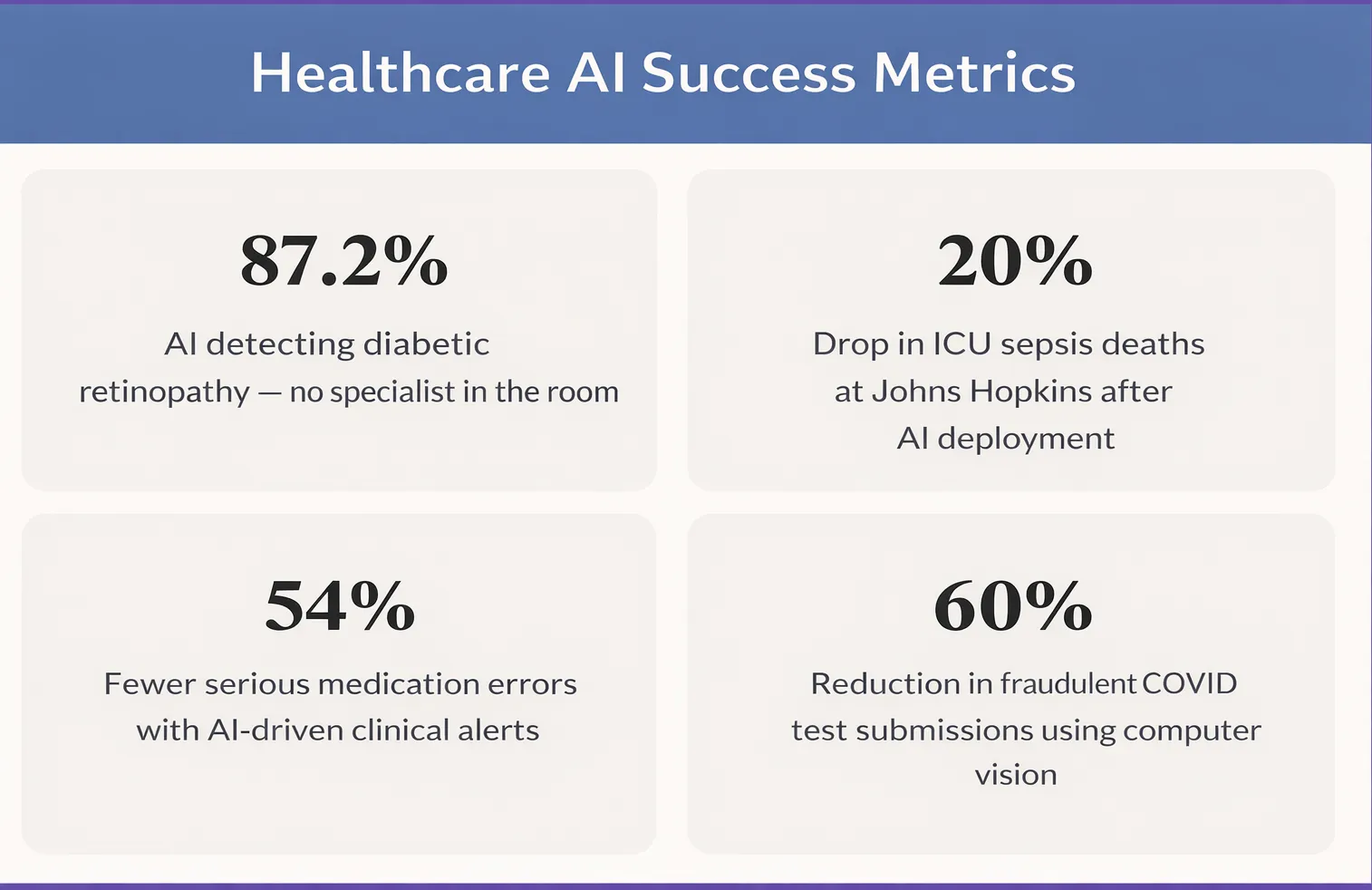

IDx-DR, the first FDA-authorised autonomous AI diagnostic system, detects diabetic retinopathy at 87.2% sensitivity without a specialist present. (Source: IDx-DR clinical data)

Faster turnaround. Fewer missed cases. Critical scans that automatically surface to the top of the queue. These are not incremental gains. They are fundamental changes to what a hospital can do with the staff it already has.
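The queue behaviour described above can be sketched as a priority queue. This is a minimal illustration, not any vendor's implementation: the `Worklist` class, the study IDs, and the `suspicion` score (assumed to be a model output between 0 and 1) are all hypothetical.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count


@dataclass(order=True)
class WorklistItem:
    priority: float                        # lower value is read sooner
    sequence: int                          # tie-breaker that preserves arrival order
    study_id: str = field(compare=False)


class Worklist:
    """Reading queue that surfaces scans with high AI suspicion scores first."""

    def __init__(self):
        self._heap = []
        self._arrival = count()

    def add(self, study_id: str, suspicion: float) -> None:
        # suspicion is a hypothetical model score in [0, 1]; negate it so the
        # most suspicious scan sits at the top of the min-heap.
        item = WorklistItem(-suspicion, next(self._arrival), study_id)
        heapq.heappush(self._heap, item)

    def next_study(self) -> str:
        return heapq.heappop(self._heap).study_id


worklist = Worklist()
worklist.add("CT-001", 0.12)
worklist.add("CT-002", 0.91)   # flagged as likely critical
worklist.add("CT-003", 0.12)
order = [worklist.next_study() for _ in range(3)]
print(order)
```

The arrival counter means two scans with the same score are still read in the order they were acquired, so routine studies are not starved by ties.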

02 Predicting Sepsis Before It Becomes Fatal

Sepsis kills more Americans annually than prostate cancer, breast cancer, and AIDS combined. Globally, it causes around 11 million deaths per year — the majority in low- and middle-income countries. The clinical challenge is that early sepsis looks ordinary: mild fever, elevated heart rate, slight fatigue. By the time it looks like sepsis, the window has often closed.

AI models trained on electronic health records — combining vital signs, lab results, nursing notes, and medication history — now flag high-risk patients up to six hours before deterioration is visible. Johns Hopkins deployed one such AI solution and recorded a 20% reduction in ICU sepsis mortality. Epic and Cerner, the two largest hospital software platforms globally, both include native AI-powered sepsis alerts. The infrastructure is already inside hundreds of hospitals. The question is how well the models are tuned to each institution's patient population.

Each hour of delay in treating sepsis has been associated with a mortality increase of roughly 7%. AI-driven early warning systems give clinical teams back those critical hours without requiring additional staff or equipment.

03 Catching Medication Errors Before They Reach the Patient

Medication errors harm approximately 1.5 million people in the United States alone every year. Globally, the WHO estimates that unsafe medication practices cause 1.3 million years of healthy life lost annually. Most of these errors are not caused by negligence — they happen because a nurse is managing eight patients at once, or a physician is entering orders under pressure, or a critical allergy note is buried three screens deep in a legacy system.

AI-powered clinical decision support catches what humans miss in those conditions:

- Drug-drug interaction alerts

- Dosage errors by weight or kidney function

- Allergy conflicts buried in old records

- Look-alike/sound-alike drug confusion

A JAMA study found AI-driven alerts reduced serious medication errors by 54% compared to older rule-based systems. The critical design shift was smarter alerting, not louder alerting — irrelevant alerts filtered out so clinicians stopped dismissing them.
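A minimal sketch of what "smarter, not louder" alerting could look like in code. It fires only for interactions that involve the order being entered and that meet a severity threshold. The knowledge base, the placeholder drug names, the severity scale, and the `relevant_alerts` function are assumptions made for illustration; they are not clinical data and not the design of any system cited here.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Interaction:
    pair: frozenset
    severity: int      # assumed scale: 1 = minor, 2 = moderate, 3 = severe
    message: str


# Placeholder knowledge base: names and severities are illustrative only.
KNOWLEDGE_BASE = [
    Interaction(frozenset({"drug_a", "drug_b"}), 3, "severe interaction"),
    Interaction(frozenset({"drug_a", "drug_c"}), 1, "minor interaction"),
]


def relevant_alerts(active_meds, new_order, min_severity=2):
    """Return alerts that involve the new order and meet the severity floor."""
    meds = set(active_meds) | {new_order}
    alerts = []
    for first, second in combinations(sorted(meds), 2):
        if new_order not in (first, second):
            continue  # pairs already on the chart were checked when they were ordered
        for item in KNOWLEDGE_BASE:
            if item.pair == frozenset({first, second}) and item.severity >= min_severity:
                alerts.append(item.message)
    return alerts


alerts = relevant_alerts(["drug_b", "drug_c"], "drug_a")
print(alerts)
```

Here the minor interaction is suppressed and only the severe one reaches the clinician, which is the filtering behaviour the paragraph above describes.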

04 Virtual Health Assistants and Patient Engagement

Not every life-saving moment happens inside an ICU. Many happen, or fail to happen, in the weeks between appointments. No-show rates for outpatient appointments run between 18% and 40% across healthcare systems globally. For patients managing diabetes or hypertension, a missed visit means missed labs, missed medication adjustments, and eventually a hospitalisation that was entirely avoidable.

AI-powered virtual assistants send personalised reminders via SMS or messaging apps, conduct pre-visit intake in multiple languages, follow up on discharge instructions, and answer common post-procedure questions around the clock. For patients in underserved communities without easy access to primary care, a 24/7 AI triage tool often provides the only immediate clinical guidance available.

AI consulting services are increasingly helping health systems design patient engagement workflows — not just deploy tools. The difference between a chatbot and an effective patient journey is the clinical logic built around it.

05 Computer Vision in Clinical Assessment

Some of the most significant advances in AI in healthcare are happening in areas that rarely make headlines: the routine measurements that clinical teams repeat hundreds of times a week. Wound assessment is one of them. Traditional ruler-based wound measurement is time-consuming, subjective, and varies significantly between clinicians — limiting a hospital's ability to track healing consistently or compare outcomes across facilities.

Deep learning systems now perform this work from a standard smartphone photograph. Computer vision models segment the wound boundary, detect a calibration marker, correct for camera angle and perspective, and output clinically accurate centimetre-based measurements of area, perimeter, width, and height — in seconds, without manual intervention.
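The pixel-to-centimetre step can be sketched as follows. This assumes the segmentation model has already produced binary masks for the wound and the calibration marker, and that perspective has already been corrected; perimeter is omitted for brevity. The function names and the mask representation (lists of 0/1 rows) are hypothetical.

```python
def pixels_per_cm(marker_mask, marker_area_cm2):
    """Estimate image scale from a calibration marker of known physical area."""
    marker_pixels = sum(sum(row) for row in marker_mask)
    if marker_pixels == 0:
        raise ValueError("calibration marker not found")
    # pixels per cm^2, then square root to get pixels per cm
    return (marker_pixels / marker_area_cm2) ** 0.5


def wound_measurements(wound_mask, scale):
    """Area plus bounding-box width and height, in cm, from a binary mask."""
    rows = [r for r, row in enumerate(wound_mask) if any(row)]
    cols = [c for row in wound_mask for c, value in enumerate(row) if value]
    if not rows:
        return {"area_cm2": 0.0, "width_cm": 0.0, "height_cm": 0.0}
    area_px = sum(sum(row) for row in wound_mask)
    return {
        "area_cm2": area_px / scale ** 2,
        "width_cm": (max(cols) - min(cols) + 1) / scale,
        "height_cm": (max(rows) - min(rows) + 1) / scale,
    }


# Toy example: a 4 cm^2 marker covering 16 pixels gives 2 pixels per cm.
marker_mask = [[1] * 4 for _ in range(4)]
wound_mask = [
    [0] * 8,
    [0] + [1] * 6 + [0],
    [0] + [1] * 6 + [0],
    [0] * 8,
]
scale = pixels_per_cm(marker_mask, marker_area_cm2=4.0)
result = wound_measurements(wound_mask, scale)
print(result)
```

Because the scale comes from the marker in the same photograph, the measurement does not depend on how far the phone was held from the wound.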

Neuramonks Case Study

Automated Wound Detection & Measurement System

A healthcare client needed to replace subjective, ruler-based wound measurements with a standardised, scalable system. Neuramonks built an end-to-end AI pipeline using Attention U-Net deep learning segmentation combined with a green calibration marker for real-world scale reference. The system delivers wound measurements with under 5% error compared to expert manual assessment — and works from standard RGB images, making it viable for both clinical and remote monitoring settings.

- 55–65% reduction in clinician measurement time

- 30–40% improvement in cross-clinician consistency

- < 5% error vs expert manual measurements

See the full case study →

The same computer vision approach applies across a wide range of clinical imaging tasks — from surgical site monitoring to dermatology screening — wherever visual assessment has historically depended on a clinician being physically present.

06 Pathology Automation and Diagnostic Verification

Laboratory medicine is one of the most data-intensive disciplines in healthcare and one of the most dependent on human visual analysis. A trained haematology technician reads blood smears for hours, looking for abnormal cells, infection markers, and parasitic invasion. The work is skilled, repetitive, and subject to fatigue-related error — particularly in high-volume labs in tropical regions where malaria and other parasitic infections drive enormous diagnostic demand.

AI in healthcare is automating this pipeline end-to-end: detecting, classifying, and counting blood cells from microscopic images with speed and consistency no human team can match at scale.
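Once cells have been detected and classified, the counting step is simple arithmetic. The sketch below assumes an upstream model that emits one label per detected cell; the label names and the `summarise_detections` function are hypothetical. Parasitaemia is computed here as the percentage of red blood cells that are infected.

```python
from collections import Counter


def summarise_detections(labels):
    """Count detections per class and compute parasitaemia as a percentage."""
    counts = Counter(labels)
    total_rbc = counts["rbc"] + counts["infected_rbc"]
    parasitaemia = 100.0 * counts["infected_rbc"] / total_rbc if total_rbc else 0.0
    return counts, parasitaemia


# Toy output from one microscope field (labels are assumed, not a real schema).
labels = ["rbc"] * 45 + ["infected_rbc"] * 5 + ["wbc"] * 3 + ["platelet"] * 7
counts, parasitaemia = summarise_detections(labels)
print(dict(counts), parasitaemia)
```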

Neuramonks Case Study

AI-Powered Blood Cell and Malaria Detection System

A diagnostics client serving high-incidence regions needed to reduce the bottleneck of manual blood smear analysis. Neuramonks delivered an end-to-end deep learning system that performs instance segmentation of red blood cells, white blood cells, and platelets — including a dedicated module for detecting Plasmodium-infected RBCs. The system handles overlapping and clustered cells and runs from image ingestion to results in seconds, compatible with existing microscopy and laboratory information systems.

- 50–60% reduction in technician analysis time

- 30–35% improvement in diagnostic consistency

- 25–40% fewer missed malaria cases

See the full case study →

Beyond malaria, the same architecture applies to haematological cancers, anaemia screening, and platelet disorder diagnosis. AI consulting services built around these systems help labs define the right model architecture, training data strategy, and deployment approach for their specific clinical environment.

The same principle of AI-driven authenticity and verification extends to public health programmes. When Corona Test UK needed to verify COVID test results for airline passengers at scale — preventing manipulation and fraud while maintaining speed — a computer vision pipeline was the only viable answer at that volume.

07 AI-Powered Clinical Decision Support for Glaucoma Management

Glaucoma is the leading cause of irreversible blindness globally, affecting over 80 million people. Yet diagnosis requires analysing a web of complex variables — intraocular pressure history, optic nerve morphology, visual field progression, patient age, and longitudinal trends across dozens of visits. Even experienced ophthalmologists face diagnostic ambiguity when these signals conflict.

A standard prediction model is not sufficient for this clinical environment. What ophthalmologists need is a system that separates initial diagnosis from validation — so that confidence is explicitly scored and every recommendation is independently verified before it influences a treatment plan.

Case Study: AI Clinical Decision Support — Glaucoma Management Platform — Neuramonks

Neuramonks built a multi-agent clinical decision support platform for ophthalmologists, using a dual-agent architecture on Deerflow. A Diagnostic Agent processes patient data against a large historical repository to stage disease and generate tailored follow-up recommendations. A Validation Agent independently reviews each recommendation and outputs a transparent confidence score out of 10. The two agents operate in a self-correcting workflow — the Validator is explicitly prompt-engineered to score the logic of the recommendation rather than simply confirm it.

- < 60 s end-to-end analysis per patient, including dual-agent validation

- Transparent confidence score out of 10 for every recommendation

- 80+ visit longitudinal records processed without latency issues

Key engineering challenges solved

- Diagnostic ambiguity: when patient data is incomplete, the system generates multiple clinical scenarios rather than forcing a single conclusion

- Outlier safety: when a patient profile doesn't match the historical repository, the system triggers a Low Confidence fallback and routes the case directly to the ophthalmologist

- Longitudinal data retrieval: a custom search optimisation algorithm within Deerflow parses dense multi-decade records and returns verified results in under a minute

- Multi-agent synchronisation: context window fine-tuned to ensure the Validation Agent stays objective and doesn't simply echo the Diagnostic Agent's output
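The diagnose, validate, and fall-back flow described above can be sketched in a few lines. This is an illustration under stated assumptions, not the Neuramonks implementation and not the Deerflow API: the two agents are plain callables, the stub agents stand in for real models, and the threshold of 7 out of 10 is invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Review:
    recommendation: str
    confidence: int          # validator score out of 10
    needs_clinician: bool    # True when the Low Confidence fallback fires


def run_case(patient, diagnose, validate, min_confidence=7):
    """Run the diagnostic agent, then have the validator score it independently."""
    recommendation = diagnose(patient)
    # The validator receives the patient data and the recommendation only,
    # so it has to judge the logic rather than echo the first agent.
    confidence = validate(patient, recommendation)
    if not 0 <= confidence <= 10:
        raise ValueError("validator must return a score from 0 to 10")
    return Review(recommendation, confidence, confidence < min_confidence)


# Stub agents standing in for real models (hypothetical behaviour).
def stub_diagnose(patient):
    return "follow-up plan A"


def stub_validate(patient, recommendation):
    return 4 if patient.get("atypical") else 9


typical = run_case({"atypical": False}, stub_diagnose, stub_validate)
atypical = run_case({"atypical": True}, stub_diagnose, stub_validate)
print(typical)
print(atypical)
```

Keeping the fallback as an explicit flag on the result means a low score never silently drops the case; it is always routed to the ophthalmologist with the score attached.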

08 AI-Powered Dental Bite Classification from 3D Scans

Orthodontic diagnosis from 3D dental scans (STL files) has historically been manual, time-consuming, and highly dependent on the individual clinician's experience. In real clinical settings, patients rarely present with a single bite problem — a typical complex case may combine a Class II malocclusion with a Deep Bite and a Scissors Bite simultaneously. Identifying all co-occurring conditions consistently, across clinicians, is precisely the kind of problem where AI delivers structural value.

The system Neuramonks built goes beyond binary classification. It stages the primary bite category, detects all co-occurring conditions, validates that the detected combination is medically consistent, and outputs a ranked result with a confidence level per finding — all from a single STL upload.

Case Study: AI Dental Bite Classification System — Neuramonks

Neuramonks trained a multi-label classification model on 800+ real 3D dental scans, each verified by certified orthodontists, covering both simple and complex real-world cases including rare bite combinations. The system analyses the 3D geometry of a patient's dental scan, identifies the primary bite class (Class I, II div 1, II div 2, or III), flags all co-occurring conditions (Cross Bite, Open Bite, Deep Bite, Scissors Bite), validates the medical plausibility of the detected combination, and outputs a structured, confidence-ranked result — in seconds.

- 800+ verified 3D dental scans used for training

- 4 + 4: primary bite classes plus co-occurring conditions detected simultaneously

- 100% medically validated output combinations, with no anatomically impossible results

Example output — single patient scan

- Primary diagnosis: Class II div 1 (Very High Confidence)

- Co-occurring condition: Deep Bite (High Confidence)

- Co-occurring condition: Scissors Bite (Good Confidence)

Clinical workflow

- The orthodontist uploads the patient's STL file into the system

- AI analyses 3D geometry, identifies the primary class and all co-occurring conditions

- Medical plausibility check validates the detected combination before the output is surfaced

- Structured, confidence-ranked result is presented instantly — used for treatment planning, patient communication, and cross-clinician consistency
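The post-processing half of this workflow (pick the primary class, threshold the co-occurring conditions, check plausibility, rank by confidence) can be sketched as below. Everything here is an assumption for illustration: the probabilities are made up, the confidence bands and the 0.6 threshold are invented, the single incompatibility rule (Open Bite with Deep Bite) is a stand-in for a real rule set, and dropping the weaker finding is just one possible way to resolve a conflict.

```python
# Assumed plausibility rule for illustration; a real rule set would come
# from orthodontists.
INCOMPATIBLE = [{"Open Bite", "Deep Bite"}]


def confidence_label(probability):
    """Map a probability to a label (the bands are assumptions)."""
    if probability >= 0.9:
        return "Very High"
    if probability >= 0.75:
        return "High"
    if probability >= 0.6:
        return "Good"
    return "Low"


def interpret(primary_probs, condition_probs, threshold=0.6):
    """Turn raw model scores into a plausibility-checked, ranked result."""
    primary = max(primary_probs, key=primary_probs.get)
    detected = {c: p for c, p in condition_probs.items() if p >= threshold}
    for pair in INCOMPATIBLE:
        if pair.issubset(detected):
            # Assumed resolution strategy: keep the stronger finding.
            del detected[min(pair, key=detected.get)]
    ranked = sorted(detected.items(), key=lambda kv: kv[1], reverse=True)
    findings = [(primary, primary_probs[primary])] + ranked
    return [(name, confidence_label(p)) for name, p in findings]


primary_probs = {"Class I": 0.03, "Class II div 1": 0.93,
                 "Class II div 2": 0.03, "Class III": 0.01}
condition_probs = {"Cross Bite": 0.10, "Open Bite": 0.62,
                   "Deep Bite": 0.82, "Scissors Bite": 0.66}
report = interpret(primary_probs, condition_probs)
print(report)
```

With these made-up scores, the weaker of the two conflicting findings is dropped and the remaining result mirrors the structure of the example output shown earlier.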

09 Drug Discovery and Clinical Trial Access

Bringing a new drug to market costs roughly $2.6 billion and takes ten to fifteen years. Most candidates fail in late-stage trials after years of investment. AI is compressing both the timeline and the failure rate: protein structure prediction now provides predicted structures for millions of proteins, generative models propose novel molecular compounds with target properties, and biomarker analysis helps predict which patients will respond to which therapies before the first dose.

Insilico Medicine identified a novel drug candidate for idiopathic pulmonary fibrosis in eighteen months — a process that would typically take four to five years. The compound entered Phase 2 clinical trials in 2023.

For patients with rare diseases or treatment-resistant cancers, clinical trial matching is often the most immediately practical AI application. These tools scan a patient's electronic record against thousands of active trial eligibility criteria in real time — surfacing opportunities that would require weeks of manual research by a specialist coordinator. For patients who have exhausted standard options, it can be the difference between access and invisibility.
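At its core, trial matching is a filter over structured eligibility criteria. The sketch below is deliberately simplified: real criteria are mostly free text and far richer than three fields. The `Trial` fields, the patient record layout, and all identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    trial_id: str
    condition: str
    min_age: int
    max_age: int
    excluded_medications: frozenset = frozenset()


def eligible_trials(patient, trials):
    """Return IDs of trials whose (simplified) criteria the patient meets."""
    matches = []
    for trial in trials:
        if trial.condition not in patient["conditions"]:
            continue
        if not trial.min_age <= patient["age"] <= trial.max_age:
            continue
        if trial.excluded_medications & set(patient["medications"]):
            continue  # patient takes a medication the trial excludes
        matches.append(trial.trial_id)
    return matches


trials = [
    Trial("TRIAL-1", "condition_x", 18, 65),
    Trial("TRIAL-2", "condition_x", 18, 65, frozenset({"drug_a"})),
    Trial("TRIAL-3", "condition_y", 18, 80),
    Trial("TRIAL-4", "condition_x", 60, 90),
]
patient = {"age": 52, "conditions": {"condition_x"}, "medications": ["drug_a"]}
matches = eligible_trials(patient, trials)
print(matches)
```

Running every patient record through a filter like this is cheap, which is why it can be done continuously instead of waiting for a coordinator to search by hand.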

Your clinical AI project starts with a clear problem statement.

The gap between knowing AI can help and actually deploying something that works in a clinical environment is where most projects stall. It is not a technology problem. It is a scoping problem, a data problem, or a workflow problem — and usually some combination of all three.

Neuramonks works with healthcare organisations at whatever stage they have reached. Some come with a clear brief and need a team to build it. Others have a clinical problem they are not sure AI can solve and want an honest conversation before spending anything. Others are partway through a deployment that has lost momentum and need someone to pick it up.

We design and deliver AI solutions purpose-built for healthcare: diagnostic imaging, pathology automation, wound assessment, clinical decision support, and more. Whether you are evaluating a first pilot or scaling across a hospital network, we help you move from problem to measurable outcome.

Reach out to the Neuramonks team here.

A free discovery call. No pitch, no deck. Bring the problem and we will give you a straight answer on what is feasible, what is not, and what it would realistically take.