Understanding Responsible AI Deployment & Why the Most Powerful Models Stay Behind Closed Doors

There are moments in AI development when the responsible choice is not to release a capability into the world, but to contain it. Project Glasswing, announced by Anthropic in April 2026, represents precisely this moment, and it clarifies why Claude Mythos Preview, despite being Anthropic's most powerful unreleased model, will remain restricted to a carefully governed coalition rather than released to the general public.

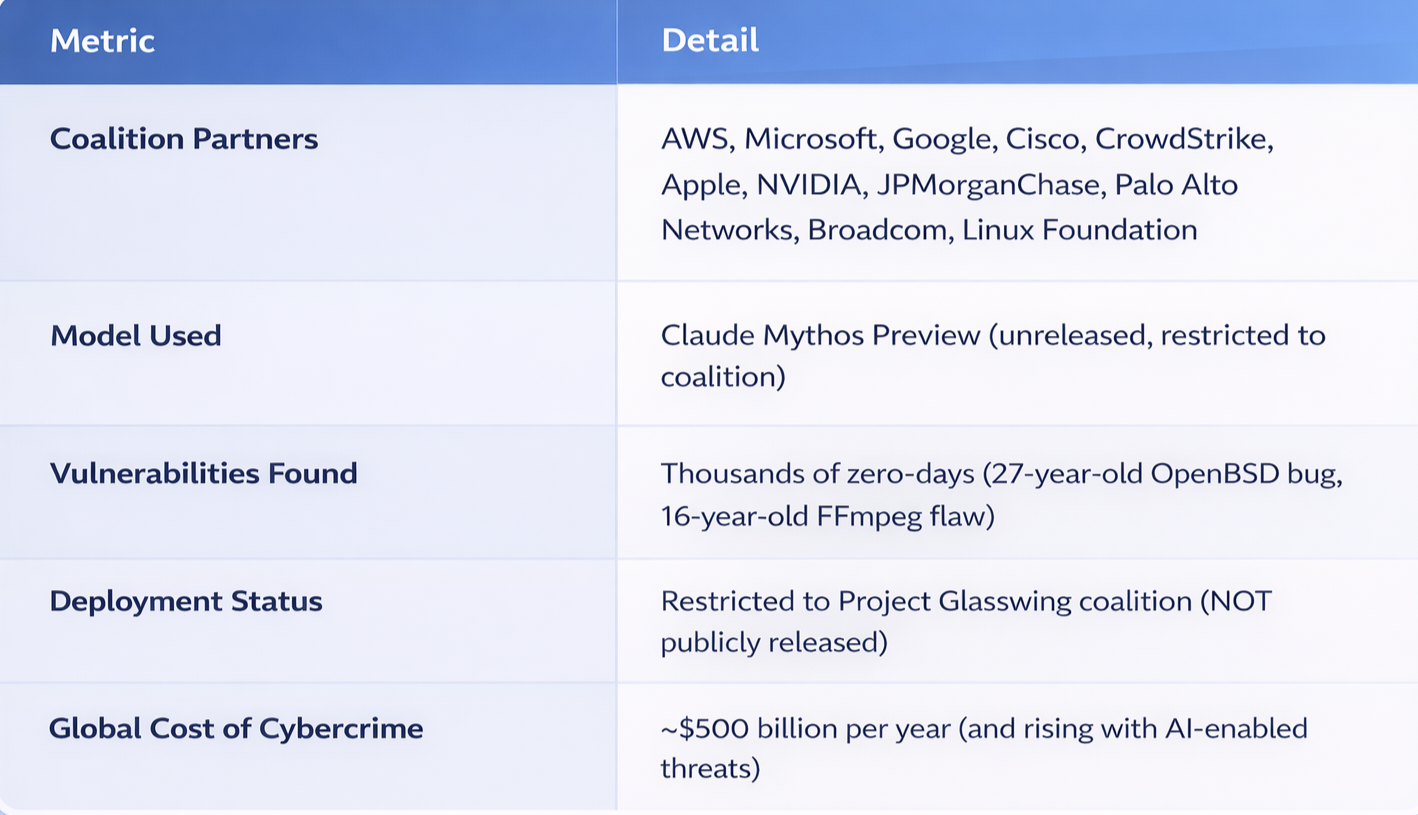

What started as an internal AI safety initiative evolved into Project Glasswing, a cross-industry AI cybersecurity coalition that includes Amazon Web Services, Microsoft, Google, Cisco, CrowdStrike, Apple, NVIDIA, JPMorganChase, Palo Alto Networks, Broadcom, and The Linux Foundation. Glasswing came first. Claude Mythos Preview was deployed within Glasswing's structure second. That sequence is intentional, and it explains why this model will not be freely available.

Whether your company operates in San Francisco, Chicago, Bangalore, or Hyderabad, this decision --- to restrict rather than release --- has direct implications for your security posture and your AI strategy.

Project Glasswing Came First: The Governance Framework Before the Model

Project Glasswing is Anthropic's structured initiative to deploy frontier AI capabilities for defensive cybersecurity within a coordinated, accountable coalition. Named after the glasswing butterfly, whose transparent wings let it hide in plain sight much as software vulnerabilities do, the project brings together the world's leading technology companies under one shared mission: use AI to find software flaws before attackers do.

The critical point: Anthropic built the coalition, the governance structure, and the accountability framework first. Only then was Claude Mythos Preview deployed within it.

At the core is Claude Mythos Preview, a general-purpose, unreleased frontier LLM that has already demonstrated the ability to identify zero-day vulnerabilities in every major operating system and web browser. In independent tests, Mythos Preview:

- Found a 27-year-old bug in OpenBSD

- Found a 16-year-old vulnerability in FFmpeg that had survived five million automated test cycles

- Chained multiple Linux kernel flaws to escalate user access to full machine control

These were not theoretical exercises. These were real flaws in systems that run banks, hospitals, government infrastructure, and supply chains globally. And a model that can find these flaws faster than any human or existing automated system poses a problem if released without guardrails.

Why Claude Mythos Won't Be Released to the Public

The same capabilities that make Claude Mythos exceptional for defensive cybersecurity make it dangerous if deployed indiscriminately. Here's why Anthropic has chosen containment over release:

1. Offensive Capability Asymmetry

A model that can autonomously find and exploit vulnerabilities across every major operating system on Earth is a dual-use technology in the most literal sense. The same agentic reasoning that locates zero-days can be weaponized by state actors, criminal organizations, or any individual willing to skip the accountability layer. Releasing Mythos to the public would be equivalent to distributing advanced exploit development tools to billions of people worldwide.

Cybercrime costs the global economy approximately $500 billion per year. That figure could rise sharply if a frontier AI model capable of autonomously discovering critical vulnerabilities became widely available, which is why Anthropic keeps the model restricted.

2. Disclosure Coordination Requires Governance Structure

When a vulnerability is found, the question of when and how it is disclosed determines whether patches reach systems before or after attackers exploit them. Finding a 27-year-old bug in OpenBSD is only useful if the bug is fixed before the world learns about it. The same applies to every zero-day Mythos discovers.

If Claude Mythos were publicly available, coordinated disclosure would become impossible. The first independent user to find a critical vulnerability could:

- Sell it on the dark web

- Use it for offensive operations

- Share it with adversaries

Project Glasswing prevents this by concentrating the findings within a coalition where The Linux Foundation, AWS, Microsoft, Google, and other trusted organizations control the flow of information and coordinate patches before public disclosure.
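The disclosure rule described above can be sketched as a simple gate: a finding stays private until a patch has shipped or an embargo window has run out. This is a minimal illustration, not Glasswing's actual process. The `Finding` class, the `may_disclose` function, and the 90-day window are all assumptions made for the example (90 days is a common industry convention, not a figure from Anthropic).

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical embargo length; real programs negotiate their own deadlines.
EMBARGO_DAYS = 90

@dataclass
class Finding:
    component: str
    reported_on: date
    patch_released_on: Optional[date] = None

def may_disclose(finding: Finding, today: date) -> bool:
    """Allow public disclosure once a patch has shipped or the embargo has lapsed."""
    if finding.patch_released_on is not None and finding.patch_released_on <= today:
        return True
    return today >= finding.reported_on + timedelta(days=EMBARGO_DAYS)

finding = Finding(component="example-lib", reported_on=date(2026, 4, 1))
print(may_disclose(finding, date(2026, 4, 15)))  # False: no patch, embargo still running
finding.patch_released_on = date(2026, 4, 20)
print(may_disclose(finding, date(2026, 4, 21)))  # True: the patch has shipped
```

The point of the gate is ordering: the fix reaches users first, and the details follow.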

3. Open-Source Ecosystem Protection

The Linux Foundation is a core partner in Glasswing precisely because the vulnerabilities Mythos finds often exist in open-source codebases that billions of people depend on. If the model were public, every malicious actor with technical skill would use it to scan the same repositories looking for exploitable flaws.

By keeping Mythos restricted to Glasswing, Anthropic ensures that vulnerability discoveries flow through coordinated channels and that fixes reach the open-source community before threats materialize. This is structural cybersecurity --- not a restriction on innovation, but a prerequisite for public safety.

4. The Offensive-Defensive Gap Collapses Instantly with Public Release

For now, defenders have a head start. Glasswing's coalition has access to Mythos's capabilities weeks or months before any attacker independently discovers similar techniques. That window is what allows patches to reach systems before exploits do.

The moment Mythos becomes publicly available, that advantage evaporates. Attackers gain the same autonomous vulnerability-finding capability that defenders do. The asymmetry reverses. And because there are more malicious actors than well-resourced security teams, the defensive effort loses.

The Sequence Matters: Why Glasswing Had to Come First

Anthropic did not build Mythos Preview and then figure out what to do with it. They built the coalition, the structure, and the accountability framework first --- and then deployed the model inside it. That order is the entire point.

For most of AI's history, powerful capabilities were released first and consequences were managed afterward. The internet was deployed before misinformation frameworks existed. Social media scaled before anyone understood its effects on public discourse. Large language models were released to the public before their systemic harms were well understood.

With Claude Mythos, Anthropic inverted that pattern. They asked: "What structure must exist for this capability to be deployed responsibly?" The answer was Project Glasswing. Only after that structure was built was the model deployed within it.

This means:

- Findings flow through coordinated disclosure protocols

- The Linux Foundation is a core partner, ensuring fixes reach open-source ecosystems

- No single company controls the output; accountability is distributed

- The model's power is matched by the responsibility of the structure around it

What This Means for Enterprises in 2026

If you lead technology, security, or operations at an enterprise --- whether in New York, Austin, Mumbai, or Pune --- here is the practical reality:

- Your attack surface is larger than any manual team can cover. The world's largest technology companies are actively deploying frontier AI vulnerability detection.

- Open-source dependencies are a critical risk vector. Project Glasswing is directly funding security work on the codebases your infrastructure depends on.

- AI in healthcare, finance, and logistics creates regulatory compliance risk. As AI embeds deeper into critical systems, security standards must rise proportionally.

- Cloud providers are integrating advanced security capabilities. Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry now provide access to enterprise-grade AI security tools.

Agentic AI & LLMs: Why Frontier Models Require Careful Deployment

What makes Claude Mythos fundamentally different from conventional security tools is its agentic AI architecture. Agentic AI refers to systems that can autonomously plan, execute, and iterate across multi-step tasks without human steering at each stage.

Rather than simply flagging suspicious patterns, Mythos reads entire codebases, reasons about logic flows, chains vulnerabilities together, and develops working exploits --- all on its own. This agentic capability is precisely why it found vulnerabilities that survived decades of human review and millions of automated tests.
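The plan, execute, and iterate cycle that defines agentic systems can be sketched in a few lines. This is a toy illustration of the general pattern only, not how Mythos works internally. The `run_agent` function, the `toy_plan` planner, and the tool names are all hypothetical; a real system would call a model to choose each step and real tooling to carry it out.

```python
from typing import Callable, Dict, List

def run_agent(goal: str,
              plan: Callable[[str, List[str]], str],
              tools: Dict[str, Callable[[], str]],
              max_steps: int = 5) -> List[str]:
    """Repeat plan -> act -> observe until the planner says 'done' or steps run out."""
    history: List[str] = []
    for _ in range(max_steps):
        action = plan(goal, history)       # decide the next step from what is known so far
        if action == "done":
            break
        observation = tools[action]()      # execute the chosen tool
        history.append(f"{action}: {observation}")
    return history

# Toy stand-ins for a model-driven planner and real tooling.
def toy_plan(goal: str, history: List[str]) -> str:
    order = ["read_code", "run_tests"]
    return order[len(history)] if len(history) < len(order) else "done"

toy_tools = {
    "read_code": lambda: "parsed 3 files",
    "run_tests": lambda: "1 failing test",
}

for line in run_agent("review module", toy_plan, toy_tools):
    print(line)
```

The `max_steps` cap is one small example of a guardrail: the loop stops even if the planner never decides it is done.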

On benchmark evaluations like SWE-bench Verified, Mythos Preview scored 93.9% --- the highest ever recorded for any model --- demonstrating that its underlying LLM capabilities extend well beyond simple question-answering into deep, autonomous software reasoning.

Precisely because of these capabilities, the model cannot be publicly released without unacceptable security risks. The power to autonomously exploit systems must be paired with governance structures that prevent misuse. Project Glasswing is that structure.

MCP Servers: The Hidden Infrastructure Enabling Secure Deployment

For Claude Mythos Preview to perform vulnerability detection at the depth it does --- scanning live codebases, navigating private repositories, querying internal toolchains --- it needs more than intelligence. It needs secure, structured access to external systems.

This is where the Model Context Protocol (MCP), developed by Anthropic, becomes critical. MCP is an open protocol that defines how AI models connect to external tools, data sources, and secure environments. Think of MCP servers as the intelligent connectors that allow a model like Claude to safely interact with your company's private codebase, internal databases, security scanners, or enterprise APIs --- without exposing raw credentials or unstructured data to the model directly.

MCP servers are also part of how Mythos can be deployed within Glasswing without leaking sensitive vulnerability data outside the coalition. Routing tool access through a defined protocol makes it easier to keep findings structured, auditable, and controlled.

NeuraMonks: AI Development Company Building Tomorrow's Autonomous Systems

At NeuraMonks, we design and deploy agentic AI workflows that help enterprises across the US and India automate complex operations securely. While Anthropic deploys frontier models within carefully governed coalitions, NeuraMonks builds the same class of intelligent, multi-step autonomous workflows for your business infrastructure. Whether you need agentic AI development for code review, document intelligence, data processing, or security automation, we architect these systems with governance and accountability built in from the start. We also specialize in MCP Server Development, creating the custom connectors that allow frontier AI models to securely interact with your company's private tools, data environments, and software infrastructure.

The principle behind Glasswing --- structure before scale, governance before deployment --- is how we approach every AI system we build. We design the accountability layer in from the beginning: access controls, output validation, human review checkpoints, and audit trails. Because the right order matters just as much as the right model.
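The four pieces of that accountability layer can be sketched as a single wrapper around any automated task. This is a simplified illustration under stated assumptions, not a production design: `governed_run`, the role name, and the in-memory `audit_log` are hypothetical, and the human reviewer is represented by an `approve` callback.

```python
from typing import Callable, List, Set

audit_log: List[str] = []                         # audit trail (in memory for this sketch)
ALLOWED_ROLES: Set[str] = {"security-engineer"}   # access control (hypothetical role name)

def governed_run(user_role: str,
                 task: Callable[[], str],
                 approve: Callable[[str], bool]) -> str:
    """Run `task` only for permitted roles, validate its output, and require sign-off."""
    if user_role not in ALLOWED_ROLES:
        audit_log.append(f"denied role={user_role}")
        raise PermissionError(user_role)
    output = task()
    if not output.strip():                        # output validation: reject empty results
        audit_log.append("rejected empty output")
        raise ValueError("empty output")
    if not approve(output):                       # human review checkpoint
        audit_log.append("held for review")
        return "pending"
    audit_log.append("released")
    return output

result = governed_run("security-engineer", lambda: "report: 2 issues", lambda out: True)
print(result)
print(audit_log)
```

Every path through the wrapper writes to the log, including refusals, so the record shows what was blocked as well as what was released.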

The US-India Context: Two Economies at the Center of This Shift

For US-based businesses, Anthropic's decision to restrict Claude Mythos is both a warning and a competitive signal. The US government has been briefed on Mythos's capabilities. Anthropic has stated explicitly that the US and its allies must maintain a decisive lead in frontier AI --- and that Project Glasswing is part of ensuring democratic institutions do not fall behind state-sponsored actors. Releasing the model to the public would directly undermine that strategic advantage.

For Indian enterprises, the stakes are equally high. India's digital economy is expanding rapidly, with businesses across BFSI, healthcare, and manufacturing adopting AI solutions at scale. India is also one of the world's largest hubs for software development and open-source contribution --- the same codebases Glasswing is now scanning for vulnerabilities are being written and maintained in part by Indian engineers.

For both markets, the message is clear: the most powerful AI models will be deployed through carefully structured coalitions, not released to the general public. Cybersecurity posture, regulatory compliance, and competitive positioning now depend on understanding this new reality.

Building Secure AI Systems for the Restricted-Model Era

At NeuraMonks, we recognize that the frontier of AI is no longer about building models --- it's about building the governance structures that allow powerful models to be deployed responsibly. The model era is becoming the coalition era.

As enterprises across the US and India navigate this transition, the question is not whether to use frontier AI. Project Glasswing has already made that decision for you. The question is how to integrate these capabilities into your security and operational posture while maintaining the same governance standards.

We work across these layers:

- Agentic AI development for autonomous workflows

- LLM integration for enterprise AI products

- MCP server development for secure model integration

- AI security and governance frameworks

Ready to Build Secure AI Systems?

The AI era demands more than automation --- it demands intelligence built securely from the ground up. Frontier models like Claude Mythos are being deployed through structured coalitions.

Your business needs to be ready. NeuraMonks is an AI development company that helps enterprises across the US and India design, develop, and deploy intelligent systems that are both powerful and accountable.