When the market is moving this fast, the hardest thing for a technology leader to say is: "I'm not sure this spend is justified."

AI investment crossed $200 billion globally in 2024. GPU clusters have had multi-week waitlists. Every major cloud vendor has doubled its AI services portfolio. And yet, according to McKinsey, only 31% of enterprise AI deployments report a clear, measurable return on investment.

That gap is worth sitting with. Not to dismiss AI (the technology is genuinely transformative when deployed correctly), but to ask the question that separates thoughtful infrastructure decisions from momentum-driven ones:

Are we spending on AI because it solves a specific problem we have, or because everyone else seems to be spending on AI?

This piece is for the CTO, the VP of Engineering, the founder who is fielding three vendor pitches a week and trying to figure out which ones are worth a second conversation. It is not a debate about whether AI works. It is a framework for deciding where it earns its cost.

The Bubble Is Real, Just Not Where Most People Think

Bubble dynamics in technology rarely mean the underlying technology is worthless. The dot-com bust did not disprove the internet. What it destroyed were companies building on top of hype without a path to revenue. The same pattern is visible in parts of the AI landscape today.

Where the bubble is most inflated:

- Foundation model startup valuations — many are priced on potential, not on revenue or margin

- Generative AI SaaS tools — a crowded market where differentiation is thin and churn is high

- GPU compute contracts — locked in at 2024 peak pricing before commodity correction arrived

- Custom LLM projects sold by consultancies at $300K+, where a $40K integration would do the same job

Where the bubble is not:

- AI applied to a specific, measurable process: document processing, scheduling, customer communication, anomaly detection

- Automation infrastructure connecting AI capabilities to systems you already run

- Computer vision in environments where human inspection is slow, expensive, or inconsistent

The difference between these two categories is not the sophistication of the technology. It is the presence or absence of a defined business outcome before the first line of code is written.

How Infrastructure Spend Goes Wrong: The Three Failure Modes

Most AI infrastructure investments do not fail because of technical problems. They fail for one of three reasons:

1. Capability-first procurement

A vendor demonstrates what AI can do and the organisation buys the capability without first identifying the problem it is solving. The result is infrastructure that impresses in demos and sits underused in production.

2. Complexity inflation

Vendors have a structural incentive to propose more complex solutions. A custom-trained model is a bigger contract than an API integration. A bespoke data pipeline is a bigger engagement than a well-configured automation workflow. Complexity is often sold as quality when it is actually just cost.

3. Underestimating adoption cost

Technology adoption in organisations is not primarily a technical challenge; it is a change management challenge. The most common cause of AI project failure is not that the model performs poorly. It is that no one changed the workflow around it. Budgets routinely treat development as 100% of implementation cost, when in practice around 30% of the spend goes toward adoption.

What Good AI Infrastructure Investment Looks Like in Practice

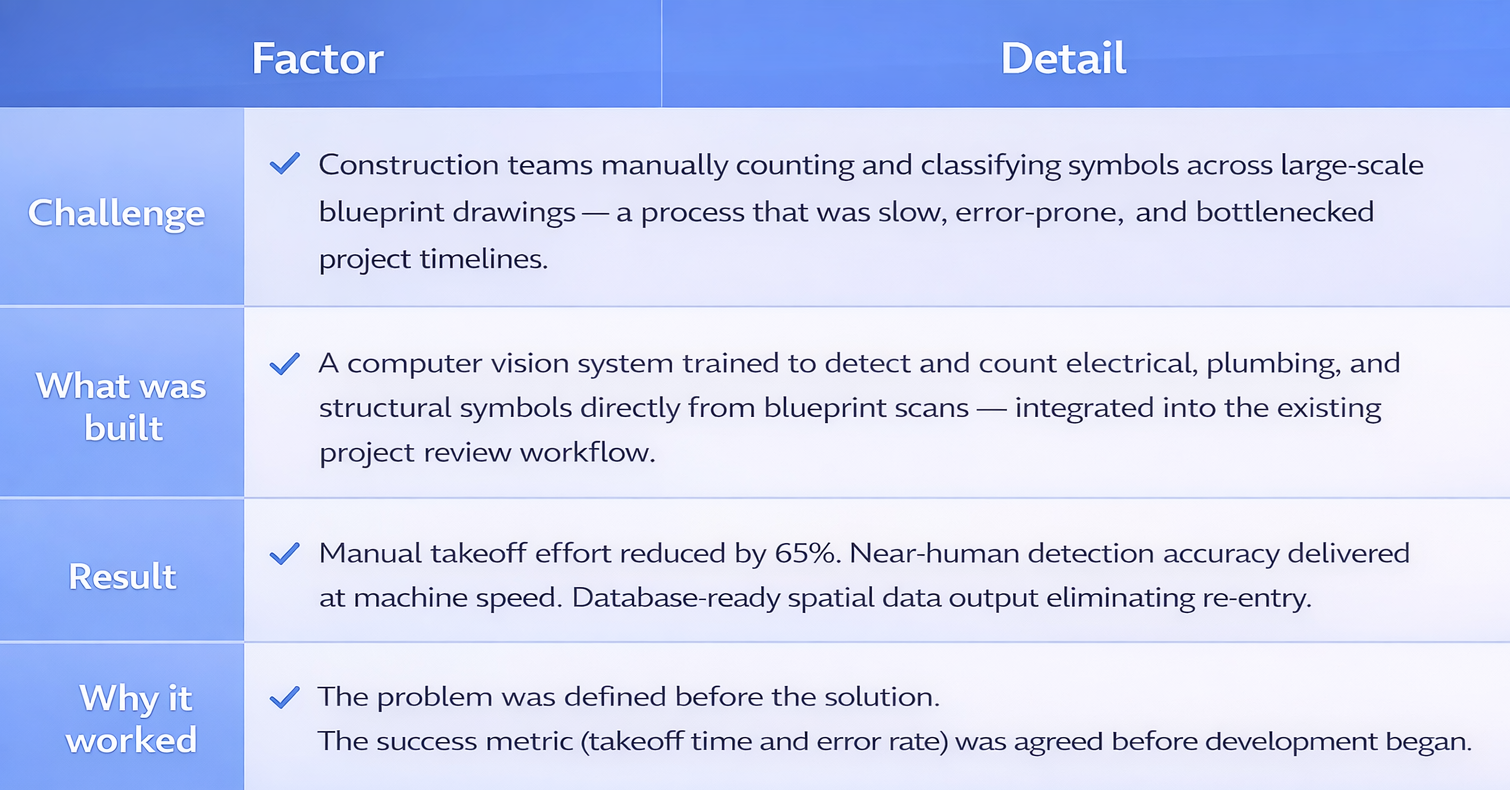

One of the clearest illustrations of this comes from the construction sector, an industry not typically associated with early technology adoption, but one that has started deploying AI with measurable discipline.

AI in construction is increasingly applied to blueprint analysis, materials takeoff, site safety monitoring, and progress reporting. What makes these deployments work is the same thing that makes any AI investment work: there is a physical, measurable problem with a known cost, and the AI addresses it directly.

Case Study — AI-Powered Symbol Detection for Construction Blueprints

This type of project (focused, outcome-defined, integration-first) is what distinguishes AI deployments that generate ROI from those that generate reports. NeuraMonks has built a portfolio of similar engagements across healthcare, fintech, e-commerce, and operations. You can review them at neuramonks.com/ai-case-study.

The Audit Questions Every CTO Should Ask Before Approving AI Spend

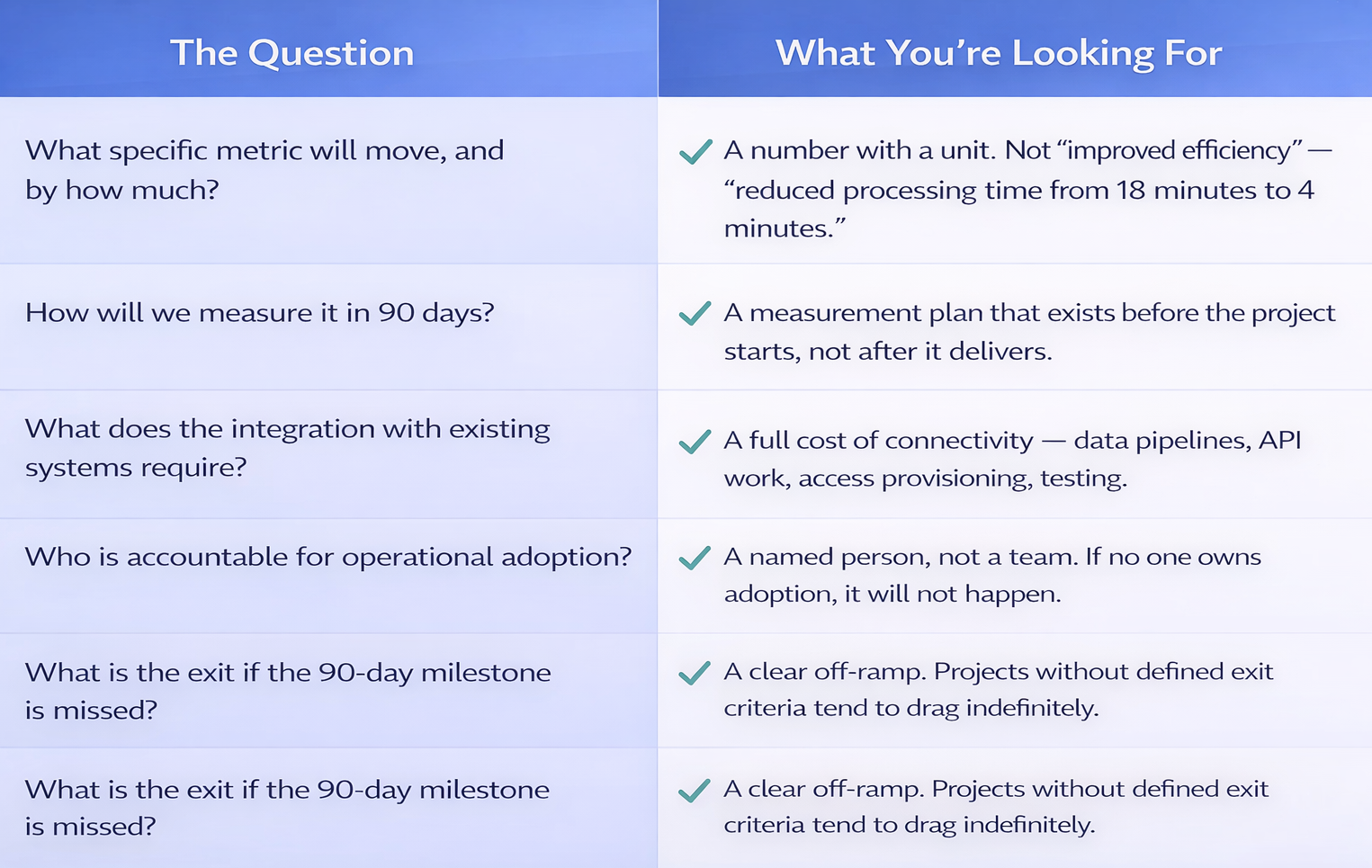

Before any AI infrastructure proposal reaches final approval, it should be able to answer five questions clearly. If any answer is vague, the proposal is not ready.

These five questions are the difference between an AI strategy and an AI budget. The first produces outcomes. The second produces invoices.

Where Workflow Automation Fits the Infrastructure Stack

One of the most consistently underused layers in enterprise AI deployments is workflow orchestration: the connective tissue that makes AI outputs usable inside existing operations. Tools like n8n enable teams to connect AI capabilities (document processing, classification, generation, extraction) to the systems where decisions are actually made: CRMs, ERPs, communication platforms, reporting pipelines.

This layer is where practical ROI happens fastest. It does not require custom model training. It does not require new infrastructure. It requires a clear understanding of which processes produce the most friction and a competent team to bridge the gap.
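
As a rough illustration of what this orchestration layer does, the sketch below routes a classified document to the system that should act on it. It is plain Python rather than an n8n workflow, and every name in it (the `classify` stub, the route labels, the handler functions, the sample documents) is hypothetical. In a real deployment the classifier would be a call to an AI service and the handlers would call a CRM or ERP API.

```python
from typing import Callable, Dict


def classify(text: str) -> str:
    """Stand-in for an AI classification step (keyword rules, illustration only)."""
    lowered = text.lower()
    if "invoice" in lowered:
        return "invoice"
    if "complaint" in lowered:
        return "support"
    return "unknown"


# Hypothetical handlers: each one represents a system where a decision is made.
def send_to_erp(text: str) -> str:
    return f"ERP: queued invoice ({len(text)} chars)"


def send_to_crm(text: str) -> str:
    return f"CRM: opened support ticket ({len(text)} chars)"


def send_to_human(text: str) -> str:
    return "Manual review: no confident route"


# The routing table is the "connective tissue": label -> destination system.
ROUTES: Dict[str, Callable[[str], str]] = {
    "invoice": send_to_erp,
    "support": send_to_crm,
}


def route(text: str) -> str:
    """Classify a document and hand it to the matching system, or to a person."""
    handler = ROUTES.get(classify(text), send_to_human)
    return handler(text)


sample_docs = ["Invoice for steel delivery", "Complaint about late shipment", "Meeting notes"]
results = [route(doc) for doc in sample_docs]
```

The point of the sketch is the shape, not the code: no model training and no new infrastructure, only a mapping from AI output to an existing system, with a manual fallback for anything the classifier cannot place.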

The organisations that are getting AI right in 2025 are not the ones with the largest model infrastructure. They are the ones that have mapped their highest-friction processes and built tight automation pipelines around them. The technology is secondary to the operational thinking.

What a Thoughtful AI Investment Looks Like in 2025

The organisations that are consistently getting ROI from AI share a pattern. They are not necessarily the heaviest spenders. They are the most deliberate:

- They start with an audit of existing processes before evaluating any new AI capability

- They match solution complexity to problem complexity and resist vendor pressure to over-engineer

- They deploy in 90-day cycles with defined KPIs, treating each cycle as a go/no-go decision

- They cost adoption at 20–30% of total implementation budget, not as an afterthought

- They treat AI solutions as operational infrastructure, not R&D experiments, which means ownership, maintenance plans, and performance monitoring from day one
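
The budgeting and go/no-go points in the list above can be sketched as simple arithmetic. This is a minimal illustration, not a prescribed method: the function names, the 25% default adoption share (picked from inside the 20–30% range), and the example budget and KPI figures are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, List


def split_budget(total_budget: float, adoption_share: float = 0.25) -> Dict[str, float]:
    """Split a total implementation budget into development and adoption.

    adoption_share is an assumed value inside the 20-30% range cited above.
    """
    if not 0.0 <= adoption_share <= 1.0:
        raise ValueError("adoption_share must be between 0 and 1")
    adoption = total_budget * adoption_share
    return {"development": total_budget - adoption, "adoption": adoption}


@dataclass
class CycleKpi:
    """One KPI defined before a 90-day cycle starts (hypothetical structure)."""
    name: str
    target: float
    actual: float
    higher_is_better: bool = True

    def met(self) -> bool:
        if self.higher_is_better:
            return self.actual >= self.target
        return self.actual <= self.target


def go_no_go(kpis: List[CycleKpi]) -> str:
    """Return "go" only if KPIs were defined and every one met its target."""
    return "go" if kpis and all(k.met() for k in kpis) else "no-go"


# Hypothetical figures for one cycle.
budget = split_budget(200_000)
kpis = [
    CycleKpi("documents_processed_per_day", target=500, actual=620),
    CycleKpi("manual_review_minutes_per_doc", target=4.0, actual=3.5,
             higher_is_better=False),
]
decision = go_no_go(kpis)
```

Treating an empty KPI list as "no-go" is a deliberate choice in this sketch: a cycle with no defined KPIs has nothing to validate, so it should not be allowed to pass by default.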

NeuraMonks works with organisations across India, the UAE, and the US to audit AI readiness, design deployment roadmaps, and build AI solutions that are scoped and priced against outcomes. The work spans AI consulting, proof-of-concept development, full product builds, and ongoing automation engineering.

If the Infrastructure Question Is Unresolved, It Is Worth Resolving

The AI bubble is real in some places and absent in others. The difference is almost always whether the organisation started with a problem or started with a product. If you are currently evaluating AI infrastructure spend, or reviewing a proposal that has been sitting on your desk, the most valuable thing you can do before committing is to get clarity on what outcome you are actually buying.

NeuraMonks offers AI consulting, audit engagements, and end-to-end development for organisations that want that clarity. We build AI solutions that are scoped against specific outcomes and delivered in cycles short enough to validate before they scale.

Talk to the NeuraMonks Team

Discuss your current AI spend, an upcoming project, or a proposal you want a second perspective on.

Review Our Case Studies

See how we have approached real problems across construction, healthcare, e-commerce, and more.

→ neuramonks.com/ai-case-study